mirror of https://github.com/openfaas/faasd.git

synced 2025-06-18 12:06:36 +00:00

Compare commits

49 Commits

| SHA1 |
|---|
| 35e017b526 |
| e54da61283 |
| 84353d0cae |
| e33a60862d |
| 7b67ff22e6 |
| 19abc9f7b9 |
| 480f566819 |
| cece6cf1ef |
| 22882e2643 |
| 667d74aaf7 |
| 9dcdbfb7e3 |
| 3a9b81200e |
| 734425de25 |
| 70e7e0d25a |
| be8574ecd0 |
| a0110b3019 |
| 87c71b090f |
| dc8667d36a |
| 137d199cb5 |
| 560c295eb0 |
| 93325b713e |
| 2307fc71c5 |
| 853830c018 |
| 262770a0b7 |
| 0efb6d492f |
| 27cfe465ca |
| d6c4ebaf96 |
| e9d1423315 |
| 4bca5c36a5 |
| 10e7a2f07c |
| 4775a9a77c |
| e07186ed5b |
| 2454c2a807 |
| 8bd2ba5334 |
| c379b0ebcc |
| 226a20c362 |
| 02c9dcf74d |
| 0b88fc232d |
| fcd1c9ab54 |
| 592f3d3cc0 |
| b06364c3f4 |
| 75fd07797c |
| 65c2cb0732 |
| 44df1cef98 |
| 881f5171ee |
| 970015ac85 |
| 283e8ed2c1 |
| d49011702b |
| eb369fbb16 |

@ -2,7 +2,7 @@ sudo: required

|

||||

language: go

|

||||

|

||||

go:

|

||||

- '1.12'

|

||||

- '1.13'

|

||||

|

||||

addons:

|

||||

apt:

|

||||

@ -10,6 +10,7 @@ addons:

|

||||

- runc

|

||||

|

||||

script:

|

||||

- make test

|

||||

- make dist

|

||||

- make prepare-test

|

||||

- make test-e2e

|

||||

@ -26,5 +27,5 @@ deploy:

|

||||

on:

|

||||

tags: true

|

||||

|

||||

env:

|

||||

- GO111MODULE=off

|

||||

env: GO111MODULE=off

|

||||

|

||||

|

||||

88

Gopkg.lock

generated


@ -13,7 +13,7 @@

|

||||

version = "v0.4.14"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:b28f788c0be42a6d26f07b282c5ff5f814ab7ad5833810ef0bc5f56fb9bedf11"

|

||||

digest = "1:f06a14a8b60a7a9cdbf14ed52272faf4ff5de4ed7c784ff55b64995be98ac59f"

|

||||

name = "github.com/Microsoft/hcsshim"

|

||||

packages = [

|

||||

".",

|

||||

@ -33,6 +33,7 @@

|

||||

"internal/timeout",

|

||||

"internal/vmcompute",

|

||||

"internal/wclayer",

|

||||

"osversion",

|

||||

]

|

||||

pruneopts = "UT"

|

||||

revision = "9e921883ac929bbe515b39793ece99ce3a9d7706"

|

||||

@ -54,7 +55,7 @@

|

||||

version = "0.7.1"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:386ca0ac781cc1b630b3ed21725759770174140164b3faf3810e6ed6366a970b"

|

||||

digest = "1:cf83a14c8042951b0dcd74758fc32258111ecc7838cbdf5007717172cab9ca9b"

|

||||

name = "github.com/containerd/containerd"

|

||||

packages = [

|

||||

".",

|

||||

@ -102,6 +103,7 @@

|

||||

"remotes/docker/schema1",

|

||||

"rootfs",

|

||||

"runtime/linux/runctypes",

|

||||

"runtime/v2/logging",

|

||||

"runtime/v2/runc/options",

|

||||

"snapshots",

|

||||

"snapshots/proxy",

|

||||

@ -113,10 +115,11 @@

|

||||

version = "v1.3.2"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:7e9da25c7a952c63e31ed367a88eede43224b0663b58eb452870787d8ddb6c70"

|

||||

digest = "1:e4414857969cfbe45c7dab0a012aad4855bf7167c25d672a182cb18676424a0c"

|

||||

name = "github.com/containerd/continuity"

|

||||

packages = [

|

||||

"fs",

|

||||

"pathdriver",

|

||||

"syscallx",

|

||||

"sysx",

|

||||

]

|

||||

@ -167,6 +170,27 @@

|

||||

revision = "4cfb7b568922a3c79a23e438dc52fe537fc9687e"

|

||||

version = "v0.7.1"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:bcf36df8d43860bfde913d008301aef27c6e9a303582118a837c4a34c0d18167"

|

||||

name = "github.com/coreos/go-systemd"

|

||||

packages = ["journal"]

|

||||

pruneopts = "UT"

|

||||

revision = "d3cd4ed1dbcf5835feba465b180436db54f20228"

|

||||

version = "v21"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:92ebc9c068ab8e3fff03a58694ee33830964f6febd0130069aadce328802de14"

|

||||

name = "github.com/docker/cli"

|

||||

packages = [

|

||||

"cli/config",

|

||||

"cli/config/configfile",

|

||||

"cli/config/credentials",

|

||||

"cli/config/types",

|

||||

]

|

||||

pruneopts = "UT"

|

||||

revision = "99c5edceb48d64c1aa5d09b8c9c499d431d98bb9"

|

||||

version = "v19.03.5"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:e495f9f1fb2bae55daeb76e099292054fe1f734947274b3cfc403ccda595d55a"

|

||||

name = "github.com/docker/distribution"

|

||||

@ -178,6 +202,30 @@

|

||||

pruneopts = "UT"

|

||||

revision = "0d3efadf0154c2b8a4e7b6621fff9809655cc580"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:10f9c98f627e9697ec23b7973a683324f1d901dd9bace4a71405c0b2ec554303"

|

||||

name = "github.com/docker/docker"

|

||||

packages = [

|

||||

"pkg/homedir",

|

||||

"pkg/idtools",

|

||||

"pkg/mount",

|

||||

"pkg/system",

|

||||

]

|

||||

pruneopts = "UT"

|

||||

revision = "ea84732a77251e0d7af278e2b7df1d6a59fca46b"

|

||||

version = "v19.03.5"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:9f3f49b4e32d3da2dd6ed07cc568627b53cc80205c0dcf69f4091f027416cb60"

|

||||

name = "github.com/docker/docker-credential-helpers"

|

||||

packages = [

|

||||

"client",

|

||||

"credentials",

|

||||

]

|

||||

pruneopts = "UT"

|

||||

revision = "54f0238b6bf101fc3ad3b34114cb5520beb562f5"

|

||||

version = "v0.6.3"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:0938aba6e09d72d48db029d44dcfa304851f52e2d67cda920436794248e92793"

|

||||

name = "github.com/docker/go-events"

|

||||

@ -185,6 +233,14 @@

|

||||

pruneopts = "UT"

|

||||

revision = "9461782956ad83b30282bf90e31fa6a70c255ba9"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:e95ef557dc3120984bb66b385ae01b4bb8ff56bcde28e7b0d1beed0cccc4d69f"

|

||||

name = "github.com/docker/go-units"

|

||||

packages = ["."]

|

||||

pruneopts = "UT"

|

||||

revision = "519db1ee28dcc9fd2474ae59fca29a810482bfb1"

|

||||

version = "v0.4.0"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:fa6faf4a2977dc7643de38ae599a95424d82f8ffc184045510737010a82c4ecd"

|

||||

name = "github.com/gogo/googleapis"

|

||||

@ -227,14 +283,6 @@

|

||||

revision = "6c65a5562fc06764971b7c5d05c76c75e84bdbf7"

|

||||

version = "v1.3.2"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:582b704bebaa06b48c29b0cec224a6058a09c86883aaddabde889cd1a5f73e1b"

|

||||

name = "github.com/google/uuid"

|

||||

packages = ["."]

|

||||

pruneopts = "UT"

|

||||

revision = "0cd6bf5da1e1c83f8b45653022c74f71af0538a4"

|

||||

version = "v1.1.1"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:cbec35fe4d5a4fba369a656a8cd65e244ea2c743007d8f6c1ccb132acf9d1296"

|

||||

name = "github.com/gorilla/mux"

|

||||

@ -313,12 +361,13 @@

|

||||

version = "0.18.10"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:6f21508bd38feec0d440ca862f5adcb4c955713f3eb4e075b9af731e6ef258ba"

|

||||

digest = "1:7a20be0bdfb2c05a4a7b955cb71645fe2983aa3c0bbae10d6bba3e2dd26ddd0d"

|

||||

name = "github.com/openfaas/faas-provider"

|

||||

packages = [

|

||||

".",

|

||||

"auth",

|

||||

"httputil",

|

||||

"logs",

|

||||

"proxy",

|

||||

"types",

|

||||

]

|

||||

@ -375,15 +424,15 @@

|

||||

revision = "d98352740cb2c55f81556b63d4a1ec64c5a319c2"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:2d9d06cb9d46dacfdbb45f8575b39fc0126d083841a29d4fbf8d97708f43107e"

|

||||

digest = "1:1314b5ef1c0b25257ea02e454291bf042478a48407cfe3ffea7e20323bbf5fdf"

|

||||

name = "github.com/vishvananda/netlink"

|

||||

packages = [

|

||||

".",

|

||||

"nl",

|

||||

]

|

||||

pruneopts = "UT"

|

||||

revision = "a2ad57a690f3caf3015351d2d6e1c0b95c349752"

|

||||

version = "v1.0.0"

|

||||

revision = "f049be6f391489d3f374498fe0c8df8449258372"

|

||||

version = "v1.1.0"

|

||||

|

||||

[[projects]]

|

||||

branch = "master"

|

||||

@ -533,12 +582,19 @@

|

||||

"github.com/containerd/containerd/errdefs",

|

||||

"github.com/containerd/containerd/namespaces",

|

||||

"github.com/containerd/containerd/oci",

|

||||

"github.com/containerd/containerd/remotes",

|

||||

"github.com/containerd/containerd/remotes/docker",

|

||||

"github.com/containerd/containerd/runtime/v2/logging",

|

||||

"github.com/containerd/go-cni",

|

||||

"github.com/google/uuid",

|

||||

"github.com/coreos/go-systemd/journal",

|

||||

"github.com/docker/cli/cli/config",

|

||||

"github.com/docker/cli/cli/config/configfile",

|

||||

"github.com/docker/distribution/reference",

|

||||

"github.com/gorilla/mux",

|

||||

"github.com/morikuni/aec",

|

||||

"github.com/opencontainers/runtime-spec/specs-go",

|

||||

"github.com/openfaas/faas-provider",

|

||||

"github.com/openfaas/faas-provider/logs",

|

||||

"github.com/openfaas/faas-provider/proxy",

|

||||

"github.com/openfaas/faas-provider/types",

|

||||

"github.com/openfaas/faas/gateway/requests",

|

||||

|

||||

@ -43,5 +43,5 @@

|

||||

version = "0.14.0"

|

||||

|

||||

[[constraint]]

|

||||

name = "github.com/google/uuid"

|

||||

version = "1.1.1"

|

||||

name = "github.com/docker/cli"

|

||||

version = "19.3.5"

|

||||

|

||||

15

Makefile


@ -11,6 +11,10 @@ all: local

|

||||

local:

|

||||

CGO_ENABLED=0 GOOS=linux go build -o bin/faasd

|

||||

|

||||

.PHONY: test

|

||||

test:

|

||||

CGO_ENABLED=0 GOOS=linux go test -ldflags $(LDFLAGS) ./...

|

||||

|

||||

.PHONY: dist

|

||||

dist:

|

||||

CGO_ENABLED=0 GOOS=linux go build -ldflags $(LDFLAGS) -a -installsuffix cgo -o bin/faasd

|

||||

@ -25,8 +29,8 @@ prepare-test:

|

||||

sudo /sbin/sysctl -w net.ipv4.conf.all.forwarding=1

|

||||

sudo mkdir -p /opt/cni/bin

|

||||

curl -sSL https://github.com/containernetworking/plugins/releases/download/$(CNI_VERSION)/cni-plugins-linux-$(ARCH)-$(CNI_VERSION).tgz | sudo tar -xz -C /opt/cni/bin

|

||||

sudo cp $(GOPATH)/src/github.com/alexellis/faasd/bin/faasd /usr/local/bin/

|

||||

cd $(GOPATH)/src/github.com/alexellis/faasd/ && sudo /usr/local/bin/faasd install

|

||||

sudo cp $(GOPATH)/src/github.com/openfaas/faasd/bin/faasd /usr/local/bin/

|

||||

cd $(GOPATH)/src/github.com/openfaas/faasd/ && sudo /usr/local/bin/faasd install

|

||||

sudo systemctl status -l containerd --no-pager

|

||||

sudo journalctl -u faasd-provider --no-pager

|

||||

sudo systemctl status -l faasd-provider --no-pager

|

||||

@ -37,9 +41,10 @@ prepare-test:

|

||||

.PHONY: test-e2e

|

||||

test-e2e:

|

||||

sudo cat /var/lib/faasd/secrets/basic-auth-password | /usr/local/bin/faas-cli login --password-stdin

|

||||

/usr/local/bin/faas-cli store deploy figlet --env write_timeout=1s --env read_timeout=1s

|

||||

sleep 2

|

||||

/usr/local/bin/faas-cli store deploy figlet --env write_timeout=1s --env read_timeout=1s --label testing=true

|

||||

sleep 5

|

||||

/usr/local/bin/faas-cli list -v

|

||||

/usr/local/bin/faas-cli describe figlet | grep testing

|

||||

uname | /usr/local/bin/faas-cli invoke figlet

|

||||

uname | /usr/local/bin/faas-cli invoke figlet --async

|

||||

sleep 10

|

||||

@ -47,3 +52,5 @@ test-e2e:

|

||||

/usr/local/bin/faas-cli remove figlet

|

||||

sleep 3

|

||||

/usr/local/bin/faas-cli list

|

||||

sleep 1

|

||||

/usr/local/bin/faas-cli logs figlet --follow=false | grep Forking

|

||||

|

||||

362

README.md


@ -1,16 +1,18 @@

|

||||

# faasd - serverless with containerd

|

||||

# faasd - serverless with containerd and CNI 🐳

|

||||

|

||||

[](https://travis-ci.com/alexellis/faasd)

|

||||

[](https://travis-ci.com/openfaas/faasd)

|

||||

[](https://opensource.org/licenses/MIT)

|

||||

[](https://www.openfaas.com)

|

||||

|

||||

|

||||

faasd is a Golang supervisor that bundles OpenFaaS for use with containerd instead of a container orchestrator like Kubernetes or Docker Swarm.

|

||||

faasd is the same OpenFaaS experience and ecosystem, but without Kubernetes. Functions and microservices can be deployed anywhere with reduced overheads whilst retaining the portability of containers and cloud-native tooling.

|

||||

|

||||

## About faasd:

|

||||

## About faasd

|

||||

|

||||

* faasd is a single Golang binary

|

||||

* faasd is multi-arch, so works on `x86_64`, armhf and arm64

|

||||

* faasd downloads, starts and supervises the core components to run OpenFaaS

|

||||

* is a single Golang binary

|

||||

* can be set-up and left alone to run your applications

|

||||

* is multi-arch, so works on Intel `x86_64` and ARM out of the box

|

||||

* uses the same core components and ecosystem of OpenFaaS

|

||||

|

||||

|

||||

|

||||

@ -18,51 +20,126 @@ faasd is a Golang supervisor that bundles OpenFaaS for use with containerd inste

|

||||

|

||||

## What does faasd deploy?

|

||||

|

||||

* faasd - itself, and its [faas-provider](https://github.com/openfaas/faas-provider)

|

||||

* [Prometheus](https://github.com/prometheus/prometheus)

|

||||

* [the OpenFaaS gateway](https://github.com/openfaas/faas/tree/master/gateway)

|

||||

* faasd - itself, and its [faas-provider](https://github.com/openfaas/faas-provider) for containerd - CRUD for functions and services, implements the OpenFaaS REST API

|

||||

* [Prometheus](https://github.com/prometheus/prometheus) - for monitoring of services, metrics, scaling and dashboards

|

||||

* [OpenFaaS Gateway](https://github.com/openfaas/faas/tree/master/gateway) - the UI portal, CLI, and other OpenFaaS tooling can talk to this.

|

||||

* [OpenFaaS queue-worker for NATS](https://github.com/openfaas/nats-queue-worker) - run your invocations in the background without adding any code. See also: [asynchronous invocations](https://docs.openfaas.com/reference/triggers/#async-nats-streaming)

|

||||

* [NATS](https://nats.io) for asynchronous processing and queues

|

||||

|

||||

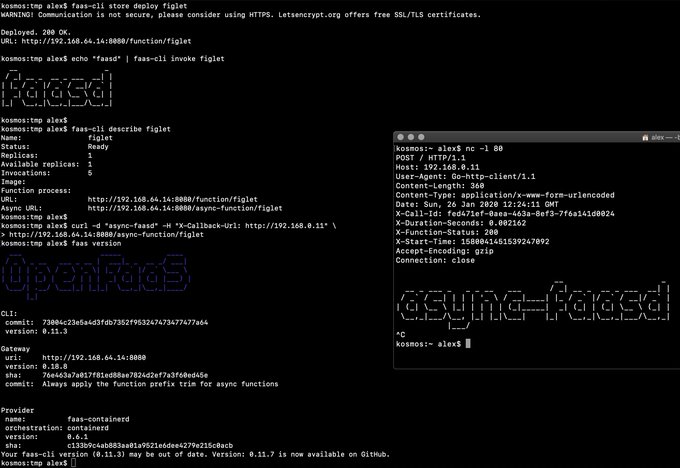

You can use the standard [faas-cli](https://github.com/openfaas/faas-cli) with faasd along with pre-packaged functions in the Function Store, or build your own with the template store.

|

||||

You'll also need:

|

||||

|

||||

### faasd supports:

|

||||

* [CNI](https://github.com/containernetworking/plugins)

|

||||

* [containerd](https://github.com/containerd/containerd)

|

||||

* [runc](https://github.com/opencontainers/runc)

|

||||

|

||||

You can use the standard [faas-cli](https://github.com/openfaas/faas-cli) along with pre-packaged functions from *the Function Store*, or build your own using any OpenFaaS template.

|

||||

|

||||

## Tutorials

|

||||

|

||||

### Get started on DigitalOcean, or any other IaaS

|

||||

|

||||

If your IaaS supports `user_data` aka "cloud-init", then this guide is for you. If not, then check out the approach and feel free to run each step manually.

|

||||

|

||||

* [Build a Serverless appliance with faasd](https://blog.alexellis.io/deploy-serverless-faasd-with-cloud-init/)

|

||||

|

||||

### Run locally on MacOS, Linux, or Windows with Multipass.run

|

||||

|

||||

* [Get up and running with your own faasd installation on your Mac/Ubuntu or Windows with cloud-config](https://gist.github.com/alexellis/6d297e678c9243d326c151028a3ad7b9)

|

||||

|

||||

### Get started on armhf / Raspberry Pi

|

||||

|

||||

You can run this tutorial on your Raspberry Pi, or adapt the steps for a regular Linux VM/VPS host.

|

||||

|

||||

* [faasd - lightweight Serverless for your Raspberry Pi](https://blog.alexellis.io/faasd-for-lightweight-serverless/)

|

||||

|

||||

### Terraform for DigitalOcean

|

||||

|

||||

Automate everything in under 60 seconds and get a public URL and IP address back. Customise as required, or adapt to your preferred cloud such as AWS EC2.

|

||||

|

||||

* [Provision faasd 0.7.5 on DigitalOcean with Terraform 0.12.0](https://gist.github.com/alexellis/fd618bd2f957eb08c44d086ef2fc3906)

|

||||

|

||||

### A note on private repos / registries

|

||||

|

||||

To use private image repos, `~/.docker/config.json` needs to be copied to `/var/lib/faasd/.docker/config.json`.

|

||||

|

||||

If you'd like to set up your own private registry, [see this tutorial](https://blog.alexellis.io/get-a-tls-enabled-docker-registry-in-5-minutes/).

|

||||

|

||||

Beware that running `docker login` on MacOS and Windows may create an empty file with your credentials stored in the system helper.

|

||||

|

||||

Alternatively, you can use the `registry-login` command from the OpenFaaS Cloud bootstrap tool (ofc-bootstrap):

|

||||

|

||||

```bash

|

||||

curl -sLSf https://raw.githubusercontent.com/openfaas-incubator/ofc-bootstrap/master/get.sh | sudo sh

|

||||

|

||||

ofc-bootstrap registry-login --username <your-registry-username> --password-stdin

|

||||

# (then enter your password and hit return)

|

||||

```

|

||||

The file will be created in `./credentials/`
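The copy step for `~/.docker/config.json` described above can be sketched as a small shell function. This is only an illustration: the name `install_registry_auth` is hypothetical and not part of faasd, and the sketch assumes the source file already holds usable credentials (it does not detect credentials kept in a system helper).

```sh
# install_registry_auth SRC DEST_DIR
# Copies the Docker credentials file SRC to DEST_DIR/.docker/config.json.
# The function name is hypothetical; it is not part of faasd.
install_registry_auth() {
    src="$1"
    dest_dir="$2"

    # Refuse to continue if the source file is missing.
    if [ ! -f "$src" ]; then
        echo "credentials file not found: $src" >&2
        return 1
    fi

    # Create the target directory, then copy the file into place.
    mkdir -p "$dest_dir/.docker" &&
        cp "$src" "$dest_dir/.docker/config.json"
}
```

With the paths from the text, it would be called as `install_registry_auth ~/.docker/config.json /var/lib/faasd`, from a shell that is allowed to write under `/var/lib/faasd`.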

|

||||

|

||||

### Logs for functions

|

||||

|

||||

You can view the logs of functions using `journalctl`:

|

||||

|

||||

```bash

|

||||

journalctl -t openfaas-fn:FUNCTION_NAME

|

||||

|

||||

|

||||

faas-cli store deploy figlet

|

||||

journalctl -t openfaas-fn:figlet -f &

|

||||

echo logs | faas-cli invoke figlet

|

||||

```

|

||||

|

||||

### Manual / developer instructions

|

||||

|

||||

See [here for manual / developer instructions](docs/DEV.md)

|

||||

|

||||

## Getting help

|

||||

|

||||

### Docs

|

||||

|

||||

The [OpenFaaS docs](https://docs.openfaas.com/) provide a wealth of information and are kept up to date with new features.

|

||||

|

||||

### Function and template store

|

||||

|

||||

For community functions see `faas-cli store --help`

|

||||

|

||||

For templates built by the community, see `faas-cli template store list`. You can also use the `dockerfile` template if you just want to migrate an existing service without the benefits of using a template.

|

||||

|

||||

### Workshop

|

||||

|

||||

[The OpenFaaS workshop](https://github.com/openfaas/workshop/) is a set of 12 self-paced labs and provides a great starting point.

|

||||

|

||||

### Community support

|

||||

|

||||

An active community of almost 3000 users awaits you on Slack. Over 250 of those users are also contributors and help maintain the code.

|

||||

|

||||

* [Join Slack](https://slack.openfaas.io/)

|

||||

|

||||

## Backlog

|

||||

|

||||

### Supported operations

|

||||

|

||||

* `faas login`

|

||||

* `faas up`

|

||||

* `faas list`

|

||||

* `faas describe`

|

||||

* `faas deploy --update=true --replace=false`

|

||||

* `faas invoke --async`

|

||||

* `faas invoke`

|

||||

* `faas rm`

|

||||

* `faas login`

|

||||

* `faas store list/deploy/inspect`

|

||||

* `faas up`

|

||||

* `faas version`

|

||||

* `faas invoke --async`

|

||||

* `faas namespace`

|

||||

* `faas secret`

|

||||

* `faas logs`

|

||||

|

||||

Scale from and to zero is also supported. On a Dell XPS with a small, pre-pulled image unpausing an existing task took 0.19s and starting a task for a killed function took 0.39s. There may be further optimizations to be gained.

|

||||

|

||||

Other operations are pending development in the provider such as:

|

||||

|

||||

* `faas logs`

|

||||

* `faas secret`

|

||||

* `faas auth`

|

||||

* `faas auth` - supported for Basic Authentication, but OAuth2 & OIDC require a patch

|

||||

|

||||

### Pre-reqs

|

||||

|

||||

* Linux

|

||||

|

||||

PC / Cloud - any Linux that containerd works on should be fair game, but faasd is tested with Ubuntu 18.04

|

||||

|

||||

On a Raspberry Pi, Raspbian Stretch or newer also works fine

|

||||

|

||||

For MacOS users try [multipass.run](https://multipass.run) or [Vagrant](https://www.vagrantup.com/)

|

||||

|

||||

For Windows users, install [Git Bash](https://git-scm.com/downloads) along with multipass or vagrant. You can also use WSL1 or WSL2 which provides a Linux environment.

|

||||

|

||||

You will also need [containerd v1.3.2](https://github.com/containerd/containerd) and the [CNI plugins v0.8.5](https://github.com/containernetworking/plugins)

|

||||

|

||||

[faas-cli](https://github.com/openfaas/faas-cli) is optional, but recommended.

|

||||

|

||||

## Backlog

|

||||

## Todo

|

||||

|

||||

Pending:

|

||||

|

||||

@ -75,6 +152,7 @@ Pending:

|

||||

|

||||

Done:

|

||||

|

||||

* [x] Provide a cloud-config.txt file for automated deployments of `faasd`

|

||||

* [x] Inject / manage IPs between core components for service to service communication - i.e. so Prometheus can scrape the OpenFaaS gateway - done via `/etc/hosts` mount

|

||||

* [x] Add queue-worker and NATS

|

||||

* [x] Create faasd.service and faasd-provider.service

|

||||

@ -86,217 +164,3 @@ Done:

|

||||

* [x] Setup custom working directory for faasd `/var/lib/faasd/`

|

||||

* [x] Use CNI to create network namespaces and adapters

|

||||

|

||||

## Tutorial: Get started on armhf / Raspberry Pi

|

||||

|

||||

You can run this tutorial on your Raspberry Pi, or adapt the steps for a regular Linux VM/VPS host.

|

||||

|

||||

* [faasd - lightweight Serverless for your Raspberry Pi](https://blog.alexellis.io/faasd-for-lightweight-serverless/)

|

||||

|

||||

## Tutorial: Multipass & KVM for MacOS/Linux, or Windows (with cloud-config)

|

||||

|

||||

* [Get up and running with your own faasd installation on your Mac/Ubuntu or Windows with cloud-config](https://gist.github.com/alexellis/6d297e678c9243d326c151028a3ad7b9)

|

||||

|

||||

## Tutorial: Manual installation

|

||||

|

||||

### Get containerd

|

||||

|

||||

You have three options - binaries for PC, binaries for armhf, or build from source.

|

||||

|

||||

* Install containerd `x86_64` only

|

||||

|

||||

```sh

|

||||

export VER=1.3.2

|

||||

curl -sLSf https://github.com/containerd/containerd/releases/download/v$VER/containerd-$VER.linux-amd64.tar.gz > /tmp/containerd.tar.gz \

|

||||

&& sudo tar -xvf /tmp/containerd.tar.gz -C /usr/local/bin/ --strip-components=1

|

||||

|

||||

containerd -version

|

||||

```

|

||||

|

||||

* Or get my containerd binaries for armhf

|

||||

|

||||

Building containerd on armhf is extremely slow.

|

||||

|

||||

```sh

|

||||

curl -sSL https://github.com/alexellis/containerd-armhf/releases/download/v1.3.2/containerd.tgz | sudo tar -xvz --strip-components=2 -C /usr/local/bin/

|

||||

```

|

||||

|

||||

* Or clone / build / install [containerd](https://github.com/containerd/containerd) from source:

|

||||

|

||||

```sh

|

||||

export GOPATH=$HOME/go/

|

||||

mkdir -p $GOPATH/src/github.com/containerd

|

||||

cd $GOPATH/src/github.com/containerd

|

||||

git clone https://github.com/containerd/containerd

|

||||

cd containerd

|

||||

git fetch origin --tags

|

||||

git checkout v1.3.2

|

||||

|

||||

make

|

||||

sudo make install

|

||||

|

||||

containerd --version

|

||||

```

|

||||

|

||||

Kill any old containerd version:

|

||||

|

||||

```sh

|

||||

# Kill any old version

|

||||

sudo killall containerd

|

||||

sudo systemctl disable containerd

|

||||

```

|

||||

|

||||

Start containerd in a new terminal:

|

||||

|

||||

```sh

|

||||

sudo containerd &

|

||||

```

|

||||

#### Enable forwarding

|

||||

|

||||

> This is required to allow containers in containerd to access the Internet via your computer's primary network interface.

|

||||

|

||||

```sh

|

||||

sudo /sbin/sysctl -w net.ipv4.conf.all.forwarding=1

|

||||

```

|

||||

|

||||

Make the setting permanent:

|

||||

|

||||

```sh

|

||||

echo "net.ipv4.conf.all.forwarding=1" | sudo tee -a /etc/sysctl.conf

|

||||

```

|

||||

|

||||

### Hacking (build from source)

|

||||

|

||||

#### Get build packages

|

||||

|

||||

```sh

|

||||

sudo apt update \

|

||||

&& sudo apt install -qy \

|

||||

runc \

|

||||

bridge-utils

|

||||

```

|

||||

|

||||

You may find alternatives for CentOS and other distributions.

|

||||

|

||||

#### Install Go 1.13 (x86_64)

|

||||

|

||||

```sh

|

||||

curl -sSLf https://dl.google.com/go/go1.13.6.linux-amd64.tar.gz > go.tgz

|

||||

sudo rm -rf /usr/local/go/

|

||||

sudo mkdir -p /usr/local/go/

|

||||

sudo tar -xvf go.tgz -C /usr/local/go/ --strip-components=1

|

||||

|

||||

export GOPATH=$HOME/go/

|

||||

export PATH=$PATH:/usr/local/go/bin/

|

||||

|

||||

go version

|

||||

```

|

||||

|

||||

#### Or on Raspberry Pi (armhf)

|

||||

|

||||

```sh

|

||||

curl -SLsf https://dl.google.com/go/go1.13.6.linux-armv6l.tar.gz > go.tgz

|

||||

sudo rm -rf /usr/local/go/

|

||||

sudo mkdir -p /usr/local/go/

|

||||

sudo tar -xvf go.tgz -C /usr/local/go/ --strip-components=1

|

||||

|

||||

export GOPATH=$HOME/go/

|

||||

export PATH=$PATH:/usr/local/go/bin/

|

||||

|

||||

go version

|

||||

```

|

||||

|

||||

#### Install the CNI plugins:

|

||||

|

||||

* For PC run `export ARCH=amd64`

|

||||

* For RPi/armhf run `export ARCH=arm`

|

||||

* For arm64 run `export ARCH=arm64`

|

||||

|

||||

Then run:

|

||||

|

||||

```sh

|

||||

export ARCH=amd64

|

||||

export CNI_VERSION=v0.8.5

|

||||

|

||||

sudo mkdir -p /opt/cni/bin

|

||||

curl -sSL https://github.com/containernetworking/plugins/releases/download/${CNI_VERSION}/cni-plugins-linux-${ARCH}-${CNI_VERSION}.tgz | sudo tar -xz -C /opt/cni/bin

|

||||

```
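Rather than exporting `ARCH` by hand, the value could be derived from `uname -m`. The sketch below is an assumption-laden illustration: the helper names `cni_arch` and `cni_url` are hypothetical, and the machine-type strings it recognises are the common ones for the three platforms listed above, not an exhaustive set.

```sh
# cni_arch MACHINE
# Maps a `uname -m` value to the ARCH value used by the CNI plugin releases.
# Hypothetical helper; the mapping covers only common machine types.
cni_arch() {
    case "$1" in
        x86_64) echo amd64 ;;
        armv6l|armv7l) echo arm ;;
        aarch64|arm64) echo arm64 ;;
        *) echo "unsupported machine type: $1" >&2; return 1 ;;
    esac
}

# cni_url VERSION ARCH
# Builds the download URL in the same form as the curl command above.
cni_url() {
    echo "https://github.com/containernetworking/plugins/releases/download/$1/cni-plugins-linux-$2-$1.tgz"
}
```

For example, `ARCH=$(cni_arch "$(uname -m)")` followed by `curl -sSL "$(cni_url v0.8.5 "$ARCH")" | sudo tar -xz -C /opt/cni/bin` would reproduce the manual steps.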

|

||||

|

||||

Run or install faasd, which brings up the gateway and Prometheus as containers

|

||||

|

||||

```sh

|

||||

cd $GOPATH/src/github.com/alexellis/faasd

|

||||

go build

|

||||

|

||||

# Install with systemd

|

||||

# sudo ./faasd install

|

||||

|

||||

# Or run interactively

|

||||

# sudo ./faasd up

|

||||

```

|

||||

|

||||

#### Build and run `faasd` (binaries)

|

||||

|

||||

```sh

|

||||

# For x86_64

|

||||

sudo curl -fSLs "https://github.com/alexellis/faasd/releases/download/0.6.2/faasd" \

|

||||

-o "/usr/local/bin/faasd" \

|

||||

&& sudo chmod a+x "/usr/local/bin/faasd"

|

||||

|

||||

# armhf

|

||||

sudo curl -fSLs "https://github.com/alexellis/faasd/releases/download/0.6.2/faasd-armhf" \

|

||||

-o "/usr/local/bin/faasd" \

|

||||

&& sudo chmod a+x "/usr/local/bin/faasd"

|

||||

|

||||

# arm64

|

||||

sudo curl -fSLs "https://github.com/alexellis/faasd/releases/download/0.6.2/faasd-arm64" \

|

||||

-o "/usr/local/bin/faasd" \

|

||||

&& sudo chmod a+x "/usr/local/bin/faasd"

|

||||

```

|

||||

|

||||

#### At run-time

|

||||

|

||||

Look in `hosts` in the current working folder or in `/var/lib/faasd/` to get the IP for the gateway or Prometheus

|

||||

|

||||

```sh

|

||||

127.0.0.1 localhost

|

||||

10.62.0.1 faasd-provider

|

||||

|

||||

10.62.0.2 prometheus

|

||||

10.62.0.3 gateway

|

||||

10.62.0.4 nats

|

||||

10.62.0.5 queue-worker

|

||||

```

|

||||

|

||||

The IP addresses are dynamic and may change on every launch.
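Because the addresses can change, it may be easier to look them up than to copy them by hand. The following is a minimal sketch, assuming the `hosts` file keeps the layout shown above (an IP address followed by one or more names); `host_ip` is a hypothetical helper name.

```sh
# host_ip NAME HOSTS_FILE
# Prints the IP address mapped to NAME in a hosts-format file.
# Returns a non-zero status if NAME is not listed.
host_ip() {
    awk -v name="$1" '
        # Skip comment lines, then compare every name column with NAME.
        $1 !~ /^#/ {
            for (i = 2; i <= NF; i++)
                if ($i == name) { print $1; found = 1; exit }
        }
        END { exit found ? 0 : 1 }
    ' "$2"
}
```

One possible use is `export OPENFAAS_URL="http://$(host_ip gateway /var/lib/faasd/hosts):8080"`, so that `faas-cli` talks to the gateway without the address being hard-coded.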

|

||||

|

||||

Since faasd-provider uses containerd heavily it is not running as a container, but as a stand-alone process. Its port is available via the bridge interface, i.e. `openfaas0`

|

||||

|

||||

* Prometheus will run on the Prometheus IP on port 9090, i.e. http://[prometheus_ip]:9090/targets

|

||||

|

||||

* faasd-provider runs on 10.62.0.1:8081, i.e. directly on the host, and accessible via the bridge interface from CNI.

|

||||

|

||||

* Now go to the gateway's IP address as shown above on port 8080, i.e. http://[gateway_ip]:8080 - you can also use this address to deploy OpenFaaS Functions via the `faas-cli`.

|

||||

|

||||

* basic-auth

|

||||

|

||||

You will then need the basic-auth password, which is written to `/var/lib/faasd/secrets/basic-auth-password` if you followed the above instructions.

The default Basic Auth username is `admin`, which is written to `/var/lib/faasd/secrets/basic-auth-user`. To use a non-standard user, create this file and add your username (no newlines or other characters).
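Reading the username from the secrets folder can be sketched as below. The helper name `basic_auth_user` is hypothetical, and falling back to `admin` when the file is missing or empty is an assumption made for this example rather than documented faasd behaviour.

```sh
# basic_auth_user SECRETS_DIR
# Prints the username stored in SECRETS_DIR/basic-auth-user.
# Falls back to "admin" when the file is missing or empty (an assumption).
basic_auth_user() {
    user_file="$1/basic-auth-user"
    if [ -s "$user_file" ]; then
        # Strip any stray newline so the name can be passed as a flag value.
        tr -d '\n' < "$user_file"
    else
        printf 'admin'
    fi
}
```

The result could then be combined with the password file, for example `sudo cat /var/lib/faasd/secrets/basic-auth-password | faas-cli login --username "$(basic_auth_user /var/lib/faasd/secrets)" --password-stdin`. Depending on file permissions, reading the username file may also need root.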

|

||||

|

||||

#### Installation with systemd

|

||||

|

||||

* `faasd install` - install faasd and containerd with systemd; this must be run from `$GOPATH/src/github.com/alexellis/faasd`

|

||||

* `journalctl -u faasd -f` - faasd service logs

|

||||

* `journalctl -u faasd-provider -f` - faasd-provider service logs

|

||||

|

||||

### Appendix

|

||||

|

||||

#### Links

|

||||

|

||||

https://github.com/renatofq/ctrofb/blob/31968e4b4893f3603e9998f21933c4131523bb5d/cmd/network.go

|

||||

|

||||

https://github.com/renatofq/catraia/blob/c4f62c86bddbfadbead38cd2bfe6d920fba26dce/catraia-net/network.go

|

||||

|

||||

https://github.com/containernetworking/plugins

|

||||

|

||||

https://github.com/containerd/go-cni

|

||||

|

||||

|

||||

@ -11,13 +11,14 @@ runcmd:

|

||||

- curl -sLSf https://github.com/containerd/containerd/releases/download/v1.3.2/containerd-1.3.2.linux-amd64.tar.gz > /tmp/containerd.tar.gz && tar -xvf /tmp/containerd.tar.gz -C /usr/local/bin/ --strip-components=1

|

||||

- curl -SLfs https://raw.githubusercontent.com/containerd/containerd/v1.3.2/containerd.service | tee /etc/systemd/system/containerd.service

|

||||

- systemctl daemon-reload && systemctl start containerd

|

||||

- systemctl enable containerd

|

||||

- /sbin/sysctl -w net.ipv4.conf.all.forwarding=1

|

||||

- mkdir -p /opt/cni/bin

|

||||

- curl -sSL https://github.com/containernetworking/plugins/releases/download/v0.8.5/cni-plugins-linux-amd64-v0.8.5.tgz | tar -xz -C /opt/cni/bin

|

||||

- mkdir -p /go/src/github.com/alexellis/

|

||||

- cd /go/src/github.com/alexellis/ && git clone https://github.com/alexellis/faasd

|

||||

- curl -fSLs "https://github.com/alexellis/faasd/releases/download/0.7.0/faasd" --output "/usr/local/bin/faasd" && chmod a+x "/usr/local/bin/faasd"

|

||||

- cd /go/src/github.com/alexellis/faasd/ && /usr/local/bin/faasd install

|

||||

- mkdir -p /go/src/github.com/openfaas/

|

||||

- cd /go/src/github.com/openfaas/ && git clone https://github.com/openfaas/faasd

|

||||

- curl -fSLs "https://github.com/openfaas/faasd/releases/download/0.8.1/faasd" --output "/usr/local/bin/faasd" && chmod a+x "/usr/local/bin/faasd"

|

||||

- cd /go/src/github.com/openfaas/faasd/ && /usr/local/bin/faasd install

|

||||

- systemctl status -l containerd --no-pager

|

||||

- journalctl -u faasd-provider --no-pager

|

||||

- systemctl status -l faasd-provider --no-pager

|

||||

|

||||

60

cmd/collect.go

Normal file


@ -0,0 +1,60 @@

package cmd

import (
	"bufio"
	"context"
	"fmt"
	"io"
	"sync"

	"github.com/containerd/containerd/runtime/v2/logging"
	"github.com/coreos/go-systemd/journal"
	"github.com/spf13/cobra"
)

func CollectCommand() *cobra.Command {
	return collectCmd
}

var collectCmd = &cobra.Command{
	Use:   "collect",
	Short: "Collect logs to the journal",
	RunE:  runCollect,
}

func runCollect(_ *cobra.Command, _ []string) error {
	logging.Run(logStdio)
	return nil
}

// logStdio copied from
// https://github.com/containerd/containerd/pull/3085
// https://github.com/stellarproject/orbit
func logStdio(ctx context.Context, config *logging.Config, ready func() error) error {
	// construct any log metadata for the container
	vars := map[string]string{
		"SYSLOG_IDENTIFIER": fmt.Sprintf("%s:%s", config.Namespace, config.ID),
	}
	var wg sync.WaitGroup
	wg.Add(2)
	// forward both stdout and stderr to the journal
	go copy(&wg, config.Stdout, journal.PriInfo, vars)
	go copy(&wg, config.Stderr, journal.PriErr, vars)
	// signal that we are ready and setup for the container to be started
	if err := ready(); err != nil {
		return err
	}
	wg.Wait()
	return nil
}

func copy(wg *sync.WaitGroup, r io.Reader, pri journal.Priority, vars map[string]string) {
	defer wg.Done()
	s := bufio.NewScanner(r)
	for s.Scan() {
		if s.Err() != nil {
			return
		}
		journal.Send(s.Text(), pri, vars)
	}
}

@ -6,7 +6,7 @@ import (

|

||||

"os"

|

||||

"path"

|

||||

|

||||

systemd "github.com/alexellis/faasd/pkg/systemd"

|

||||

systemd "github.com/openfaas/faasd/pkg/systemd"

|

||||

"github.com/pkg/errors"

|

||||

|

||||

"github.com/spf13/cobra"

|

||||

|

||||

@ -9,29 +9,44 @@ import (

|

||||

"os"

|

||||

"path"

|

||||

|

||||

"github.com/alexellis/faasd/pkg/provider/config"

|

||||

"github.com/alexellis/faasd/pkg/provider/handlers"

|

||||

"github.com/containerd/containerd"

|

||||

bootstrap "github.com/openfaas/faas-provider"

|

||||

"github.com/openfaas/faas-provider/logs"

|

||||

"github.com/openfaas/faas-provider/proxy"

|

||||

"github.com/openfaas/faas-provider/types"

|

||||

"github.com/openfaas/faasd/pkg/cninetwork"

|

||||

faasdlogs "github.com/openfaas/faasd/pkg/logs"

|

||||

"github.com/openfaas/faasd/pkg/provider/config"

|

||||

"github.com/openfaas/faasd/pkg/provider/handlers"

|

||||

"github.com/spf13/cobra"

|

||||

)

|

||||

|

||||

var providerCmd = &cobra.Command{

|

||||

func makeProviderCmd() *cobra.Command {

|

||||

var command = &cobra.Command{

|

||||

Use: "provider",

|

||||

Short: "Run the faasd faas-provider",

|

||||

RunE: runProvider,

|

||||

}

|

||||

Short: "Run the faasd-provider",

|

||||

}

|

||||

|

||||

func runProvider(_ *cobra.Command, _ []string) error {

|

||||

command.Flags().String("pull-policy", "Always", `Set to "Always" to force a pull of images upon deployment, or "IfNotPresent" to try to use a cached image.`)

|

||||

|

||||

command.RunE = func(_ *cobra.Command, _ []string) error {

|

||||

|

||||

pullPolicy, flagErr := command.Flags().GetString("pull-policy")

|

||||

if flagErr != nil {

|

||||

return flagErr

|

||||

}

|

||||

|

||||

alwaysPull := false

|

||||

if pullPolicy == "Always" {

|

||||

alwaysPull = true

|

||||

}

|

||||

|

||||

config, providerConfig, err := config.ReadFromEnv(types.OsEnv{})

|

||||

if err != nil {

|

||||

return err

|

||||

}

|

||||

|

||||

log.Printf("faas-containerd starting..\tService Timeout: %s\n", config.WriteTimeout.String())

|

||||

log.Printf("faasd-provider starting..\tService Timeout: %s\n", config.WriteTimeout.String())

|

||||

|

||||

wd, err := os.Getwd()

|

||||

if err != nil {

|

||||

@ -52,7 +67,7 @@ func runProvider(_ *cobra.Command, _ []string) error {

|

||||

return fmt.Errorf("cannot write resolv.conf file: %s", writeResolvErr)

|

||||

}

|

||||

|

||||

cni, err := handlers.InitNetwork()

|

||||

cni, err := cninetwork.InitNetwork()

|

||||

if err != nil {

|

||||

return err

|

||||

}

|

||||

@ -71,21 +86,24 @@ func runProvider(_ *cobra.Command, _ []string) error {

|

||||

bootstrapHandlers := types.FaaSHandlers{

|

||||

FunctionProxy: proxy.NewHandlerFunc(*config, invokeResolver),

|

||||

DeleteHandler: handlers.MakeDeleteHandler(client, cni),

|

||||

DeployHandler: handlers.MakeDeployHandler(client, cni, userSecretPath),

|

||||

DeployHandler: handlers.MakeDeployHandler(client, cni, userSecretPath, alwaysPull),

|

||||

FunctionReader: handlers.MakeReadHandler(client),

|

||||

ReplicaReader: handlers.MakeReplicaReaderHandler(client),

|

||||

ReplicaUpdater: handlers.MakeReplicaUpdateHandler(client, cni),

|

||||

UpdateHandler: handlers.MakeUpdateHandler(client, cni, userSecretPath),

|

||||

UpdateHandler: handlers.MakeUpdateHandler(client, cni, userSecretPath, alwaysPull),

|

||||

HealthHandler: func(w http.ResponseWriter, r *http.Request) {},

|

||||

InfoHandler: handlers.MakeInfoHandler(Version, GitCommit),

|

||||

ListNamespaceHandler: listNamespaces(),

|

||||

SecretHandler: handlers.MakeSecretHandler(client, userSecretPath),

|

||||

LogHandler: logs.NewLogHandlerFunc(faasdlogs.New(), config.ReadTimeout),

|

||||

}

|

||||

|

||||

log.Printf("Listening on TCP port: %d\n", *config.TCPPort)

|

||||

bootstrap.Serve(&bootstrapHandlers, config)

|

||||

|

||||

return nil

|

||||

}

|

||||

|

||||

return command

|

||||

}

|

||||

|

||||

func listNamespaces() func(w http.ResponseWriter, r *http.Request) {

|

||||

|

||||

@ -14,7 +14,12 @@ func init() {

|

||||

rootCommand.AddCommand(versionCmd)

|

||||

rootCommand.AddCommand(upCmd)

|

||||

rootCommand.AddCommand(installCmd)

|

||||

rootCommand.AddCommand(providerCmd)

|

||||

rootCommand.AddCommand(makeProviderCmd())

|

||||

rootCommand.AddCommand(collectCmd)

|

||||

}

|

||||

|

||||

func RootCommand() *cobra.Command {

|

||||

return rootCommand

|

||||

}

|

||||

|

||||

var (

|

||||

|

||||

113

cmd/up.go


@ -2,7 +2,6 @@ package cmd

|

||||

|

||||

import (

|

||||

"fmt"

|

||||

"io"

|

||||

"io/ioutil"

|

||||

"log"

|

||||

"os"

|

||||

@ -15,8 +14,8 @@ import (

|

||||

|

||||

"github.com/pkg/errors"

|

||||

|

||||

"github.com/alexellis/faasd/pkg"

|

||||

"github.com/alexellis/k3sup/pkg/env"

|

||||

"github.com/openfaas/faasd/pkg"

|

||||

"github.com/sethvargo/go-password/password"

|

||||

"github.com/spf13/cobra"

|

||||

)

|

||||

@ -27,32 +26,6 @@ var upCmd = &cobra.Command{

|

||||

RunE: runUp,

|

||||

}

|

||||

|

||||

// defaultCNIConf is a CNI configuration that enables network access to containers (docker-bridge style)

|

||||

var defaultCNIConf = fmt.Sprintf(`

|

||||

{

|

||||

"cniVersion": "0.4.0",

|

||||

"name": "%s",

|

||||

"plugins": [

|

||||

{

|

||||

"type": "bridge",

|

||||

"bridge": "%s",

|

||||

"isGateway": true,

|

||||

"ipMasq": true,

|

||||

"ipam": {

|

||||

"type": "host-local",

|

||||

"subnet": "%s",

|

||||

"routes": [

|

||||

{ "dst": "0.0.0.0/0" }

|

||||

]

|

||||

}

|

||||

},

|

||||

{

|

||||

"type": "firewall"

|

||||

}

|

||||

]

|

||||

}

|

||||

`, pkg.DefaultNetworkName, pkg.DefaultBridgeName, pkg.DefaultSubnet)

|

||||

|

||||

const containerSecretMountDir = "/run/secrets"

|

||||

|

||||

func runUp(_ *cobra.Command, _ []string) error {

|

||||

@ -80,10 +53,6 @@ func runUp(_ *cobra.Command, _ []string) error {

|

||||

return errors.Wrap(basicAuthErr, "cannot create basic-auth-* files")

|

||||

}

|

||||

|

||||

if makeNetworkErr := makeNetworkConfig(); makeNetworkErr != nil {

|

||||

return errors.Wrap(makeNetworkErr, "error creating network config")

|

||||

}

|

||||

|

||||

services := makeServiceDefinitions(clientSuffix)

|

||||

|

||||

start := time.Now()

|

||||

@ -147,6 +116,7 @@ func runUp(_ *cobra.Command, _ []string) error {

|

||||

log.Println(fileErr)

|

||||

return

|

||||

}

|

||||

|

||||

host := ""

|

||||

lines := strings.Split(string(fileData), "\n")

|

||||

for _, line := range lines {

|

||||

@ -203,7 +173,7 @@ func makeServiceDefinitions(archSuffix string) []pkg.Service {

|

||||

wd, _ := os.Getwd()

|

||||

|

||||

return []pkg.Service{

|

||||

pkg.Service{

|

||||

{

|

||||

Name: "basic-auth-plugin",

|

||||

Image: "docker.io/openfaas/basic-auth-plugin:0.18.10" + archSuffix,

|

||||

Env: []string{

|

||||

@ -213,11 +183,11 @@ func makeServiceDefinitions(archSuffix string) []pkg.Service {

|

||||

"pass_filename=basic-auth-password",

|

||||

},

|

||||

Mounts: []pkg.Mount{

|

||||

pkg.Mount{

|

||||

{

|

||||

Src: path.Join(path.Join(wd, "secrets"), "basic-auth-password"),

|

||||

Dest: path.Join(containerSecretMountDir, "basic-auth-password"),

|

||||

},

|

||||

pkg.Mount{

|

||||

{

|

||||

Src: path.Join(path.Join(wd, "secrets"), "basic-auth-user"),

|

||||

Dest: path.Join(containerSecretMountDir, "basic-auth-user"),

|

||||

},

|

||||

@ -225,30 +195,30 @@ func makeServiceDefinitions(archSuffix string) []pkg.Service {

|

||||

Caps: []string{"CAP_NET_RAW"},

|

||||

Args: nil,

|

||||

},

|

||||

pkg.Service{

|

||||

{

|

||||

Name: "nats",

|

||||

Env: []string{""},

|

||||

Image: "docker.io/library/nats-streaming:0.11.2",

|

||||

Caps: []string{},

|

||||

Args: []string{"/nats-streaming-server", "-m", "8222", "--store=memory", "--cluster_id=faas-cluster"},

|

||||

},

|

||||

pkg.Service{

|

||||

{

|

||||

Name: "prometheus",

|

||||

Env: []string{},

|

||||

Image: "docker.io/prom/prometheus:v2.14.0",

|

||||

Mounts: []pkg.Mount{

|

||||

pkg.Mount{

|

||||

{

|

||||

Src: path.Join(wd, "prometheus.yml"),

|

||||

Dest: "/etc/prometheus/prometheus.yml",

|

||||

},

|

||||

},

|

||||

Caps: []string{"CAP_NET_RAW"},

|

||||

},

|

||||

pkg.Service{

|

||||

{

|

||||

Name: "gateway",

|

||||

Env: []string{

|

||||

"basic_auth=true",

|

||||

"functions_provider_url=http://faas-containerd:8081/",

|

||||

"functions_provider_url=http://faasd-provider:8081/",

|

||||

"direct_functions=false",

|

||||

"read_timeout=60s",

|

||||

"write_timeout=60s",

|

||||

@ -262,18 +232,18 @@ func makeServiceDefinitions(archSuffix string) []pkg.Service {

|

||||

},

|

||||

Image: "docker.io/openfaas/gateway:0.18.8" + archSuffix,

|

||||

Mounts: []pkg.Mount{

|

||||

pkg.Mount{

|

||||

{

|

||||

Src: path.Join(path.Join(wd, "secrets"), "basic-auth-password"),

|

||||

Dest: path.Join(containerSecretMountDir, "basic-auth-password"),

|

||||

},

|

||||

pkg.Mount{

|

||||

{

|

||||

Src: path.Join(path.Join(wd, "secrets"), "basic-auth-user"),

|

||||

Dest: path.Join(containerSecretMountDir, "basic-auth-user"),

|

||||

},

|

||||

},

|

||||

Caps: []string{"CAP_NET_RAW"},

|

||||

},

|

||||

pkg.Service{

|

||||

{

|

||||

Name: "queue-worker",

|

||||

Env: []string{

|

||||

"faas_nats_address=nats",

|

||||

@ -288,11 +258,11 @@ func makeServiceDefinitions(archSuffix string) []pkg.Service {

|

||||

},

|

||||

Image: "docker.io/openfaas/queue-worker:0.9.0",

|

||||

Mounts: []pkg.Mount{

|

||||

pkg.Mount{

|

||||

{

|

||||

Src: path.Join(path.Join(wd, "secrets"), "basic-auth-password"),

|

||||

Dest: path.Join(containerSecretMountDir, "basic-auth-password"),

|

||||

},

|

||||

pkg.Mount{

|

||||

{

|

||||

Src: path.Join(path.Join(wd, "secrets"), "basic-auth-user"),

|

||||

Dest: path.Join(containerSecretMountDir, "basic-auth-user"),

|

||||

},

|

||||

@ -301,56 +271,3 @@ func makeServiceDefinitions(archSuffix string) []pkg.Service {

|

||||

},

|

||||

}

|

||||

}

|

||||

|

||||

func makeNetworkConfig() error {

|

||||

netConfig := path.Join(pkg.CNIConfDir, pkg.DefaultCNIConfFilename)

|

||||

log.Printf("Writing network config...\n")

|

||||

|

||||

if !dirExists(pkg.CNIConfDir) {

|

||||

if err := os.MkdirAll(pkg.CNIConfDir, 0755); err != nil {

|

||||

return fmt.Errorf("cannot create directory: %s", pkg.CNIConfDir)

|

||||

}

|

||||

}

|

||||

|

||||

if err := ioutil.WriteFile(netConfig, []byte(defaultCNIConf), 644); err != nil {

|

||||

return fmt.Errorf("cannot write network config: %s", pkg.DefaultCNIConfFilename)

|

||||

|

||||

}

|

||||

return nil

|

||||

}

|

||||

|

||||

func dirEmpty(dirname string) (b bool) {

|

||||

if !dirExists(dirname) {

|

||||

return

|

||||

}

|

||||

|

||||

f, err := os.Open(dirname)

|

||||

if err != nil {

|

||||

return

|

||||

}

|

||||

defer func() { _ = f.Close() }()

|

||||

|

||||

// If the first file is EOF, the directory is empty

|

||||

if _, err = f.Readdir(1); err == io.EOF {

|

||||

b = true

|

||||

}

|

||||

return

|

||||

}

|

||||

|

||||

func dirExists(dirname string) bool {

|

||||

exists, info := pathExists(dirname)

|

||||

if !exists {

|

||||

return false

|

||||

}

|

||||

|

||||

return info.IsDir()

|

||||

}

|

||||

|

||||

func pathExists(path string) (bool, os.FileInfo) {

|

||||

info, err := os.Stat(path)

|

||||

if os.IsNotExist(err) {

|

||||

return false, nil

|

||||

}

|

||||

|

||||

return true, info

|

||||

}

|

||||

|

||||

309

docs/DEV.md

Normal file


@ -0,0 +1,309 @@

|

||||

## Manual installation of faasd for development

|

||||

|

||||

> Note: if you're just wanting to try out faasd, then it's likely that you're on the wrong page. This is a detailed set of instructions for those wanting to contribute or customise faasd. Feel free to go back to the homepage and pick a tutorial instead.

|

||||

|

||||

### Pre-reqs

|

||||

|

||||

* Linux

|

||||

|

||||

PC / Cloud - any Linux that containerd works on should be fair game, but faasd is tested with Ubuntu 18.04

|

||||

|

||||

For Raspberry Pi, Raspbian Stretch or newer also works fine

|

||||

|

||||

For macOS users, try [multipass.run](https://multipass.run) or [Vagrant](https://www.vagrantup.com/)

|

||||

|

||||

For Windows users, install [Git Bash](https://git-scm.com/downloads) along with multipass or Vagrant. You can also use WSL1 or WSL2, both of which provide a Linux environment.

|

||||

|

||||

You will also need [containerd v1.3.2](https://github.com/containerd/containerd) and the [CNI plugins v0.8.5](https://github.com/containernetworking/plugins)

|

||||

|

||||

[faas-cli](https://github.com/openfaas/faas-cli) is optional, but recommended.

|

||||

|

||||

### Get containerd

|

||||

|

||||

You have three options - binaries for PC, binaries for armhf, or build from source.

|

||||

|

||||

* Install containerd `x86_64` only

|

||||

|

||||

```sh

|

||||

export VER=1.3.2

|

||||

curl -sLSf https://github.com/containerd/containerd/releases/download/v$VER/containerd-$VER.linux-amd64.tar.gz > /tmp/containerd.tar.gz \

|

||||

&& sudo tar -xvf /tmp/containerd.tar.gz -C /usr/local/bin/ --strip-components=1

|

||||

|

||||

containerd -version

|

||||

```

|

||||

|

||||

* Or get my containerd binaries for Raspberry Pi (armhf)

|

||||

|

||||

Building `containerd` on armhf is extremely slow, so I've provided binaries for you.

|

||||

|

||||

```sh

|

||||

curl -sSL https://github.com/alexellis/containerd-armhf/releases/download/v1.3.2/containerd.tgz | sudo tar -xvz --strip-components=2 -C /usr/local/bin/

|

||||

```

|

||||

|

||||

* Or clone / build / install [containerd](https://github.com/containerd/containerd) from source:

|

||||

|

||||

```sh

|

||||

export GOPATH=$HOME/go/

|

||||

mkdir -p $GOPATH/src/github.com/containerd

|

||||

cd $GOPATH/src/github.com/containerd

|

||||

git clone https://github.com/containerd/containerd

|

||||

cd containerd

|

||||

git fetch origin --tags

|

||||

git checkout v1.3.2

|

||||

|

||||

make

|

||||

sudo make install

|

||||

|

||||

containerd --version

|

||||

```

|

||||

|

||||

#### Ensure containerd is running

|

||||

|

||||

```sh

|

||||

curl -sLS https://raw.githubusercontent.com/containerd/containerd/master/containerd.service > /tmp/containerd.service

|

||||

|

||||

sudo cp /tmp/containerd.service /lib/systemd/system/

|

||||

sudo systemctl enable containerd

|

||||

|

||||

sudo systemctl daemon-reload

|

||||

sudo systemctl restart containerd

|

||||

```

|

||||

|

||||

Or run ad-hoc:

|

||||

|

||||

```sh

|

||||

sudo containerd &

|

||||

```

|

||||

|

||||

#### Enable forwarding

|

||||

|

||||

> This is required to allow containers in containerd to access the Internet via your computer's primary network interface.

|

||||

|

||||

```sh

|

||||

sudo /sbin/sysctl -w net.ipv4.conf.all.forwarding=1

|

||||

```

|

||||

|

||||

Make the setting permanent:

|

||||

|

||||

```sh

|

||||

echo "net.ipv4.conf.all.forwarding=1" | sudo tee -a /etc/sysctl.conf

|

||||

```
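If you want to confirm the setting from Go rather than from the shell, the following is a minimal sketch. It assumes the sysctl key above is exposed at `/proc/sys/net/ipv4/conf/all/forwarding`, which is the usual mapping on Linux; the helper name `forwardingEnabled` is hypothetical and not part of faasd.

```go
package main

import (
	"fmt"
	"io/ioutil"
	"strings"
)

// forwardingEnabled reports whether the raw contents of the sysctl file
// mean "enabled". The kernel writes "1" followed by a newline when
// forwarding is on. (Hypothetical helper, not part of faasd.)
func forwardingEnabled(raw string) bool {
	return strings.TrimSpace(raw) == "1"
}

func main() {
	// Assumed path: the /proc view of net.ipv4.conf.all.forwarding.
	const path = "/proc/sys/net/ipv4/conf/all/forwarding"

	data, err := ioutil.ReadFile(path)
	if err != nil {
		fmt.Printf("cannot read %s: %v\n", path, err)
		return
	}
	fmt.Printf("IPv4 forwarding enabled: %v\n", forwardingEnabled(string(data)))
}
```

Keeping the parsing in its own function makes it easy to check without touching the host's network settings.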

|

||||

|

||||

### Hacking (build from source)

|

||||

|

||||

#### Get build packages

|

||||

|

||||

```sh

|

||||

sudo apt update \

|

||||

&& sudo apt install -qy \

|

||||

runc \

|

||||

bridge-utils \

|

||||

make

|

||||

```

|

||||

|

||||

You may find alternative package names for CentOS and other Linux distributions.

|

||||

|

||||

#### Install Go 1.13 (x86_64)

|

||||

|

||||

```sh

|

||||

curl -sSLf https://dl.google.com/go/go1.13.6.linux-amd64.tar.gz > go.tgz

|

||||

sudo rm -rf /usr/local/go/

|

||||

sudo mkdir -p /usr/local/go/

|

||||

sudo tar -xvf go.tgz -C /usr/local/go/ --strip-components=1

|

||||

|

||||

export GOPATH=$HOME/go/

|

||||

export PATH=$PATH:/usr/local/go/bin/

|

||||

|

||||

go version

|

||||

```

|

||||

|

||||

You should also add the following to `~/.bash_profile`:

|

||||

|

||||

```sh

|

||||

export GOPATH=$HOME/go/

|

||||

export PATH=$PATH:/usr/local/go/bin/

|

||||

```

|

||||

|

||||

#### Or on Raspberry Pi (armhf)

|

||||

|

||||

```sh

|

||||

curl -SLsf https://dl.google.com/go/go1.13.6.linux-armv6l.tar.gz > go.tgz

|

||||

sudo rm -rf /usr/local/go/

|

||||

sudo mkdir -p /usr/local/go/

|

||||

sudo tar -xvf go.tgz -C /usr/local/go/ --strip-components=1

|

||||

|

||||

export GOPATH=$HOME/go/

|

||||

export PATH=$PATH:/usr/local/go/bin/

|

||||

|

||||

go version

|

||||

```

|

||||

|

||||

#### Install the CNI plugins:

|

||||

|

||||

* For PC run `export ARCH=amd64`

|

||||

* For RPi/armhf run `export ARCH=arm`

|

||||

* For arm64 run `export ARCH=arm64`

|

||||

|

||||

Then run:

|

||||

|

||||

```sh

|

||||

export ARCH=amd64

|

||||

export CNI_VERSION=v0.8.5

|

||||

|

||||

sudo mkdir -p /opt/cni/bin

|

||||

curl -sSL https://github.com/containernetworking/plugins/releases/download/${CNI_VERSION}/cni-plugins-linux-${ARCH}-${CNI_VERSION}.tgz | sudo tar -xz -C /opt/cni/bin

|

||||

```
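The download URL in the command above is built from `ARCH` and `CNI_VERSION`. As a rough sketch of the same construction in Go, the snippet below maps the running machine's architecture to one of the three `ARCH` values listed in this section and prints the matching URL. The function names `cniArch` and `cniPluginsURL` are hypothetical, and only the architectures named here are handled.

```go
package main

import (
	"fmt"
	"runtime"
)

// cniArch returns the ARCH value for a Go architecture name.
// Only the three architectures named in this guide are accepted.
func cniArch(goarch string) (string, error) {
	switch goarch {
	case "amd64", "arm", "arm64":
		return goarch, nil
	}
	return "", fmt.Errorf("no ARCH value listed in this guide for %q", goarch)
}

// cniPluginsURL mirrors the URL pattern used by the curl command above.
func cniPluginsURL(arch, version string) string {
	return fmt.Sprintf(
		"https://github.com/containernetworking/plugins/releases/download/%s/cni-plugins-linux-%s-%s.tgz",
		version, arch, version)
}

func main() {
	arch, err := cniArch(runtime.GOARCH)
	if err != nil {
		fmt.Println(err)
		return
	}
	fmt.Println(cniPluginsURL(arch, "v0.8.5"))
}
```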

|

||||

|

||||

#### Clone faasd and its systemd unit files

|

||||

|

||||

```sh

|

||||

mkdir -p $GOPATH/src/github.com/openfaas/

|

||||

cd $GOPATH/src/github.com/openfaas/

|

||||

git clone https://github.com/openfaas/faasd

|

||||

```

|

||||

|

||||

#### Build `faasd` from source (optional)

|

||||

|

||||

```sh

|

||||

cd $GOPATH/src/github.com/openfaas/faasd

|

||||

make local

|

||||

```

|

||||

|

||||

#### Or download `faasd` (binaries)

|

||||

|

||||

```sh

|

||||

# For x86_64

|

||||

sudo curl -fSLs "https://github.com/openfaas/faasd/releases/download/0.8.0/faasd" \

|

||||

-o "/usr/local/bin/faasd" \

|

||||

&& sudo chmod a+x "/usr/local/bin/faasd"

|

||||

|

||||

# armhf

|

||||

sudo curl -fSLs "https://github.com/openfaas/faasd/releases/download/0.8.0/faasd-armhf" \

|

||||

-o "/usr/local/bin/faasd" \

|

||||

&& sudo chmod a+x "/usr/local/bin/faasd"

|

||||

|

||||

# arm64

|

||||

sudo curl -fSLs "https://github.com/openfaas/faasd/releases/download/0.8.0/faasd-arm64" \

|

||||

-o "/usr/local/bin/faasd" \

|

||||

&& sudo chmod a+x "/usr/local/bin/faasd"

|

||||

```

|

||||

|

||||

#### Install `faasd`

|

||||

|

||||

```sh

|

||||

# Install with systemd

|

||||

sudo cp bin/faasd /usr/local/bin

|

||||

sudo faasd install

|

||||

|

||||

2020/02/17 17:38:06 Writing to: "/var/lib/faasd/secrets/basic-auth-password"

|

||||

2020/02/17 17:38:06 Writing to: "/var/lib/faasd/secrets/basic-auth-user"

|

||||

Login with:

|

||||

sudo cat /var/lib/faasd/secrets/basic-auth-password | faas-cli login -s

|

||||

```

|

||||

|

||||

You can now log in either from this machine or a remote machine using the OpenFaaS UI, or CLI.

|

||||

|

||||

Check that faasd is ready:

|

||||

|

||||

```

|

||||

sudo journalctl -u faasd

|

||||

```

|

||||

|

||||

You should see output like:

|

||||

|

||||

```

|

||||

Feb 17 17:46:35 gold-survive faasd[4140]: 2020/02/17 17:46:35 Starting faasd proxy on 8080

|

||||

Feb 17 17:46:35 gold-survive faasd[4140]: Gateway: 10.62.0.5:8080

|

||||

Feb 17 17:46:35 gold-survive faasd[4140]: 2020/02/17 17:46:35 [proxy] Wait for done

|

||||

Feb 17 17:46:35 gold-survive faasd[4140]: 2020/02/17 17:46:35 [proxy] Begin listen on 8080

|

||||

```

|

||||

|

||||

To get the CLI for the command above run:

|

||||

|

||||

```sh

|

||||

curl -sSLf https://cli.openfaas.com | sudo sh

|

||||

```

|

||||

|

||||

#### Make a change to `faasd`

|

||||

|

||||

There are two components you can hack on:

|

||||

|

||||

For function CRUD you will work on `faasd provider` which is started from `cmd/provider.go`

|

||||

|

||||

For faasd itself, you will work on the code from `faasd up`, which is started from `cmd/up.go`

|

||||

|

||||

Before working on either, stop the systemd services:

|

||||

|

||||

```

|

||||

sudo systemctl stop faasd & # up command

|

||||

sudo systemctl stop faasd-provider # provider command

|

||||

```

|

||||

|

||||

Here is a workflow you can use for each code change:

|

||||

|

||||

Enter the directory of the source code, and build a new binary:

|

||||

|

||||

```bash

|

||||

cd $GOPATH/src/github.com/openfaas/faasd

|

||||

go build

|

||||

```

|

||||

|

||||

Copy that binary to `/usr/local/bin/`

|

||||

|

||||

```bash

|

||||

sudo cp faasd /usr/local/bin/

|

||||

```

|

||||

|

||||

To run `faasd up`, run it from its working directory as root

|

||||

|

||||

```bash

|

||||

sudo -i

|

||||

cd /var/lib/faasd

|

||||

|

||||

faasd up

|

||||

```

|

||||

|

||||

Now to run `faasd provider`, run it from its working directory:

|

||||

|

||||

```bash

|

||||

sudo -i

|

||||

cd /var/lib/faasd-provider

|

||||

|

||||

faasd provider

|

||||

```

|

||||

|

||||

#### At run-time

|

||||

|

||||

Look in `hosts` in the current working folder or in `/var/lib/faasd/` to get the IP for the gateway or Prometheus

|

||||

|

||||

```sh

|

||||

127.0.0.1 localhost

|

||||

10.62.0.1 faasd-provider

|

||||

|

||||

10.62.0.2 prometheus

|

||||

10.62.0.3 gateway

|

||||

10.62.0.4 nats

|

||||

10.62.0.5 queue-worker

|

||||

```

|

||||

|

||||

The IP addresses are dynamic and may change on every launch.
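Because the addresses change between launches, it can help to look them up from the `hosts` file programmatically. The following is a minimal sketch of such a lookup; `lookupHost` is a hypothetical helper, not part of faasd, and the sample content simply repeats the listing above.

```go
package main

import (
	"bufio"
	"fmt"
	"strings"
)

// lookupHost scans hosts-file content and returns the IP address of the
// first entry whose name matches. Blank lines and comments are skipped.
func lookupHost(hosts, name string) (string, bool) {
	s := bufio.NewScanner(strings.NewReader(hosts))
	for s.Scan() {
		fields := strings.Fields(s.Text())
		if len(fields) < 2 || strings.HasPrefix(fields[0], "#") {
			continue
		}
		for _, h := range fields[1:] {
			if h == name {
				return fields[0], true
			}
		}
	}
	return "", false
}

func main() {
	// Sample content copied from the listing above; real values will differ.
	sample := "127.0.0.1 localhost\n10.62.0.1 faasd-provider\n\n" +
		"10.62.0.2 prometheus\n10.62.0.3 gateway\n"

	if ip, ok := lookupHost(sample, "gateway"); ok {
		fmt.Printf("http://%s:8080\n", ip) // prints "http://10.62.0.3:8080"
	}
}
```

In practice you would read `/var/lib/faasd/hosts` and pass its contents as the first argument.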

|

||||

|

||||

Since faasd-provider uses containerd heavily it is not running as a container, but as a stand-alone process. Its port is available via the bridge interface, i.e. `openfaas0`

|

||||

|

||||

* Prometheus will run on the Prometheus IP plus port 9090, i.e. http://[prometheus_ip]:9090/targets

|

||||

|

||||

* faasd-provider runs on 10.62.0.1:8081, i.e. directly on the host, and accessible via the bridge interface from CNI.

|

||||

|

||||

* Now go to the gateway's IP address as shown above on port 8080, i.e. http://[gateway_ip]:8080 - you can also use this address to deploy OpenFaaS Functions via the `faas-cli`.

|

||||

|

||||

* basic-auth

|

||||

|

||||

You will then need the basic-auth password, which is written to `/var/lib/faasd/secrets/basic-auth-password` if you followed the above instructions.

|

||||

The default Basic Auth username is `admin`, which is written to `/var/lib/faasd/secrets/basic-auth-user`. If you wish to use a non-standard user, create this file and add your username (no newlines or other characters).
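To illustrate how these two files are used, here is a hedged sketch that reads them and prepares an authenticated request to the gateway. The helper names `cleanSecret` and `newListRequest` are hypothetical, the gateway address `http://127.0.0.1:8080` is an assumption based on the faasd proxy log output shown earlier, and `/system/functions` is assumed to be the gateway's function-listing endpoint. The request is only built, not sent.

```go
package main

import (
	"fmt"
	"io/ioutil"
	"net/http"
	"path"
	"strings"
)

// cleanSecret strips surrounding whitespace, so a stray trailing newline
// in a secret file does not end up in the credentials.
func cleanSecret(raw string) string {
	return strings.TrimSpace(raw)
}

// newListRequest builds a GET request with HTTP Basic Auth set.
// The /system/functions path is an assumption about the gateway API.
func newListRequest(gatewayURL, user, password string) (*http.Request, error) {
	url := strings.TrimRight(gatewayURL, "/") + "/system/functions"
	req, err := http.NewRequest(http.MethodGet, url, nil)
	if err != nil {
		return nil, err
	}
	req.SetBasicAuth(user, password)
	return req, nil
}

func main() {
	const secretsDir = "/var/lib/faasd/secrets"

	user, err := ioutil.ReadFile(path.Join(secretsDir, "basic-auth-user"))
	if err != nil {
		fmt.Println(err)
		return
	}
	password, err := ioutil.ReadFile(path.Join(secretsDir, "basic-auth-password"))
	if err != nil {
		fmt.Println(err)
		return
	}

	// Assumed gateway address; substitute the IP from the hosts file if needed.
	req, err := newListRequest("http://127.0.0.1:8080",
		cleanSecret(string(user)), cleanSecret(string(password)))
	if err != nil {
		fmt.Println(err)
		return
	}
	fmt.Println("prepared", req.Method, req.URL.String())
}
```

Reading the secrets requires root, since `faasd install` writes them under `/var/lib/faasd/secrets`.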

|

||||

|

||||

#### Installation with systemd

|

||||

|

||||

* `faasd install` - install faasd and containerd with systemd; this must be run from `$GOPATH/src/github.com/openfaas/faasd`

|

||||

* `journalctl -u faasd -f` - faasd service logs

|

||||

* `journalctl -u faasd-provider -f` - faasd-provider service logs

|

||||

@ -1,6 +1,6 @@

|

||||

[Unit]

|

||||

Description=faasd

|

||||

After=faas-containerd.service

|

||||

After=faasd-provider.service

|

||||

|

||||

[Service]

|

||||

MemoryLimit=500M

|

||||

|

||||

18

main.go


@ -1,9 +1,10 @@

|

||||

package main

|

||||

|

||||

import (

|

||||

"fmt"

|

||||

"os"

|

||||

|

||||

"github.com/alexellis/faasd/cmd"

|

||||

"github.com/openfaas/faasd/cmd"

|

||||

)

|

||||

|

||||

// These values will be injected into these variables at the build time.

|

||||

@ -15,6 +16,21 @@ var (

|

||||

)

|

||||

|

||||

func main() {

|

||||

|

||||

if _, ok := os.LookupEnv("CONTAINER_ID"); ok {

|

||||