Mirror of https://github.com/openfaas/faasd.git, synced 2025-06-18 20:16:36 +00:00

Compare commits

42 Commits

| SHA1 |
|---|
| b20e5614c7 |
| 40829bbf88 |
| 87f49b0289 |
| b817479828 |
| faae82aa1c |
| cddc10acbe |
| 1c8e8bb615 |
| 6e537d1fde |
| c314af4f98 |
| 4189cfe52c |
| 9e2f571cf7 |
| 93825e8354 |
| 6752a61a95 |
| 16a8d2ac6c |
| 68ac4dfecb |
| c2480ab30a |
| d978c19e23 |
| 038b92c5b4 |
| f1a1f374d9 |
| 24692466d8 |
| bdfff4e8c5 |
| e3589a4ed1 |
| b865e55c85 |
| 89a728db16 |
| 2237dfd44d |
| 4423a5389a |
| a6a4502c89 |
| 8b86e00128 |
| 3039773fbd |
| 5b92e7793d |
| 88f1aa0433 |
| 2b9efd29a0 |
| db5312158c |
| 26debca616 |
| 50de0f34bb |
| d64edeb648 |
| 42b9cc6b71 |
| 25c553a87c |
| 8bc39f752e |
| cbff6fa8f6 |
| 3e29408518 |
| 04f1807d92 |

2

.gitattributes

vendored

Normal file

@@ -0,0 +1,2 @@

|

||||

vendor/** linguist-generated=true

|

||||

Gopkg.lock linguist-generated=true

|

||||

1

.gitignore

vendored

@@ -7,3 +7,4 @@ basic-auth-user

|

||||

basic-auth-password

|

||||

/bin

|

||||

/secrets

|

||||

.vscode

|

||||

|

||||

100

Gopkg.lock

generated

@@ -39,20 +39,35 @@

|

||||

revision = "9e921883ac929bbe515b39793ece99ce3a9d7706"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:74860eb071d52337d67e9ffd6893b29affebd026505aa917ec23131576a91a77"

|

||||

digest = "1:d7086e6a64a9e4fa54aaf56ce42ead0be1300b0285604c4d306438880db946ad"

|

||||

name = "github.com/alexellis/go-execute"

|

||||

packages = ["pkg/v1"]

|

||||

pruneopts = "UT"

|

||||

revision = "961405ea754427780f2151adff607fa740d377f7"

|

||||

version = "0.3.0"

|

||||

revision = "8697e4e28c5e3ce441ff8b2b6073035606af2fe9"

|

||||

version = "0.4.0"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:6076d857867a70e87dd1994407deb142f27436f1293b13e75cc053192d14eb0c"

|

||||

digest = "1:345f6fa182d72edfa3abc493881c3fa338a464d93b1e2169cda9c822fde31655"

|

||||

name = "github.com/alexellis/k3sup"

|

||||

packages = ["pkg/env"]

|

||||

pruneopts = "UT"

|

||||

revision = "f9a4adddc732742a9ee7962609408fb0999f2d7b"

|

||||

version = "0.7.1"

|

||||

revision = "629c0bc6b50f71ab93a1fbc8971a5bd05dc581eb"

|

||||

version = "0.9.3"

|

||||

|

||||

[[projects]]

|

||||

branch = "master"

|

||||

digest = "1:cda177c07c87c648b1aaa37290717064a86d337a5dc6b317540426872d12de52"

|

||||

name = "github.com/compose-spec/compose-go"

|

||||

packages = [

|

||||

"envfile",

|

||||

"interpolation",

|

||||

"loader",

|

||||

"schema",

|

||||

"template",

|

||||

"types",

|

||||

]

|

||||

pruneopts = "UT"

|

||||

revision = "36d8ce368e05d2ae83c86b2987f20f7c20d534a6"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:cf83a14c8042951b0dcd74758fc32258111ecc7838cbdf5007717172cab9ca9b"

|

||||

@@ -226,6 +241,14 @@

|

||||

revision = "54f0238b6bf101fc3ad3b34114cb5520beb562f5"

|

||||

version = "v0.6.3"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:ade935c55cd6d0367c843b109b09c9d748b1982952031414740750fdf94747eb"

|

||||

name = "github.com/docker/go-connections"

|

||||

packages = ["nat"]

|

||||

pruneopts = "UT"

|

||||

revision = "7395e3f8aa162843a74ed6d48e79627d9792ac55"

|

||||

version = "v0.4.0"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:0938aba6e09d72d48db029d44dcfa304851f52e2d67cda920436794248e92793"

|

||||

name = "github.com/docker/go-events"

|

||||

@@ -291,6 +314,14 @@

|

||||

revision = "00bdffe0f3c77e27d2cf6f5c70232a2d3e4d9c15"

|

||||

version = "v1.7.3"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:1a7059d684f8972987e4b6f0703083f207d63f63da0ea19610ef2e6bb73db059"

|

||||

name = "github.com/imdario/mergo"

|

||||

packages = ["."]

|

||||

pruneopts = "UT"

|

||||

revision = "66f88b4ae75f5edcc556623b96ff32c06360fbb7"

|

||||

version = "v0.3.9"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:870d441fe217b8e689d7949fef6e43efbc787e50f200cb1e70dbca9204a1d6be"

|

||||

name = "github.com/inconshreveable/mousetrap"

|

||||

@@ -307,6 +338,22 @@

|

||||

revision = "f55edac94c9bbba5d6182a4be46d86a2c9b5b50e"

|

||||

version = "v1.0.2"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:528e84b49342ec33c96022f8d7dd4c8bd36881798afbb44e2744bda0ec72299c"

|

||||

name = "github.com/mattn/go-shellwords"

|

||||

packages = ["."]

|

||||

pruneopts = "UT"

|

||||

revision = "28e4fdf351f0744b1249317edb45e4c2aa7a5e43"

|

||||

version = "v1.0.10"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:dd34285411cd7599f1fe588ef9451d5237095963ecc85c1212016c6769866306"

|

||||

name = "github.com/mitchellh/mapstructure"

|

||||

packages = ["."]

|

||||

pruneopts = "UT"

|

||||

revision = "20e21c67c4d0e1b4244f83449b7cdd10435ee998"

|

||||

version = "v1.3.1"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:906eb1ca3c8455e447b99a45237b2b9615b665608fd07ad12cce847dd9a1ec43"

|

||||

name = "github.com/morikuni/aec"

|

||||

@@ -361,7 +408,7 @@

|

||||

version = "0.18.10"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:7a20be0bdfb2c05a4a7b955cb71645fe2983aa3c0bbae10d6bba3e2dd26ddd0d"

|

||||

digest = "1:4d972c6728f8cbaded7d2ee6349fbe5f9278cabcd51d1ecad97b2e79c72bea9d"

|

||||

name = "github.com/openfaas/faas-provider"

|

||||

packages = [

|

||||

".",

|

||||

@@ -372,8 +419,8 @@

|

||||

"types",

|

||||

]

|

||||

pruneopts = "UT"

|

||||

revision = "8f7c35975e1b2bf8286c2f90ee51633eec427491"

|

||||

version = "0.14.0"

|

||||

revision = "db19209aa27f42a9cf6a23448fc2b8c9cc4fbb5d"

|

||||

version = "v0.15.1"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:cf31692c14422fa27c83a05292eb5cbe0fb2775972e8f1f8446a71549bd8980b"

|

||||

@@ -442,6 +489,30 @@

|

||||

pruneopts = "UT"

|

||||

revision = "0a2b9b5464df8343199164a0321edf3313202f7e"

|

||||

|

||||

[[projects]]

|

||||

branch = "master"

|

||||

digest = "1:87fe9bca786484cef53d52adeec7d1c52bc2bfbee75734eddeb75fc5c7023871"

|

||||

name = "github.com/xeipuuv/gojsonpointer"

|

||||

packages = ["."]

|

||||

pruneopts = "UT"

|

||||

revision = "02993c407bfbf5f6dae44c4f4b1cf6a39b5fc5bb"

|

||||

|

||||

[[projects]]

|

||||

branch = "master"

|

||||

digest = "1:dc6a6c28ca45d38cfce9f7cb61681ee38c5b99ec1425339bfc1e1a7ba769c807"

|

||||

name = "github.com/xeipuuv/gojsonreference"

|

||||

packages = ["."]

|

||||

pruneopts = "UT"

|

||||

revision = "bd5ef7bd5415a7ac448318e64f11a24cd21e594b"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:a8a0ed98532819a3b0dc5cf3264a14e30aba5284b793ba2850d6f381ada5f987"

|

||||

name = "github.com/xeipuuv/gojsonschema"

|

||||

packages = ["."]

|

||||

pruneopts = "UT"

|

||||

revision = "82fcdeb203eb6ab2a67d0a623d9c19e5e5a64927"

|

||||

version = "v1.2.0"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:aed53a5fa03c1270457e331cf8b7e210e3088a2278fec552c5c5d29c1664e161"

|

||||

name = "go.opencensus.io"

|

||||

@@ -570,12 +641,22 @@

|

||||

revision = "6eaf6f47437a6b4e2153a190160ef39a92c7eceb"

|

||||

version = "v1.23.0"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:d7f1bd887dc650737a421b872ca883059580e9f8314d601f88025df4f4802dce"

|

||||

name = "gopkg.in/yaml.v2"

|

||||

packages = ["."]

|

||||

pruneopts = "UT"

|

||||

revision = "0b1645d91e851e735d3e23330303ce81f70adbe3"

|

||||

version = "v2.3.0"

|

||||

|

||||

[solve-meta]

|

||||

analyzer-name = "dep"

|

||||

analyzer-version = 1

|

||||

input-imports = [

|

||||

"github.com/alexellis/go-execute/pkg/v1",

|

||||

"github.com/alexellis/k3sup/pkg/env",

|

||||

"github.com/compose-spec/compose-go/loader",

|

||||

"github.com/compose-spec/compose-go/types",

|

||||

"github.com/containerd/containerd",

|

||||

"github.com/containerd/containerd/cio",

|

||||

"github.com/containerd/containerd/containers",

|

||||

@@ -601,6 +682,7 @@

|

||||

"github.com/pkg/errors",

|

||||

"github.com/sethvargo/go-password/password",

|

||||

"github.com/spf13/cobra",

|

||||

"github.com/spf13/pflag",

|

||||

"github.com/vishvananda/netlink",

|

||||

"github.com/vishvananda/netns",

|

||||

"golang.org/x/sys/unix",

|

||||

|

||||

@@ -16,11 +16,11 @@

|

||||

|

||||

[[constraint]]

|

||||

name = "github.com/alexellis/k3sup"

|

||||

version = "0.7.1"

|

||||

version = "0.9.3"

|

||||

|

||||

[[constraint]]

|

||||

name = "github.com/alexellis/go-execute"

|

||||

version = "0.3.0"

|

||||

version = "0.4.0"

|

||||

|

||||

[[constraint]]

|

||||

name = "github.com/gorilla/mux"

|

||||

@@ -40,7 +40,7 @@

|

||||

|

||||

[[constraint]]

|

||||

name = "github.com/openfaas/faas-provider"

|

||||

version = "0.14.0"

|

||||

version = "v0.15.1"

|

||||

|

||||

[[constraint]]

|

||||

name = "github.com/docker/cli"

|

||||

|

||||

4

LICENSE

@@ -1,6 +1,8 @@

|

||||

MIT License

|

||||

|

||||

Copyright (c) 2019 Alex Ellis

|

||||

Copyright (c) 2020 Alex Ellis

|

||||

Copyright (c) 2020 OpenFaaS Ltd

|

||||

Copyright (c) 2020 OpenFaas Author(s)

|

||||

|

||||

Permission is hereby granted, free of charge, to any person obtaining a copy

|

||||

of this software and associated documentation files (the "Software"), to deal

|

||||

|

||||

6

Makefile

@@ -1,8 +1,8 @@

|

||||

Version := $(shell git describe --tags --dirty)

|

||||

GitCommit := $(shell git rev-parse HEAD)

|

||||

LDFLAGS := "-s -w -X main.Version=$(Version) -X main.GitCommit=$(GitCommit)"

|

||||

CONTAINERD_VER := 1.3.2

|

||||

CNI_VERSION := v0.8.5

|

||||

CONTAINERD_VER := 1.3.4

|

||||

CNI_VERSION := v0.8.6

|

||||

ARCH := amd64

|

||||

|

||||

.PHONY: all

|

||||

@@ -36,7 +36,7 @@ prepare-test:

|

||||

sudo systemctl status -l faasd-provider --no-pager

|

||||

sudo systemctl status -l faasd --no-pager

|

||||

curl -sSLf https://cli.openfaas.com | sudo sh

|

||||

sleep 120 && sudo journalctl -u faasd --no-pager

|

||||

echo "Sleeping for 2m" && sleep 120 && sudo journalctl -u faasd --no-pager

|

||||

|

||||

.PHONY: test-e2e

|

||||

test-e2e:

|

||||

|

||||

161

README.md

@@ -1,39 +1,35 @@

|

||||

# faasd - serverless with containerd and CNI 🐳

|

||||

# faasd - Serverless for everyone else

|

||||

|

||||

faasd is built for everyone else, for those who have no desire to manage expensive infrastructure.

|

||||

|

||||

[](https://travis-ci.com/openfaas/faasd)

|

||||

[](https://opensource.org/licenses/MIT)

|

||||

[](https://www.openfaas.com)

|

||||

|

||||

|

||||

faasd is the same OpenFaaS experience and ecosystem, but without Kubernetes. Functions and microservices can be deployed anywhere with reduced overheads whilst retaining the portability of containers and cloud-native tooling.

|

||||

faasd is [OpenFaaS](https://github.com/openfaas/) reimagined, but without the cost and complexity of Kubernetes. It runs on a single host with very modest requirements, making it fast and easy to manage. Under the hood it uses [containerd](https://containerd.io/) and [Container Networking Interface (CNI)](https://github.com/containernetworking/cni) along with the same core OpenFaaS components from the main project.

|

||||

|

||||

## When should you use faasd over OpenFaaS on Kubernetes?

|

||||

|

||||

* You have a cost sensitive project - run faasd on a 5-10 USD VPS or on your Raspberry Pi

|

||||

* When you just need a few functions or microservices, without the cost of a cluster

|

||||

* When you don't have the bandwidth to learn or manage Kubernetes

|

||||

* To deploy embedded apps in IoT and edge use-cases

|

||||

* To shrink-wrap applications for use with a customer or client

|

||||

|

||||

faasd does not create the same maintenance burden you'll find with maintaining, upgrading, and securing a Kubernetes cluster. You can deploy it and walk away, in the worst case, just deploy a new VM and deploy your functions again.

|

||||

|

||||

## About faasd

|

||||

|

||||

* is a single Golang binary

|

||||

* can be set-up and left alone to run your applications

|

||||

* is multi-arch, so works on Intel `x86_64` and ARM out the box

|

||||

* uses the same core components and ecosystem of OpenFaaS

|

||||

* is multi-arch, so works on Intel `x86_64` and ARM out the box

|

||||

* can be set-up and left alone to run your applications

|

||||

|

||||

|

||||

|

||||

> Demo of faasd running in KVM

|

||||

|

||||

## What does faasd deploy?

|

||||

|

||||

* faasd - itself, and its [faas-provider](https://github.com/openfaas/faas-provider) for containerd - CRUD for functions and services, implements the OpenFaaS REST API

|

||||

* [Prometheus](https://github.com/prometheus/prometheus) - for monitoring of services, metrics, scaling and dashboards

|

||||

* [OpenFaaS Gateway](https://github.com/openfaas/faas/tree/master/gateway) - the UI portal, CLI, and other OpenFaaS tooling can talk to this.

|

||||

* [OpenFaaS queue-worker for NATS](https://github.com/openfaas/nats-queue-worker) - run your invocations in the background without adding any code. See also: [asynchronous invocations](https://docs.openfaas.com/reference/triggers/#async-nats-streaming)

|

||||

* [NATS](https://nats.io) for asynchronous processing and queues

|

||||

|

||||

You'll also need:

|

||||

|

||||

* [CNI](https://github.com/containernetworking/plugins)

|

||||

* [containerd](https://github.com/containerd/containerd)

|

||||

* [runc](https://github.com/opencontainers/runc)

|

||||

|

||||

You can use the standard [faas-cli](https://github.com/openfaas/faas-cli) along with pre-packaged functions from *the Function Store*, or build your own using any OpenFaaS template.

|

||||

|

||||

## Tutorials

|

||||

|

||||

### Get started on DigitalOcean, or any other IaaS

|

||||

@@ -42,9 +38,9 @@ If your IaaS supports `user_data` aka "cloud-init", then this guide is for you.

|

||||

|

||||

* [Build a Serverless appliance with faasd](https://blog.alexellis.io/deploy-serverless-faasd-with-cloud-init/)

|

||||

|

||||

### Run locally on MacOS, Linux, or Windows with Multipass.run

|

||||

### Run locally on MacOS, Linux, or Windows with multipass

|

||||

|

||||

* [Get up and running with your own faasd installation on your Mac/Ubuntu or Windows with cloud-config](https://gist.github.com/alexellis/6d297e678c9243d326c151028a3ad7b9)

|

||||

* [Get up and running with your own faasd installation on your Mac/Ubuntu or Windows with cloud-config](/docs/MULTIPASS.md)

|

||||

|

||||

### Get started on armhf / Raspberry Pi

|

||||

|

||||

@@ -56,7 +52,11 @@ You can run this tutorial on your Raspberry Pi, or adapt the steps for a regular

|

||||

|

||||

Automate everything within < 60 seconds and get a public URL and IP address back. Customise as required, or adapt to your preferred cloud such as AWS EC2.

|

||||

|

||||

* [Provision faasd 0.7.5 on DigitalOcean with Terraform 0.12.0](https://gist.github.com/alexellis/fd618bd2f957eb08c44d086ef2fc3906)

|

||||

* [Provision faasd 0.8.1 on DigitalOcean with Terraform 0.12.0](docs/bootstrap/README.md)

|

||||

|

||||

* [Provision faasd on DigitalOcean with built-in TLS support](docs/bootstrap/digitalocean-terraform/README.md)

|

||||

|

||||

## Operational concerns

|

||||

|

||||

### A note on private repos / registries

|

||||

|

||||

@@ -89,6 +89,81 @@ journalctl -t openfaas-fn:figlet -f &

|

||||

echo logs | faas-cli invoke figlet

|

||||

```

|

||||

|

||||

### Logs for the core services

|

||||

|

||||

Core services as defined in the docker-compose.yaml file are deployed as containers by faasd.

|

||||

|

||||

View the logs for a component by giving its NAME:

|

||||

|

||||

```bash

|

||||

journalctl -t default:NAME

|

||||

|

||||

journalctl -t default:gateway

|

||||

|

||||

journalctl -t default:queue-worker

|

||||

```

|

||||

|

||||

You can also use `-f` to follow the logs, or `--lines` to tail a number of lines, or `--since` to give a timeframe.

|

||||

|

||||

### Exposing core services

|

||||

|

||||

The OpenFaaS stack is made up of several core services including NATS and Prometheus. You can expose these through the `docker-compose.yaml` file located at `/var/lib/faasd`.

|

||||

|

||||

Expose the gateway to all adapters:

|

||||

|

||||

```yaml

|

||||

gateway:

|

||||

ports:

|

||||

- "8080:8080"

|

||||

```

|

||||

|

||||

Expose Prometheus only to 127.0.0.1:

|

||||

|

||||

```yaml

|

||||

prometheus:

|

||||

ports:

|

||||

- "127.0.0.1:9090:9090"

|

||||

```
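To make the two `ports` forms above concrete, here is a minimal Go sketch that splits a mapping into a host IP, a listening port, and a target port. It is a simplification under stated assumptions: `portMapping` and `parsePortMapping` are hypothetical names, it handles IPv4 and plain `HOST:CONTAINER` forms only, and it is not how faasd reads the compose file (the diff further down shows faasd vendoring the `compose-go` loader). The `0.0.0.0` default when no host IP is given follows the `cmd/up.go` change in this diff.

```go
package main

import (
	"fmt"
	"strconv"
	"strings"
)

// portMapping is a hypothetical type holding the parts of a compose
// short-syntax port entry such as "127.0.0.1:9090:9090".
type portMapping struct {
	HostIP     string
	ListenPort uint32
	TargetPort uint32
}

// parsePortMapping is an illustrative parser, not faasd's implementation.
// It accepts "HOST_PORT:CONTAINER_PORT" or "HOST_IP:HOST_PORT:CONTAINER_PORT".
func parsePortMapping(spec string) (portMapping, error) {
	// With no host IP the service is exposed on all adapters.
	m := portMapping{HostIP: "0.0.0.0"}

	parts := strings.Split(spec, ":")
	switch len(parts) {
	case 2:
		// Host port and container port only; keep the default host IP.
	case 3:
		m.HostIP = parts[0]
		parts = parts[1:]
	default:
		return portMapping{}, fmt.Errorf("unsupported port mapping %q", spec)
	}

	listen, err := strconv.ParseUint(parts[0], 10, 16)
	if err != nil {
		return portMapping{}, fmt.Errorf("invalid host port in %q: %w", spec, err)
	}
	target, err := strconv.ParseUint(parts[1], 10, 16)
	if err != nil {
		return portMapping{}, fmt.Errorf("invalid container port in %q: %w", spec, err)
	}

	m.ListenPort = uint32(listen)
	m.TargetPort = uint32(target)
	return m, nil
}

func main() {
	for _, spec := range []string{"8080:8080", "127.0.0.1:9090:9090"} {
		m, err := parsePortMapping(spec)
		if err != nil {
			fmt.Println(err)
			continue
		}
		fmt.Printf("%s: listen on %s:%d, forward to container port %d\n",
			spec, m.HostIP, m.ListenPort, m.TargetPort)
	}
}
```

Binding to `127.0.0.1` keeps a service such as Prometheus reachable only from the host itself, whereas the default exposes it on every network adapter.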

|

||||

|

||||

### Upgrading faasd

|

||||

|

||||

To upgrade `faasd` either re-create your VM using Terraform, or simply replace the faasd binary with a newer one.

|

||||

|

||||

```bash

|

||||

systemctl stop faasd-provider

|

||||

systemctl stop faasd

|

||||

|

||||

# Replace /usr/local/bin/faasd with the desired release

|

||||

|

||||

# Replace /var/lib/faasd/docker-compose.yaml with the matching version for

|

||||

# that release.

|

||||

# Remember to keep any custom patches you make such as exposing additional

|

||||

# ports, or updating timeout values

|

||||

|

||||

systemctl start faasd

|

||||

systemctl start faasd-provider

|

||||

```

|

||||

|

||||

You could also perform this task over SSH, or use a configuration management tool.

|

||||

|

||||

> Note: if you are using Caddy or Let's Encrypt for free SSL certificates, that you may hit rate-limits for generating new certificates if you do this too often within a given week.

|

||||

|

||||

## What does faasd deploy?

|

||||

|

||||

* faasd - itself, and its [faas-provider](https://github.com/openfaas/faas-provider) for containerd - CRUD for functions and services, implements the OpenFaaS REST API

|

||||

* [Prometheus](https://github.com/prometheus/prometheus) - for monitoring of services, metrics, scaling and dashboards

|

||||

* [OpenFaaS Gateway](https://github.com/openfaas/faas/tree/master/gateway) - the UI portal, CLI, and other OpenFaaS tooling can talk to this.

|

||||

* [OpenFaaS queue-worker for NATS](https://github.com/openfaas/nats-queue-worker) - run your invocations in the background without adding any code. See also: [asynchronous invocations](https://docs.openfaas.com/reference/triggers/#async-nats-streaming)

|

||||

* [NATS](https://nats.io) for asynchronous processing and queues

|

||||

|

||||

You'll also need:

|

||||

|

||||

* [CNI](https://github.com/containernetworking/plugins)

|

||||

* [containerd](https://github.com/containerd/containerd)

|

||||

* [runc](https://github.com/opencontainers/runc)

|

||||

|

||||

You can use the standard [faas-cli](https://github.com/openfaas/faas-cli) along with pre-packaged functions from *the Function Store*, or build your own using any OpenFaaS template.

|

||||

|

||||

### Manual / developer instructions

|

||||

|

||||

See [here for manual / developer instructions](docs/DEV.md)

|

||||

@@ -105,9 +180,17 @@ For community functions see `faas-cli store --help`

|

||||

|

||||

For templates built by the community see: `faas-cli template store list`, you can also use the `dockerfile` template if you just want to migrate an existing service without the benefits of using a template.

|

||||

|

||||

### Workshop

|

||||

### Training and courses

|

||||

|

||||

[The OpenFaaS workshop](https://github.com/openfaas/workshop/) is a set of 12 self-paced labs and provides a great starting point

|

||||

#### LinuxFoundation training course

|

||||

|

||||

The founder of faasd and OpenFaaS has written a training course for the LinuxFoundation which also covers how to use OpenFaaS on Kubernetes. Much of the same concepts can be applied to faasd, and the course is free:

|

||||

|

||||

* [Introduction to Serverless on Kubernetes](https://www.edx.org/course/introduction-to-serverless-on-kubernetes)

|

||||

|

||||

#### Community workshop

|

||||

|

||||

[The OpenFaaS workshop](https://github.com/openfaas/workshop/) is a set of 12 self-paced labs and provides a great starting point for learning the features of openfaas. Not all features will be available or usable with faasd.

|

||||

|

||||

### Community support

|

||||

|

||||

@@ -115,7 +198,7 @@ An active community of almost 3000 users awaits you on Slack. Over 250 of those

|

||||

|

||||

* [Join Slack](https://slack.openfaas.io/)

|

||||

|

||||

## Backlog

|

||||

## Roadmap

|

||||

|

||||

### Supported operations

|

||||

|

||||

@@ -139,19 +222,26 @@ Other operations are pending development in the provider such as:

|

||||

|

||||

* `faas auth` - supported for Basic Authentication, but OAuth2 & OIDC require a patch

|

||||

|

||||

## Todo

|

||||

### Backlog

|

||||

|

||||

Pending:

|

||||

|

||||

* [ ] Add support for using container images in third-party public registries

|

||||

* [ ] Add support for using container images in private third-party registries

|

||||

* [ ] [Store and retrieve annotations in function spec](https://github.com/openfaas/faasd/pull/86) - in progress

|

||||

* [ ] Offer live rolling-updates, with zero downtime - requires moving to IDs vs. names for function containers

|

||||

* [ ] An installer for faasd and dependencies - runc, containerd

|

||||

* [ ] Monitor and restart any of the core components at runtime if the container stops

|

||||

* [ ] Bundle/package/automate installation of containerd - [see bootstrap from k3s](https://github.com/rancher/k3s)

|

||||

* [ ] Provide ufw rules / example for blocking access to everything but a reverse proxy to the gateway container

|

||||

* [ ] Provide [simple Caddyfile example](https://blog.alexellis.io/https-inlets-local-endpoints/) in the README showing how to expose the faasd proxy on port 80/443 with TLS

|

||||

|

||||

Done:

|

||||

### Known-issues

|

||||

|

||||

* [ ] [containerd can't pull image from Github Docker Package Registry](https://github.com/containerd/containerd/issues/3291)

|

||||

|

||||

### Completed

|

||||

|

||||

* [x] Provide a cloud-init configuration for faasd bootstrap

|

||||

* [x] Configure core services from a docker-compose.yaml file

|

||||

* [x] Store and fetch logs from the journal

|

||||

* [x] Add support for using container images in third-party public registries

|

||||

* [x] Add support for using container images in private third-party registries

|

||||

* [x] Provide a cloud-config.txt file for automated deployments of `faasd`

|

||||

* [x] Inject / manage IPs between core components for service to service communication - i.e. so Prometheus can scrape the OpenFaaS gateway - done via `/etc/hosts` mount

|

||||

* [x] Add queue-worker and NATS

|

||||

@@ -163,4 +253,9 @@ Done:

|

||||

* [x] Configure `basic_auth` to protect the OpenFaaS gateway and faasd-provider HTTP API

|

||||

* [x] Setup custom working directory for faasd `/var/lib/faasd/`

|

||||

* [x] Use CNI to create network namespaces and adapters

|

||||

* [x] Optionally expose core services from the docker-compose.yaml file, locally or to all adapters.

|

||||

|

||||

WIP:

|

||||

|

||||

* [ ] Annotation support (PR ready)

|

||||

* [ ] Hard memory limits for functions (PR ready)

|

||||

|

||||

@@ -1,5 +1,6 @@

|

||||

#cloud-config

|

||||

ssh_authorized_keys:

|

||||

## Note: Replace with your own public key

|

||||

- ssh-rsa AAAAB3NzaC1yc2EAAAADAQABAAABAQC8Q/aUYUr3P1XKVucnO9mlWxOjJm+K01lHJR90MkHC9zbfTqlp8P7C3J26zKAuzHXOeF+VFxETRr6YedQKW9zp5oP7sN+F2gr/pO7GV3VmOqHMV7uKfyUQfq7H1aVzLfCcI7FwN2Zekv3yB7kj35pbsMa1Za58aF6oHRctZU6UWgXXbRxP+B04DoVU7jTstQ4GMoOCaqYhgPHyjEAS3DW0kkPW6HzsvJHkxvVcVlZ/wNJa1Ie/yGpzOzWIN0Ol0t2QT/RSWOhfzO1A2P0XbPuZ04NmriBonO9zR7T1fMNmmtTuK7WazKjQT3inmYRAqU6pe8wfX8WIWNV7OowUjUsv alex@alexr.local

|

||||

|

||||

package_update: true

|

||||

@@ -8,21 +9,21 @@ packages:

|

||||

- runc

|

||||

|

||||

runcmd:

|

||||

- curl -sLSf https://github.com/containerd/containerd/releases/download/v1.3.2/containerd-1.3.2.linux-amd64.tar.gz > /tmp/containerd.tar.gz && tar -xvf /tmp/containerd.tar.gz -C /usr/local/bin/ --strip-components=1

|

||||

- curl -SLfs https://raw.githubusercontent.com/containerd/containerd/v1.3.2/containerd.service | tee /etc/systemd/system/containerd.service

|

||||

- curl -sLSf https://github.com/containerd/containerd/releases/download/v1.3.5/containerd-1.3.5-linux-amd64.tar.gz > /tmp/containerd.tar.gz && tar -xvf /tmp/containerd.tar.gz -C /usr/local/bin/ --strip-components=1

|

||||

- curl -SLfs https://raw.githubusercontent.com/containerd/containerd/v1.3.5/containerd.service | tee /etc/systemd/system/containerd.service

|

||||

- systemctl daemon-reload && systemctl start containerd

|

||||

- systemctl enable containerd

|

||||

- /sbin/sysctl -w net.ipv4.conf.all.forwarding=1

|

||||

- mkdir -p /opt/cni/bin

|

||||

- curl -sSL https://github.com/containernetworking/plugins/releases/download/v0.8.5/cni-plugins-linux-amd64-v0.8.5.tgz | tar -xz -C /opt/cni/bin

|

||||

- mkdir -p /go/src/github.com/openfaas/

|

||||

- cd /go/src/github.com/openfaas/ && git clone https://github.com/openfaas/faasd

|

||||

- curl -fSLs "https://github.com/openfaas/faasd/releases/download/0.8.1/faasd" --output "/usr/local/bin/faasd" && chmod a+x "/usr/local/bin/faasd"

|

||||

- cd /go/src/github.com/openfaas/ && git clone https://github.com/openfaas/faasd && git checkout 0.9.2

|

||||

- curl -fSLs "https://github.com/openfaas/faasd/releases/download/0.9.2/faasd" --output "/usr/local/bin/faasd" && chmod a+x "/usr/local/bin/faasd"

|

||||

- cd /go/src/github.com/openfaas/faasd/ && /usr/local/bin/faasd install

|

||||

- systemctl status -l containerd --no-pager

|

||||

- journalctl -u faasd-provider --no-pager

|

||||

- systemctl status -l faasd-provider --no-pager

|

||||

- systemctl status -l faasd --no-pager

|

||||

- curl -sSLf https://cli.openfaas.com | sh

|

||||

- sleep 5 && journalctl -u faasd --no-pager

|

||||

- sleep 60 && journalctl -u faasd --no-pager

|

||||

- cat /var/lib/faasd/secrets/basic-auth-password | /usr/local/bin/faas-cli login --password-stdin

|

||||

|

||||

@@ -38,6 +38,10 @@ func runInstall(_ *cobra.Command, _ []string) error {

|

||||

return errors.Wrap(basicAuthErr, "cannot create basic-auth-* files")

|

||||

}

|

||||

|

||||

if err := cp("docker-compose.yaml", faasdwd); err != nil {

|

||||

return err

|

||||

}

|

||||

|

||||

if err := cp("prometheus.yml", faasdwd); err != nil {

|

||||

return err

|

||||

}

|

||||

|

||||

@@ -47,6 +47,7 @@ func makeProviderCmd() *cobra.Command {

|

||||

}

|

||||

|

||||

log.Printf("faasd-provider starting..\tService Timeout: %s\n", config.WriteTimeout.String())

|

||||

printVersion()

|

||||

|

||||

wd, err := os.Getwd()

|

||||

if err != nil {

|

||||

|

||||

10

cmd/root.go

@@ -69,11 +69,11 @@ var versionCmd = &cobra.Command{

|

||||

func parseBaseCommand(_ *cobra.Command, _ []string) {

|

||||

printLogo()

|

||||

|

||||

fmt.Printf(

|

||||

`faasd

|

||||

Commit: %s

|

||||

Version: %s

|

||||

`, GitCommit, GetVersion())

|

||||

printVersion()

|

||||

}

|

||||

|

||||

func printVersion() {

|

||||

fmt.Printf("faasd version: %s\tcommit: %s\n", GetVersion(), GitCommit)

|

||||

}

|

||||

|

||||

func printLogo() {

|

||||

|

||||

248

cmd/up.go

@@ -7,54 +7,61 @@ import (

|

||||

"os"

|

||||

"os/signal"

|

||||

"path"

|

||||

"strings"

|

||||

"sync"

|

||||

"syscall"

|

||||

"time"

|

||||

|

||||

"github.com/pkg/errors"

|

||||

|

||||

"github.com/alexellis/k3sup/pkg/env"

|

||||

"github.com/openfaas/faasd/pkg"

|

||||

"github.com/sethvargo/go-password/password"

|

||||

"github.com/spf13/cobra"

|

||||

flag "github.com/spf13/pflag"

|

||||

|

||||

"github.com/openfaas/faasd/pkg"

|

||||

)

|

||||

|

||||

// upConfig are the CLI flags used by the `faasd up` command to deploy the faasd service

|

||||

type upConfig struct {

|

||||

// composeFilePath is the path to the compose file specifying the faasd service configuration

|

||||

// See https://compose-spec.io/ for more information about the spec,

|

||||

//

|

||||

// currently, this must be the name of a file in workingDir, which is set to the value of

|

||||

// `faasdwd = /var/lib/faasd`

|

||||

composeFilePath string

|

||||

|

||||

// working directory to assume the compose file is in, should be faasdwd.

|

||||

// this is not configurable but may be in the future.

|

||||

workingDir string

|

||||

}

|

||||

|

||||

func init() {

|

||||

configureUpFlags(upCmd.Flags())

|

||||

}

|

||||

|

||||

var upCmd = &cobra.Command{

|

||||

Use: "up",

|

||||

Short: "Start faasd",

|

||||

RunE: runUp,

|

||||

}

|

||||

|

||||

const containerSecretMountDir = "/run/secrets"

|

||||

func runUp(cmd *cobra.Command, _ []string) error {

|

||||

|

||||

func runUp(_ *cobra.Command, _ []string) error {

|

||||

printVersion()

|

||||

|

||||

clientArch, clientOS := env.GetClientArch()

|

||||

|

||||

if clientOS != "Linux" {

|

||||

return fmt.Errorf("You can only use faasd on Linux")

|

||||

}

|

||||

clientSuffix := ""

|

||||

switch clientArch {

|

||||

case "x86_64":

|

||||

clientSuffix = ""

|

||||

break

|

||||

case "armhf":

|

||||

case "armv7l":

|

||||

clientSuffix = "-armhf"

|

||||

break

|

||||

case "arm64":

|

||||

case "aarch64":

|

||||

clientSuffix = "-arm64"

|

||||

cfg, err := parseUpFlags(cmd)

|

||||

if err != nil {

|

||||

return err

|

||||

}

|

||||

|

||||

if basicAuthErr := makeBasicAuthFiles(path.Join(path.Join(faasdwd, "secrets"))); basicAuthErr != nil {

|

||||

services, err := loadServiceDefinition(cfg)

|

||||

if err != nil {

|

||||

return err

|

||||

}

|

||||

|

||||

basicAuthErr := makeBasicAuthFiles(path.Join(cfg.workingDir, "secrets"))

|

||||

if basicAuthErr != nil {

|

||||

return errors.Wrap(basicAuthErr, "cannot create basic-auth-* files")

|

||||

}

|

||||

|

||||

services := makeServiceDefinitions(clientSuffix)

|

||||

|

||||

start := time.Now()

|

||||

supervisor, err := pkg.NewSupervisor("/run/containerd/containerd.sock")

|

||||

if err != nil {

|

||||

@@ -64,20 +71,15 @@ func runUp(_ *cobra.Command, _ []string) error {

|

||||

log.Printf("Supervisor created in: %s\n", time.Since(start).String())

|

||||

|

||||

start = time.Now()

|

||||

|

||||

err = supervisor.Start(services)

|

||||

|

||||

if err != nil {

|

||||

if err := supervisor.Start(services); err != nil {

|

||||

return err

|

||||

}

|

||||

|

||||

defer supervisor.Close()

|

||||

|

||||

log.Printf("Supervisor init done in: %s\n", time.Since(start).String())

|

||||

|

||||

shutdownTimeout := time.Second * 1

|

||||

timeout := time.Second * 60

|

||||

proxyDoneCh := make(chan bool)

|

||||

|

||||

wg := sync.WaitGroup{}

|

||||

wg.Add(1)

|

||||

@@ -94,40 +96,38 @@ func runUp(_ *cobra.Command, _ []string) error {

|

||||

fmt.Println(err)

|

||||

}

|

||||

|

||||

// Close proxy

|

||||

proxyDoneCh <- true

|

||||

// TODO: close proxies

|

||||

time.AfterFunc(shutdownTimeout, func() {

|

||||

wg.Done()

|

||||

})

|

||||

}()

|

||||

|

||||

gatewayURLChan := make(chan string, 1)

|

||||

proxyPort := 8080

|

||||

proxy := pkg.NewProxy(proxyPort, timeout)

|

||||

go proxy.Start(gatewayURLChan, proxyDoneCh)

|

||||

localResolver := pkg.NewLocalResolver(path.Join(cfg.workingDir, "hosts"))

|

||||

go localResolver.Start()

|

||||

|

||||

go func() {

|

||||

wd, _ := os.Getwd()

|

||||

proxies := map[uint32]*pkg.Proxy{}

|

||||

for _, svc := range services {

|

||||

for _, port := range svc.Ports {

|

||||

|

||||

time.Sleep(3 * time.Second)

|

||||

|

||||

fileData, fileErr := ioutil.ReadFile(path.Join(wd, "hosts"))

|

||||

if fileErr != nil {

|

||||

log.Println(fileErr)

|

||||

return

|

||||

}

|

||||

|

||||

host := ""

|

||||

lines := strings.Split(string(fileData), "\n")

|

||||

for _, line := range lines {

|

||||

if strings.Index(line, "gateway") > -1 {

|

||||

host = line[:strings.Index(line, "\t")]

|

||||

listenPort := port.Port

|

||||

if _, ok := proxies[listenPort]; ok {

|

||||

return fmt.Errorf("port %d already allocated", listenPort)

|

||||

}

|

||||

|

||||

hostIP := "0.0.0.0"

|

||||

if len(port.HostIP) > 0 {

|

||||

hostIP = port.HostIP

|

||||

}

|

||||

|

||||

upstream := fmt.Sprintf("%s:%d", svc.Name, port.TargetPort)

|

||||

proxies[listenPort] = pkg.NewProxy(upstream, listenPort, hostIP, timeout, localResolver)

|

||||

}

|

||||

log.Printf("[up] Sending %s to proxy\n", host)

|

||||

gatewayURLChan <- host + ":8080"

|

||||

close(gatewayURLChan)

|

||||

}()

|

||||

}

|

||||

|

||||

// TODO: track proxies for later cancellation when receiving sigint/term

|

||||

for _, v := range proxies {

|

||||

go v.Start()

|

||||

}

|

||||

|

||||

wg.Wait()

|

||||

return nil

|

||||

@ -135,7 +135,7 @@ func runUp(_ *cobra.Command, _ []string) error {

|

||||

|

||||

func makeBasicAuthFiles(wd string) error {

|

||||

|

||||

pwdFile := wd + "/basic-auth-password"

|

||||

pwdFile := path.Join(wd, "basic-auth-password")

|

||||

authPassword, err := password.Generate(63, 10, 0, false, true)

|

||||

|

||||

if err != nil {

|

||||

@ -147,7 +147,7 @@ func makeBasicAuthFiles(wd string) error {

|

||||

return err

|

||||

}

|

||||

|

||||

userFile := wd + "/basic-auth-user"

|

||||

userFile := path.Join(wd, "basic-auth-user")

|

||||

err = makeFile(userFile, "admin")

|

||||

if err != nil {

|

||||

return err

|

||||

@ -156,6 +156,8 @@ func makeBasicAuthFiles(wd string) error {

|

||||

return nil

|

||||

}

|

||||

|

||||

// makeFile will create a file with the specified content if it does not exist yet.

|

||||

// if the file already exists, the method is a noop.

|

||||

func makeFile(filePath, fileContents string) error {

|

||||

_, err := os.Stat(filePath)

|

||||

if err == nil {

|

||||

@ -169,105 +171,35 @@ func makeFile(filePath, fileContents string) error {

|

||||

}

|

||||

}

|

||||

|

||||

func makeServiceDefinitions(archSuffix string) []pkg.Service {

|

||||

wd, _ := os.Getwd()

|

||||

// load the docker compose file and then parse it as supervisor Services

|

||||

// the logic for loading the compose file comes from the compose reference implementation

|

||||

// https://github.com/compose-spec/compose-ref/blob/master/compose-ref.go#L353

|

||||

func loadServiceDefinition(cfg upConfig) ([]pkg.Service, error) {

|

||||

|

||||

return []pkg.Service{

|

||||

{

|

||||

Name: "basic-auth-plugin",

|

||||

Image: "docker.io/openfaas/basic-auth-plugin:0.18.10" + archSuffix,

|

||||

Env: []string{

|

||||

"port=8080",

|

||||

"secret_mount_path=" + containerSecretMountDir,

|

||||

"user_filename=basic-auth-user",

|

||||

"pass_filename=basic-auth-password",

|

||||

},

|

||||

Mounts: []pkg.Mount{

|

||||

{

|

||||

Src: path.Join(path.Join(wd, "secrets"), "basic-auth-password"),

|

||||

Dest: path.Join(containerSecretMountDir, "basic-auth-password"),

|

||||

},

|

||||

{

|

||||

Src: path.Join(path.Join(wd, "secrets"), "basic-auth-user"),

|

||||

Dest: path.Join(containerSecretMountDir, "basic-auth-user"),

|

||||

},

|

||||

},

|

||||

Caps: []string{"CAP_NET_RAW"},

|

||||

Args: nil,

|

||||

},

|

||||

{

|

||||

Name: "nats",

|

||||

Env: []string{""},

|

||||

Image: "docker.io/library/nats-streaming:0.11.2",

|

||||

Caps: []string{},

|

||||

Args: []string{"/nats-streaming-server", "-m", "8222", "--store=memory", "--cluster_id=faas-cluster"},

|

||||

},

|

||||

{

|

||||

Name: "prometheus",

|

||||

Env: []string{},

|

||||

Image: "docker.io/prom/prometheus:v2.14.0",

|

||||

Mounts: []pkg.Mount{

|

||||

{

|

||||

Src: path.Join(wd, "prometheus.yml"),

|

||||

Dest: "/etc/prometheus/prometheus.yml",

|

||||

},

|

||||

},

|

||||

Caps: []string{"CAP_NET_RAW"},

|

||||

},

|

||||

{

|

||||

Name: "gateway",

|

||||

Env: []string{

|

||||

"basic_auth=true",

|

||||

"functions_provider_url=http://faasd-provider:8081/",

|

||||

"direct_functions=false",

|

||||

"read_timeout=60s",

|

||||

"write_timeout=60s",

|

||||

"upstream_timeout=65s",

|

||||

"faas_nats_address=nats",

|

||||

"faas_nats_port=4222",

|

||||

"auth_proxy_url=http://basic-auth-plugin:8080/validate",

|

||||

"auth_proxy_pass_body=false",

|

||||

"secret_mount_path=" + containerSecretMountDir,

|

||||

"scale_from_zero=true",

|

||||

},

|

||||

Image: "docker.io/openfaas/gateway:0.18.8" + archSuffix,

|

||||

Mounts: []pkg.Mount{

|

||||

{

|

||||

Src: path.Join(path.Join(wd, "secrets"), "basic-auth-password"),

|

||||

Dest: path.Join(containerSecretMountDir, "basic-auth-password"),

|

||||

},

|

||||

{

|

||||

Src: path.Join(path.Join(wd, "secrets"), "basic-auth-user"),

|

||||

Dest: path.Join(containerSecretMountDir, "basic-auth-user"),

|

||||

},

|

||||

},

|

||||

Caps: []string{"CAP_NET_RAW"},

|

||||

},

|

||||

{

|

||||

Name: "queue-worker",

|

||||

Env: []string{

|

||||

"faas_nats_address=nats",

|

||||

"faas_nats_port=4222",

|

||||

"gateway_invoke=true",

|

||||

"faas_gateway_address=gateway",

|

||||

"ack_wait=5m5s",

|

||||

"max_inflight=1",

|

||||

"write_debug=false",

|

||||

"basic_auth=true",

|

||||

"secret_mount_path=" + containerSecretMountDir,

|

||||

},

|

||||

Image: "docker.io/openfaas/queue-worker:0.9.0",

|

||||

Mounts: []pkg.Mount{

|

||||

{

|

||||

Src: path.Join(path.Join(wd, "secrets"), "basic-auth-password"),

|

||||

Dest: path.Join(containerSecretMountDir, "basic-auth-password"),

|

||||

},

|

||||

{

|

||||

Src: path.Join(path.Join(wd, "secrets"), "basic-auth-user"),

|

||||

Dest: path.Join(containerSecretMountDir, "basic-auth-user"),

|

||||

},

|

||||

},

|

||||

Caps: []string{"CAP_NET_RAW"},

|

||||

},

|

||||

serviceConfig, err := pkg.LoadComposeFile(cfg.workingDir, cfg.composeFilePath)

|

||||

if err != nil {

|

||||

return nil, err

|

||||

}

|

||||

|

||||

return pkg.ParseCompose(serviceConfig)

|

||||

}

|

||||

|

||||

// ConfigureUpFlags will define the flags for the `faasd up` command. The flag struct, configure, and

|

||||

// parse are split like this to simplify testability.

|

||||

func configureUpFlags(flags *flag.FlagSet) {

|

||||

flags.StringP("file", "f", "docker-compose.yaml", "compose file specifying the faasd service configuration")

|

||||

}

|

||||

|

||||

// ParseUpFlags will load the flag values into an upFlags object. Errors will be underlying

|

||||

// Get errors from the pflag library.

|

||||

func parseUpFlags(cmd *cobra.Command) (upConfig, error) {

|

||||

parsed := upConfig{}

|

||||

path, err := cmd.Flags().GetString("file")

|

||||

if err != nil {

|

||||

return parsed, errors.Wrap(err, "can not parse compose file path flag")

|

||||

}

|

||||

|

||||

parsed.composeFilePath = path

|

||||

parsed.workingDir = faasdwd

|

||||

return parsed, err

|

||||

}

|

||||

|

||||
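The comments on `configureUpFlags` and `parseUpFlags` above say that defining and parsing the flags are split to simplify testing. Here is a minimal sketch of that pattern. It assumes the standard library `flag` package in place of cobra/pflag so that it runs without dependencies (so there is no `-f` shorthand here). The `parseUpArgs` helper and the `workingDir` parameter are hypothetical names added for illustration and are not part of faasd.

```go
package main

import (
	"errors"
	"flag"
)

// upConfig mirrors the two fields populated in the diff above.
type upConfig struct {
	composeFilePath string
	workingDir      string
}

// configureUpFlags only declares flags, so a test can apply it to a throwaway FlagSet.
func configureUpFlags(flags *flag.FlagSet) {
	flags.String("file", "docker-compose.yaml", "compose file specifying the faasd service configuration")
}

// parseUpFlags reads already-parsed flag values into an upConfig.
func parseUpFlags(flags *flag.FlagSet, workingDir string) (upConfig, error) {
	f := flags.Lookup("file")
	if f == nil {
		return upConfig{}, errors.New("file flag is not defined")
	}
	return upConfig{composeFilePath: f.Value.String(), workingDir: workingDir}, nil
}

// parseUpArgs (hypothetical helper) wires both steps together for a given argument list.
func parseUpArgs(args []string, workingDir string) (upConfig, error) {
	flags := flag.NewFlagSet("up", flag.ContinueOnError)
	configureUpFlags(flags)
	if err := flags.Parse(args); err != nil {
		return upConfig{}, err
	}
	return parseUpFlags(flags, workingDir)
}
```

Because neither function touches global state, a test can call `parseUpArgs([]string{"-file", "custom.yaml"}, "/var/lib/faasd")` and inspect the returned struct directly.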

98

docker-compose.yaml

Normal file

@ -0,0 +1,98 @@

|

||||

version: "3.7"

|

||||

services:

|

||||

basic-auth-plugin:

|

||||

image: "docker.io/openfaas/basic-auth-plugin:0.18.18${ARCH_SUFFIX}"

|

||||

environment:

|

||||

- port=8080

|

||||

- secret_mount_path=/run/secrets

|

||||

- user_filename=basic-auth-user

|

||||

- pass_filename=basic-auth-password

|

||||

volumes:

|

||||

# we assume cwd == /var/lib/faasd

|

||||

- type: bind

|

||||

source: ./secrets/basic-auth-password

|

||||

target: /run/secrets/basic-auth-password

|

||||

- type: bind

|

||||

source: ./secrets/basic-auth-user

|

||||

target: /run/secrets/basic-auth-user

|

||||

cap_add:

|

||||

- CAP_NET_RAW

|

||||

|

||||

nats:

|

||||

image: docker.io/library/nats-streaming:0.11.2

|

||||

command:

|

||||

- "/nats-streaming-server"

|

||||

- "-m"

|

||||

- "8222"

|

||||

- "--store=memory"

|

||||

- "--cluster_id=faas-cluster"

|

||||

# ports:

|

||||

# - "127.0.0.1:8222:8222"

|

||||

|

||||

prometheus:

|

||||

image: docker.io/prom/prometheus:v2.14.0

|

||||

volumes:

|

||||

- type: bind

|

||||

source: ./prometheus.yml

|

||||

target: /etc/prometheus/prometheus.yml

|

||||

cap_add:

|

||||

- CAP_NET_RAW

|

||||

ports:

|

||||

- "127.0.0.1:9090:9090"

|

||||

|

||||

gateway:

|

||||

image: "docker.io/openfaas/gateway:0.18.18${ARCH_SUFFIX}"

|

||||

environment:

|

||||

- basic_auth=true

|

||||

- functions_provider_url=http://faasd-provider:8081/

|

||||

- direct_functions=false

|

||||

- read_timeout=60s

|

||||

- write_timeout=60s

|

||||

- upstream_timeout=65s

|

||||

- faas_nats_address=nats

|

||||

- faas_nats_port=4222

|

||||

- auth_proxy_url=http://basic-auth-plugin:8080/validate

|

||||

- auth_proxy_pass_body=false

|

||||

- secret_mount_path=/run/secrets

|

||||

- scale_from_zero=true

|

||||

volumes:

|

||||

# we assume cwd == /var/lib/faasd

|

||||

- type: bind

|

||||

source: ./secrets/basic-auth-password

|

||||

target: /run/secrets/basic-auth-password

|

||||

- type: bind

|

||||

source: ./secrets/basic-auth-user

|

||||

target: /run/secrets/basic-auth-user

|

||||

cap_add:

|

||||

- CAP_NET_RAW

|

||||

depends_on:

|

||||

- basic-auth-plugin

|

||||

- nats

|

||||

- prometheus

|

||||

ports:

|

||||

- "8080:8080"

|

||||

|

||||

queue-worker:

|

||||

image: docker.io/openfaas/queue-worker:0.11.2

|

||||

environment:

|

||||

- faas_nats_address=nats

|

||||

- faas_nats_port=4222

|

||||

- gateway_invoke=true

|

||||

- faas_gateway_address=gateway

|

||||

- ack_wait=5m5s

|

||||

- max_inflight=1

|

||||

- write_debug=false

|

||||

- basic_auth=true

|

||||

- secret_mount_path=/run/secrets

|

||||

volumes:

|

||||

# we assume cwd == /var/lib/faasd

|

||||

- type: bind

|

||||

source: ./secrets/basic-auth-password

|

||||

target: /run/secrets/basic-auth-password

|

||||

- type: bind

|

||||

source: ./secrets/basic-auth-user

|

||||

target: /run/secrets/basic-auth-user

|

||||

cap_add:

|

||||

- CAP_NET_RAW

|

||||

depends_on:

|

||||

- nats

|

||||
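The `ports` entries in the compose file above (`"127.0.0.1:9090:9090"`, `"8080:8080"`) feed the proxy loop in `runUp`, which defaults the host IP to `0.0.0.0` and rejects a listen port that is already taken. The sketch below illustrates that mapping using only the standard library. The `portMapping` type and the `parsePort` and `allocate` functions are hypothetical names for this example; faasd itself relies on the compose loader rather than hand-written parsing, and this sketch does not handle IPv6 host addresses.

```go
package main

import (
	"fmt"
	"strconv"
	"strings"
)

// portMapping is a hypothetical type for this sketch; faasd's own types may differ.
type portMapping struct {
	HostIP     string
	Port       uint32
	TargetPort uint32
}

// parsePort accepts "HOST_PORT:TARGET_PORT" or "HOST_IP:HOST_PORT:TARGET_PORT".
func parsePort(spec string) (portMapping, error) {
	m := portMapping{HostIP: "0.0.0.0"} // same default as the proxy loop above
	parts := strings.Split(spec, ":")
	switch len(parts) {
	case 2:
		// no host IP given, keep the default
	case 3:
		m.HostIP = parts[0]
		parts = parts[1:]
	default:
		return m, fmt.Errorf("unsupported port spec %q", spec)
	}

	hostPort, err := strconv.ParseUint(parts[0], 10, 32)
	if err != nil {
		return m, fmt.Errorf("invalid host port in %q: %w", spec, err)
	}
	targetPort, err := strconv.ParseUint(parts[1], 10, 32)
	if err != nil {
		return m, fmt.Errorf("invalid target port in %q: %w", spec, err)
	}
	m.Port, m.TargetPort = uint32(hostPort), uint32(targetPort)
	return m, nil
}

// allocate indexes mappings by listen port and fails on duplicates.
func allocate(specs []string) (map[uint32]portMapping, error) {
	allocated := map[uint32]portMapping{}
	for _, spec := range specs {
		m, err := parsePort(spec)
		if err != nil {
			return nil, err
		}
		if _, ok := allocated[m.Port]; ok {
			return nil, fmt.Errorf("port %d already allocated", m.Port)
		}
		allocated[m.Port] = m
	}
	return allocated, nil
}
```

Keying the map by listen port is what makes the duplicate check a single lookup, which matches the `proxies[listenPort]` check in the diff.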

126

docs/DEV.md

@ -14,10 +14,67 @@

|

||||

|

||||

For Windows users, install [Git Bash](https://git-scm.com/downloads) along with multipass or vagrant. You can also use WSL1 or WSL2 which provides a Linux environment.

|

||||

|

||||

You will also need [containerd v1.3.2](https://github.com/containerd/containerd) and the [CNI plugins v0.8.5](https://github.com/containernetworking/plugins)

|

||||

You will also need [containerd v1.3.5](https://github.com/containerd/containerd) and the [CNI plugins v0.8.5](https://github.com/containernetworking/plugins)

|

||||

|

||||

[faas-cli](https://github.com/openfaas/faas-cli) is optional, but recommended.

|

||||

|

||||

If you're using multipass, then allocate sufficient resources:

|

||||

|

||||

```sh

|

||||

multipass launch \

|

||||

--mem 4G \

|

||||

-c 2 \

|

||||

-n faasd

|

||||

|

||||

# Then access its shell

|

||||

multipass shell faasd

|

||||

```

|

||||

|

||||

### Get runc

|

||||

|

||||

```sh

|

||||

sudo apt update \

|

||||

&& sudo apt install -qy \

|

||||

runc \

|

||||

bridge-utils \

|

||||

make

|

||||

```

|

||||

|

||||

### Get faas-cli (optional)

|

||||

|

||||

Having `faas-cli` on your dev machine is useful for testing and debugging.

|

||||

|

||||

```bash

|

||||

curl -sLS https://cli.openfaas.com | sudo sh

|

||||

```

|

||||

|

||||

#### Install the CNI plugins:

|

||||

|

||||

* For PC run `export ARCH=amd64`

|

||||

* For RPi/armhf run `export ARCH=arm`

|

||||

* For arm64 run `export ARCH=arm64`

|

||||

|

||||

Then run:

|

||||

|

||||

```sh

|

||||

export ARCH=amd64

|

||||

export CNI_VERSION=v0.8.5

|

||||

|

||||

sudo mkdir -p /opt/cni/bin

|

||||

curl -sSL https://github.com/containernetworking/plugins/releases/download/${CNI_VERSION}/cni-plugins-linux-${ARCH}-${CNI_VERSION}.tgz | sudo tar -xz -C /opt/cni/bin

|

||||

|

||||

# Make a config folder for CNI definitions

|

||||

sudo mkdir -p /etc/cni/net.d

|

||||

|

||||

# Make an initial loopback configuration

|

||||

sudo sh -c 'cat >/etc/cni/net.d/99-loopback.conf <<-EOF

|

||||

{

|

||||

"cniVersion": "0.3.1",

|

||||

"type": "loopback"

|

||||

}

|

||||

EOF'

|

||||

```

|

||||

|

||||

### Get containerd

|

||||

|

||||

You have three options - binaries for PC, binaries for armhf, or build from source.

|

||||

@ -25,8 +82,8 @@ You have three options - binaries for PC, binaries for armhf, or build from sour

|

||||

* Install containerd `x86_64` only

|

||||

|

||||

```sh

|

||||

export VER=1.3.2

|

||||

curl -sLSf https://github.com/containerd/containerd/releases/download/v$VER/containerd-$VER.linux-amd64.tar.gz > /tmp/containerd.tar.gz \

|

||||

export VER=1.3.5

|

||||

curl -sSL https://github.com/containerd/containerd/releases/download/v$VER/containerd-$VER-linux-amd64.tar.gz > /tmp/containerd.tar.gz \

|

||||

&& sudo tar -xvf /tmp/containerd.tar.gz -C /usr/local/bin/ --strip-components=1

|

||||

|

||||

containerd -version

|

||||

@ -37,7 +94,7 @@ containerd -version

|

||||

Building `containerd` on armhf is extremely slow, so I've provided binaries for you.

|

||||

|

||||

```sh

|

||||

curl -sSL https://github.com/alexellis/containerd-armhf/releases/download/v1.3.2/containerd.tgz | sudo tar -xvz --strip-components=2 -C /usr/local/bin/

|

||||

curl -sSL https://github.com/alexellis/containerd-armhf/releases/download/v1.3.5/containerd.tgz | sudo tar -xvz --strip-components=2 -C /usr/local/bin/

|

||||

```

|

||||

|

||||

* Or clone / build / install [containerd](https://github.com/containerd/containerd) from source:

|

||||

@ -49,7 +106,7 @@ containerd -version

|

||||

git clone https://github.com/containerd/containerd

|

||||

cd containerd

|

||||

git fetch origin --tags

|

||||

git checkout v1.3.2

|

||||

git checkout v1.3.5

|

||||

|

||||

make

|

||||

sudo make install

|

||||

@ -60,7 +117,11 @@ containerd -version

|

||||

#### Ensure containerd is running

|

||||

|

||||

```sh

|

||||

curl -sLS https://raw.githubusercontent.com/containerd/containerd/master/containerd.service > /tmp/containerd.service

|

||||

curl -sLS https://raw.githubusercontent.com/containerd/containerd/v1.3.5/containerd.service > /tmp/containerd.service

|

||||

|

||||

# Extend the timeouts for low-performance VMs

|

||||

echo "[Manager]" | tee -a /tmp/containerd.service

|

||||

echo "DefaultTimeoutStartSec=3m" | tee -a /tmp/containerd.service

|

||||

|

||||

sudo cp /tmp/containerd.service /lib/systemd/system/

|

||||

sudo systemctl enable containerd

|

||||

@ -69,7 +130,7 @@ sudo systemctl daemon-reload

|

||||

sudo systemctl restart containerd

|

||||

```

|

||||

|

||||

Or run ad-hoc:

|

||||

Or run ad-hoc. This step can be useful for exploring why containerd might fail to start.

|

||||

|

||||

```sh

|

||||

sudo containerd &

|

||||

@ -106,10 +167,10 @@ You may find alternative package names for CentOS and other Linux distributions.

|

||||

#### Install Go 1.13 (x86_64)

|

||||

|

||||

```sh

|

||||

curl -sSLf https://dl.google.com/go/go1.13.6.linux-amd64.tar.gz > go.tgz

|

||||

curl -sSLf https://dl.google.com/go/go1.13.6.linux-amd64.tar.gz > /tmp/go.tgz

|

||||

sudo rm -rf /usr/local/go/

|

||||

sudo mkdir -p /usr/local/go/

|

||||

sudo tar -xvf go.tgz -C /usr/local/go/ --strip-components=1

|

||||

sudo tar -xvf /tmp/go.tgz -C /usr/local/go/ --strip-components=1

|

||||

|

||||

export GOPATH=$HOME/go/

|

||||

export PATH=$PATH:/usr/local/go/bin/

|

||||

@ -120,8 +181,8 @@ go version

|

||||

You should also add the following to `~/.bash_profile`:

|

||||

|

||||

```sh

|

||||

export GOPATH=$HOME/go/

|

||||

export PATH=$PATH:/usr/local/go/bin/

|

||||

echo "export GOPATH=\$HOME/go/" | tee -a $HOME/.bash_profile

|

||||

echo "export PATH=\$PATH:/usr/local/go/bin/" | tee -a $HOME/.bash_profile

|

||||

```

|

||||

|

||||

#### Or on Raspberry Pi (armhf)

|

||||

@ -138,22 +199,6 @@ export PATH=$PATH:/usr/local/go/bin/

|

||||

go version

|

||||

```

|

||||

|

||||

#### Install the CNI plugins:

|

||||

|

||||

* For PC run `export ARCH=amd64`

|

||||

* For RPi/armhf run `export ARCH=arm`

|

||||

* For arm64 run `export ARCH=arm64`

|

||||

|

||||

Then run:

|

||||

|

||||

```sh

|

||||

export ARCH=amd64

|

||||

export CNI_VERSION=v0.8.5

|

||||

|

||||

sudo mkdir -p /opt/cni/bin

|

||||

curl -sSL https://github.com/containernetworking/plugins/releases/download/${CNI_VERSION}/cni-plugins-linux-${ARCH}-${CNI_VERSION}.tgz | sudo tar -xz -C /opt/cni/bin

|

||||

```

|

||||

|

||||

#### Clone faasd and its systemd unit files

|

||||

|

||||

```sh

|

||||

@ -168,32 +213,35 @@ git clone https://github.com/openfaas/faasd

|

||||

cd $GOPATH/src/github.com/openfaas/faasd

|

||||

cd faasd

|

||||

make local

|

||||

|

||||

# Install the binary

|

||||

sudo cp bin/faasd /usr/local/bin

|

||||

```

|

||||

|

||||

#### Build and run `faasd` (binaries)

|

||||

#### Or, download and run `faasd` (binaries)

|

||||

|

||||

```sh

|

||||

# For x86_64

|

||||

sudo curl -fSLs "https://github.com/openfaas/faasd/releases/download/0.8.0/faasd" \

|

||||

-o "/usr/local/bin/faasd" \

|

||||

&& sudo chmod a+x "/usr/local/bin/faasd"

|

||||

export SUFFIX=""

|

||||

|

||||

# armhf

|

||||

sudo curl -fSLs "https://github.com/openfaas/faasd/releases/download/0.8.0/faasd-armhf" \

|

||||

-o "/usr/local/bin/faasd" \

|

||||

&& sudo chmod a+x "/usr/local/bin/faasd"

|

||||

export SUFFIX="-armhf"

|

||||

|

||||

# arm64

|

||||

sudo curl -fSLs "https://github.com/openfaas/faasd/releases/download/0.8.0/faasd-arm64" \

|

||||

-o "/usr/local/bin/faasd" \

|

||||

&& sudo chmod a+x "/usr/local/bin/faasd"

|

||||

export SUFFIX="-arm64"

|

||||

|

||||

# Then download

|

||||

curl -fSLs "https://github.com/openfaas/faasd/releases/download/0.8.2/faasd$SUFFIX" \

|

||||

-o "/tmp/faasd" \

|

||||

&& chmod +x "/tmp/faasd"

|

||||

sudo mv /tmp/faasd /usr/local/bin/

|

||||

```

|

||||

|

||||

#### Install `faasd`

|

||||

|

||||

This step installs faasd as a systemd unit file, creates files in `/var/lib/faasd`, and writes out networking configuration for the CNI bridge networking plugin.

|

||||

|

||||

```sh

|

||||

# Install with systemd

|

||||

sudo cp bin/faasd /usr/local/bin

|

||||

sudo faasd install

|

||||

|

||||

2020/02/17 17:38:06 Writing to: "/var/lib/faasd/secrets/basic-auth-password"

|

||||

|

||||

141

docs/MULTIPASS.md

Normal file

@ -0,0 +1,141 @@

|

||||

# Tutorial - faasd with multipass

|

||||

|

||||

## Get up and running with your own faasd installation on your Mac

|

||||

|

||||

[multipass from Canonical](https://multipass.run) is like Docker Desktop, but for getting Ubuntu instead of a Docker daemon. It works on macOS, Linux, and Windows with the same consistent UX. It's not fully open-source, and uses some proprietary add-ons / binaries, but is free to use.

|

||||

|

||||

For Linux using Ubuntu, you can install the packages directly, or use `sudo snap install multipass --classic` and follow this tutorial. For Raspberry Pi, [see my tutorial here](https://blog.alexellis.io/faasd-for-lightweight-serverless/).

|

||||

|

||||

John McCabe has also tested faasd on Windows with multipass, [see his tweet](https://twitter.com/mccabejohn/status/1221899154672308224).

|

||||

|

||||

## Use-case:

|

||||

|

||||

Try out [faasd](https://github.com/openfaas/faasd) in a single command using a cloud-config file to get a VM which has:

|

||||

|

||||

* port 22 for administration and

|

||||

* port 8080 for the OpenFaaS REST API.

|

||||

|

||||

|

||||

|

||||

The above screenshot is [from my tweet](https://twitter.com/alexellisuk/status/1221408788395298819/), feel free to comment there.

|

||||

|

||||

It took me about 2-3 minutes to run through everything after installing multipass.

|

||||

|

||||

## Let's start the tutorial

|

||||

|

||||

* Get [multipass.run](https://multipass.run)

|

||||

|

||||

* Get my cloud-config.txt file

|

||||

|

||||

```sh

|

||||

curl -sSLO https://raw.githubusercontent.com/openfaas/faasd/master/cloud-config.txt

|

||||

```

|

||||

|

||||

* Update the SSH key to match your own, edit `cloud-config.txt`:

|

||||

|

||||

Replace the 2nd line with the contents of `~/.ssh/id_rsa.pub`:

|

||||

|

||||

```

|

||||

ssh_authorized_keys:

|

||||

- ssh-rsa AAAAB3NzaC1yc2EAAAADAQABAAABAQC8Q/aUYUr3P1XKVucnO9mlWxOjJm+K01lHJR90MkHC9zbfTqlp8P7C3J26zKAuzHXOeF+VFxETRr6YedQKW9zp5oP7sN+F2gr/pO7GV3VmOqHMV7uKfyUQfq7H1aVzLfCcI7FwN2Zekv3yB7kj35pbsMa1Za58aF6oHRctZU6UWgXXbRxP+B04DoVU7jTstQ4GMoOCaqYhgPHyjEAS3DW0kkPW6HzsvJHkxvVcVlZ/wNJa1Ie/yGpzOzWIN0Ol0t2QT/RSWOhfzO1A2P0XbPuZ04NmriBonO9zR7T1fMNmmtTuK7WazKjQT3inmYRAqU6pe8wfX8WIWNV7OowUjUsv alex@alexr.local

|

||||

```

|

||||

|

||||

* Boot the VM

|

||||

|

||||

```sh

|

||||

multipass launch --cloud-init cloud-config.txt --name faasd

|

||||

```

|

||||

|

||||

* Get the VM's IP and connect with `ssh`

|

||||

|

||||

```sh

|

||||

multipass info faasd

|

||||

Name: faasd

|

||||

State: Running

|

||||

IPv4: 192.168.64.14

|

||||

Release: Ubuntu 18.04.3 LTS

|

||||

Image hash: a720c34066dc (Ubuntu 18.04 LTS)

|

||||

Load: 0.79 0.19 0.06

|

||||

Disk usage: 1.1G out of 4.7G

|

||||

Memory usage: 145.6M out of 985.7M

|

||||

```

|

||||

|

||||

Set the variable `IP`:

|

||||

|

||||

```

|

||||

export IP="192.168.64.14"

|

||||

```

|

||||

|

||||

You can also try to use `jq` to get the IP into a variable:

|

||||

|

||||

```sh

|

||||

export IP=$(multipass info faasd --format json| jq '.info.faasd.ipv4[0]' | tr -d '\"')

|

||||

```

|

||||

|

||||

Connect to the IP listed:

|

||||

|

||||

```sh

|

||||

ssh ubuntu@$IP

|

||||

```

|

||||

|

||||

Log out once you know it works.

|

||||

|

||||

* Let's capture the authentication password into a file for use with `faas-cli`

|

||||

|

||||

```

|

||||

ssh ubuntu@$IP "sudo cat /var/lib/faasd/secrets/basic-auth-password" > basic-auth-password

|

||||

```

|

||||

|

||||

## Try faasd (OpenFaaS)

|

||||

|

||||

* Login from your laptop (the host)

|

||||

|

||||

```

|

||||

export OPENFAAS_URL=http://$IP:8080

|

||||

cat basic-auth-password | faas-cli login -s

|

||||

```

|

||||

|

||||

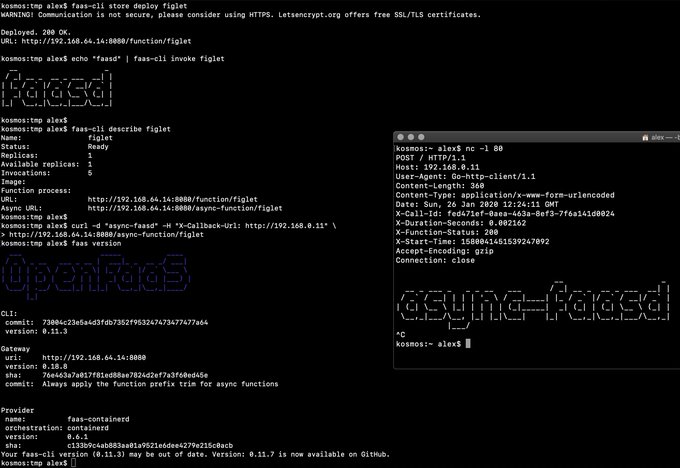

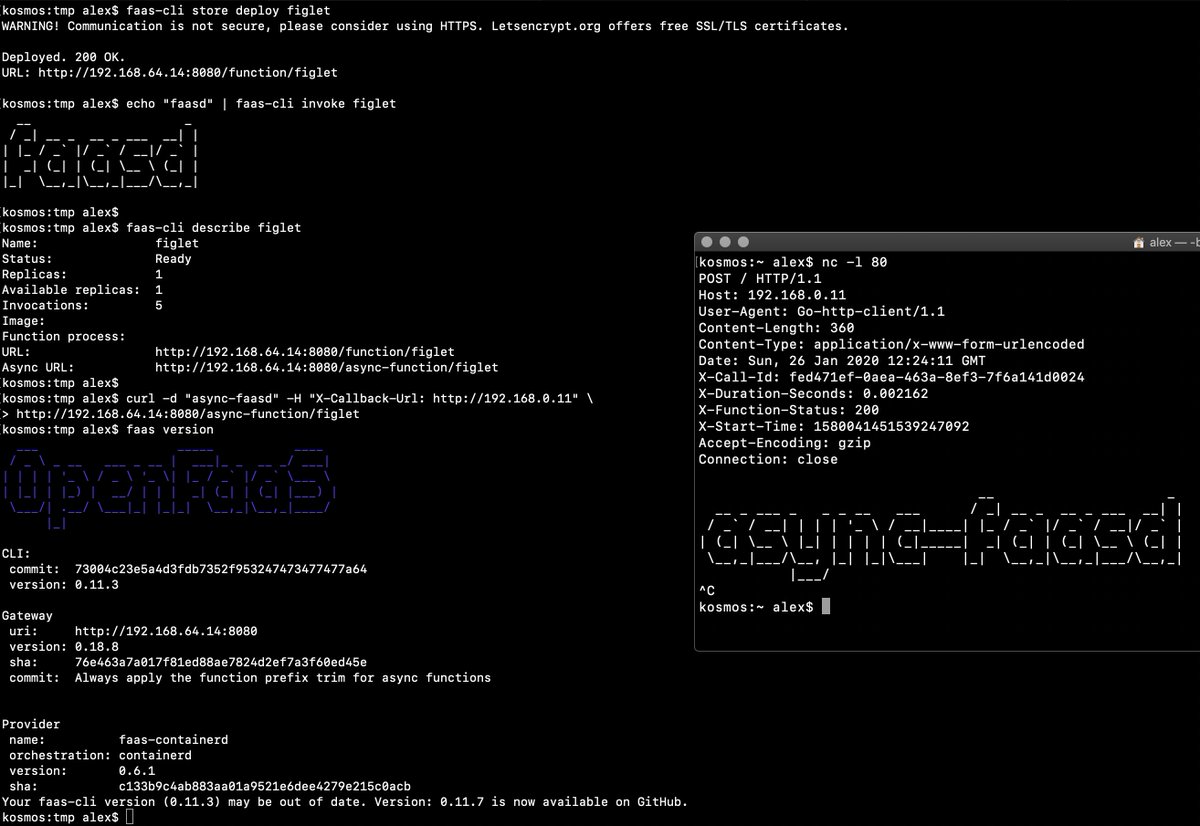

* Deploy a function and invoke it

|

||||

|

||||

```

|

||||

faas-cli store deploy figlet --env write_timeout=1s

|

||||

echo "faasd" | faas-cli invoke figlet

|

||||

|

||||

faas-cli describe figlet

|

||||

|

||||

# Run async

|

||||

curl -i -d "faasd-async" $OPENFAAS_URL/async-function/figlet

|

||||

|

||||

# Run async with a callback

|

||||

|

||||

curl -i -d "faasd-async" -H "X-Callback-Url: http://some-request-bin.com/path" $OPENFAAS_URL/async-function/figlet

|

||||

```

|

||||

|

||||

You can also check out the other store functions: `faas-cli store list`

|

||||

|

||||

* Try the UI

|

||||

|

||||

Head over to the UI from your laptop and remember that your password is in the `basic-auth-password` file. The username is `admin`:

|

||||

|

||||

```

|

||||

echo http://$IP:8080

|

||||

```

|

||||

|

||||

* Stop/start the instance

|

||||

|

||||

```sh

|

||||

multipass stop faasd

|

||||

```

|

||||

|

||||

* Delete, if you want to:

|

||||

|

||||

```

|

||||

multipass delete --purge faasd

|

||||

```

|

||||

|

||||

You now have a faasd appliance on your Mac. You can also use this cloud-init file with public cloud like AWS or DigitalOcean.

|

||||

|

||||

* If you want a public IP for your faasd VM, then just head over to [inlets.dev](https://inlets.dev/)

|

||||

* Try my more complete walk-through / tutorial with Raspberry Pi, or run the same steps on your multipass VM, including how to develop your own functions and services - https://blog.alexellis.io/faasd-for-lightweight-serverless/

|

||||

* You might also like [Building containers without Docker](https://blog.alexellis.io/building-containers-without-docker/)

|

||||

* Star/fork [faasd](https://github.com/openfaas/faasd) on GitHub

|

||||

3

docs/bootstrap/.gitignore

vendored

Normal file

@ -0,0 +1,3 @@

|

||||

/.terraform/

|

||||

/terraform.tfstate

|

||||

/terraform.tfstate.backup

|

||||

20

docs/bootstrap/README.md

Normal file

20

docs/bootstrap/README.md