mirror of

https://github.com/openfaas/faasd.git

synced 2025-06-18 12:06:36 +00:00

Compare commits

119 Commits

bidirectio

...

0.9.2

| Author | SHA1 | Date | |

|---|---|---|---|

| 5b92e7793d | |||

| 88f1aa0433 | |||

| 2b9efd29a0 | |||

| db5312158c | |||

| 26debca616 | |||

| 50de0f34bb | |||

| d64edeb648 | |||

| 42b9cc6b71 | |||

| 25c553a87c | |||

| 8bc39f752e | |||

| cbff6fa8f6 | |||

| 3e29408518 | |||

| 04f1807d92 | |||

| 35e017b526 | |||

| e54da61283 | |||

| 84353d0cae | |||

| e33a60862d | |||

| 7b67ff22e6 | |||

| 19abc9f7b9 | |||

| 480f566819 | |||

| cece6cf1ef | |||

| 22882e2643 | |||

| 667d74aaf7 | |||

| 9dcdbfb7e3 | |||

| 3a9b81200e | |||

| 734425de25 | |||

| 70e7e0d25a | |||

| be8574ecd0 | |||

| a0110b3019 | |||

| 87c71b090f | |||

| dc8667d36a | |||

| 137d199cb5 | |||

| 560c295eb0 | |||

| 93325b713e | |||

| 2307fc71c5 | |||

| 853830c018 | |||

| 262770a0b7 | |||

| 0efb6d492f | |||

| 27cfe465ca | |||

| d6c4ebaf96 | |||

| e9d1423315 | |||

| 4bca5c36a5 | |||

| 10e7a2f07c | |||

| 4775a9a77c | |||

| e07186ed5b | |||

| 2454c2a807 | |||

| 8bd2ba5334 | |||

| c379b0ebcc | |||

| 226a20c362 | |||

| 02c9dcf74d | |||

| 0b88fc232d | |||

| fcd1c9ab54 | |||

| 592f3d3cc0 | |||

| b06364c3f4 | |||

| 75fd07797c | |||

| 65c2cb0732 | |||

| 44df1cef98 | |||

| 881f5171ee | |||

| 970015ac85 | |||

| 283e8ed2c1 | |||

| d49011702b | |||

| eb369fbb16 | |||

| 040b426a19 | |||

| 251cb2d08a | |||

| 5c48ac1a70 | |||

| 7c166979c9 | |||

| 36843ad1d4 | |||

| 3bc041ba04 | |||

| dd3f9732b4 | |||

| 6c10d18f59 | |||

| 969fc566e1 | |||

| a4710db664 | |||

| df2de7ee5c | |||

| 2d8b2b1f73 | |||

| 6e5bc27d9a | |||

| 2eb1df9517 | |||

| c133b9c4ab | |||

| f09028e451 | |||

| bacf8ebad5 | |||

| d551721649 | |||

| 42e9c91ee9 | |||

| cda1fe78b1 | |||

| a3392634a7 | |||

| 95e278b29a | |||

| d802ba70c1 | |||

| cd76ff3ebc | |||

| ed5110de30 | |||

| 2f3ba1335c | |||

| 24e065965f | |||

| fd2ee55f9f | |||

| d135999d3b | |||

| 3068d03279 | |||

| fd4f53fe15 | |||

| 4b93ccba3f | |||

| e6b814fd60 | |||

| 06890cddb9 | |||

| 40da0a35c3 | |||

| 0c0d05b2ea | |||

| af46f34003 | |||

| 2f7269fc97 | |||

| 1f56c39675 | |||

| 7152b170bb | |||

| 47955954eb | |||

| 7f672f006a | |||

| 20ec76bf74 | |||

| 04d1688bfb | |||

| a8f514f7d6 | |||

| 502d3fdecc | |||

| 5d098c9cb7 | |||

| 0935dc6867 | |||

| e77d05ec94 | |||

| 7ab69b5317 | |||

| 098baba7cc | |||

| 9d688b9ea6 | |||

| 1458a2f2fb | |||

| c18a038062 | |||

| af0555a85b | |||

| 2ff8646669 | |||

| d785bebf4c |

2

.gitattributes

vendored

Normal file

2

.gitattributes

vendored

Normal file

@ -0,0 +1,2 @@

|

||||

vendor/** linguist-generated=true

|

||||

Gopkg.lock linguist-generated=true

|

||||

1

.github/CODEOWNERS

vendored

Normal file

1

.github/CODEOWNERS

vendored

Normal file

@ -0,0 +1 @@

|

||||

@alexellis

|

||||

41

.github/ISSUE_TEMPLATE.md

vendored

Normal file

41

.github/ISSUE_TEMPLATE.md

vendored

Normal file

@ -0,0 +1,41 @@

|

||||

<!--- Provide a general summary of the issue in the Title above -->

|

||||

|

||||

## Expected Behaviour

|

||||

<!--- If you're describing a bug, tell us what should happen -->

|

||||

<!--- If you're suggesting a change/improvement, tell us how it should work -->

|

||||

|

||||

## Current Behaviour

|

||||

<!--- If describing a bug, tell us what happens instead of the expected behavior -->

|

||||

<!--- If suggesting a change/improvement, explain the difference from current behavior -->

|

||||

|

||||

## Possible Solution

|

||||

<!--- Not obligatory, but suggest a fix/reason for the bug, -->

|

||||

<!--- or ideas how to implement the addition or change -->

|

||||

|

||||

## Steps to Reproduce (for bugs)

|

||||

<!--- Provide a link to a live example, or an unambiguous set of steps to -->

|

||||

<!--- reproduce this bug. Include code to reproduce, if relevant -->

|

||||

1.

|

||||

2.

|

||||

3.

|

||||

4.

|

||||

|

||||

## Context

|

||||

<!--- How has this issue affected you? What are you trying to accomplish? -->

|

||||

<!--- Providing context helps us come up with a solution that is most useful in the real world -->

|

||||

|

||||

## Your Environment

|

||||

|

||||

* OS and architecture:

|

||||

|

||||

* Versions:

|

||||

|

||||

```sh

|

||||

go version

|

||||

|

||||

containerd -version

|

||||

|

||||

uname -a

|

||||

|

||||

cat /etc/os-release

|

||||

```

|

||||

40

.github/PULL_REQUEST_TEMPLATE.md

vendored

Normal file

40

.github/PULL_REQUEST_TEMPLATE.md

vendored

Normal file

@ -0,0 +1,40 @@

|

||||

<!--- Provide a general summary of your changes in the Title above -->

|

||||

|

||||

## Description

|

||||

<!--- Describe your changes in detail -->

|

||||

|

||||

## Motivation and Context

|

||||

<!--- Why is this change required? What problem does it solve? -->

|

||||

<!--- If it fixes an open issue, please link to the issue here. -->

|

||||

- [ ] I have raised an issue to propose this change **this is required**

|

||||

|

||||

|

||||

## How Has This Been Tested?

|

||||

<!--- Please describe in detail how you tested your changes. -->

|

||||

<!--- Include details of your testing environment, and the tests you ran to -->

|

||||

<!--- see how your change affects other areas of the code, etc. -->

|

||||

|

||||

|

||||

## Types of changes

|

||||

<!--- What types of changes does your code introduce? Put an `x` in all the boxes that apply: -->

|

||||

- [ ] Bug fix (non-breaking change which fixes an issue)

|

||||

- [ ] New feature (non-breaking change which adds functionality)

|

||||

- [ ] Breaking change (fix or feature that would cause existing functionality to change)

|

||||

|

||||

## Checklist:

|

||||

|

||||

Commits:

|

||||

|

||||

- [ ] I've read the [CONTRIBUTION](https://github.com/openfaas/faas/blob/master/CONTRIBUTING.md) guide

|

||||

- [ ] My commit message has a body and describe how this was tested and why it is required.

|

||||

- [ ] I have signed-off my commits with `git commit -s` for the Developer Certificate of Origin (DCO)

|

||||

|

||||

Code:

|

||||

|

||||

- [ ] My code follows the code style of this project.

|

||||

- [ ] I have added tests to cover my changes.

|

||||

|

||||

Docs:

|

||||

|

||||

- [ ] My change requires a change to the documentation.

|

||||

- [ ] I have updated the documentation accordingly.

|

||||

3

.gitignore

vendored

3

.gitignore

vendored

@ -5,3 +5,6 @@ hosts

|

||||

|

||||

basic-auth-user

|

||||

basic-auth-password

|

||||

/bin

|

||||

/secrets

|

||||

.vscode

|

||||

|

||||

17

.travis.yml

17

.travis.yml

@ -1,9 +1,20 @@

|

||||

sudo: required

|

||||

language: go

|

||||

|

||||

go:

|

||||

- '1.12'

|

||||

- '1.13'

|

||||

|

||||

addons:

|

||||

apt:

|

||||

packages:

|

||||

- runc

|

||||

|

||||

script:

|

||||

- make test

|

||||

- make dist

|

||||

- make prepare-test

|

||||

- make test-e2e

|

||||

|

||||

deploy:

|

||||

provider: releases

|

||||

api_key:

|

||||

@ -16,5 +27,5 @@ deploy:

|

||||

on:

|

||||

tags: true

|

||||

|

||||

env:

|

||||

- GO111MODULE=off

|

||||

env: GO111MODULE=off

|

||||

|

||||

|

||||

231

Gopkg.lock

generated

231

Gopkg.lock

generated

@ -13,7 +13,7 @@

|

||||

version = "v0.4.14"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:b28f788c0be42a6d26f07b282c5ff5f814ab7ad5833810ef0bc5f56fb9bedf11"

|

||||

digest = "1:f06a14a8b60a7a9cdbf14ed52272faf4ff5de4ed7c784ff55b64995be98ac59f"

|

||||

name = "github.com/Microsoft/hcsshim"

|

||||

packages = [

|

||||

".",

|

||||

@ -33,28 +33,44 @@

|

||||

"internal/timeout",

|

||||

"internal/vmcompute",

|

||||

"internal/wclayer",

|

||||

"osversion",

|

||||

]

|

||||

pruneopts = "UT"

|

||||

revision = "9e921883ac929bbe515b39793ece99ce3a9d7706"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:74860eb071d52337d67e9ffd6893b29affebd026505aa917ec23131576a91a77"

|

||||

digest = "1:d7086e6a64a9e4fa54aaf56ce42ead0be1300b0285604c4d306438880db946ad"

|

||||

name = "github.com/alexellis/go-execute"

|

||||

packages = ["pkg/v1"]

|

||||

pruneopts = "UT"

|

||||

revision = "961405ea754427780f2151adff607fa740d377f7"

|

||||

version = "0.3.0"

|

||||

revision = "8697e4e28c5e3ce441ff8b2b6073035606af2fe9"

|

||||

version = "0.4.0"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:6076d857867a70e87dd1994407deb142f27436f1293b13e75cc053192d14eb0c"

|

||||

digest = "1:345f6fa182d72edfa3abc493881c3fa338a464d93b1e2169cda9c822fde31655"

|

||||

name = "github.com/alexellis/k3sup"

|

||||

packages = ["pkg/env"]

|

||||

pruneopts = "UT"

|

||||

revision = "f9a4adddc732742a9ee7962609408fb0999f2d7b"

|

||||

version = "0.7.1"

|

||||

revision = "629c0bc6b50f71ab93a1fbc8971a5bd05dc581eb"

|

||||

version = "0.9.3"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:386ca0ac781cc1b630b3ed21725759770174140164b3faf3810e6ed6366a970b"

|

||||

branch = "master"

|

||||

digest = "1:cda177c07c87c648b1aaa37290717064a86d337a5dc6b317540426872d12de52"

|

||||

name = "github.com/compose-spec/compose-go"

|

||||

packages = [

|

||||

"envfile",

|

||||

"interpolation",

|

||||

"loader",

|

||||

"schema",

|

||||

"template",

|

||||

"types",

|

||||

]

|

||||

pruneopts = "UT"

|

||||

revision = "36d8ce368e05d2ae83c86b2987f20f7c20d534a6"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:cf83a14c8042951b0dcd74758fc32258111ecc7838cbdf5007717172cab9ca9b"

|

||||

name = "github.com/containerd/containerd"

|

||||

packages = [

|

||||

".",

|

||||

@ -102,6 +118,7 @@

|

||||

"remotes/docker/schema1",

|

||||

"rootfs",

|

||||

"runtime/linux/runctypes",

|

||||

"runtime/v2/logging",

|

||||

"runtime/v2/runc/options",

|

||||

"snapshots",

|

||||

"snapshots/proxy",

|

||||

@ -113,10 +130,11 @@

|

||||

version = "v1.3.2"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:7e9da25c7a952c63e31ed367a88eede43224b0663b58eb452870787d8ddb6c70"

|

||||

digest = "1:e4414857969cfbe45c7dab0a012aad4855bf7167c25d672a182cb18676424a0c"

|

||||

name = "github.com/containerd/continuity"

|

||||

packages = [

|

||||

"fs",

|

||||

"pathdriver",

|

||||

"syscallx",

|

||||

"sysx",

|

||||

]

|

||||

@ -130,6 +148,14 @@

|

||||

pruneopts = "UT"

|

||||

revision = "bda0ff6ed73c67bfb5e62bc9c697f146b7fd7f13"

|

||||

|

||||

[[projects]]

|

||||

branch = "master"

|

||||

digest = "1:2301a9a859e3b0946e2ddd6961ba6faf6857e6e68bc9293db758dbe3b17cc35e"

|

||||

name = "github.com/containerd/go-cni"

|

||||

packages = ["."]

|

||||

pruneopts = "UT"

|

||||

revision = "c154a49e2c754b83ebfb12ebf1362213b94d23e6"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:6d66a41dbbc6819902f1589d0550bc01c18032c0a598a7cd656731e6df73861b"

|

||||

name = "github.com/containerd/ttrpc"

|

||||

@ -144,6 +170,42 @@

|

||||

pruneopts = "UT"

|

||||

revision = "a93fcdb778cd272c6e9b3028b2f42d813e785d40"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:1a07bbfee1d0534e8dda4773948e6dcd3a061ea7ab047ce04619476946226483"

|

||||

name = "github.com/containernetworking/cni"

|

||||

packages = [

|

||||

"libcni",

|

||||

"pkg/invoke",

|

||||

"pkg/types",

|

||||

"pkg/types/020",

|

||||

"pkg/types/current",

|

||||

"pkg/version",

|

||||

]

|

||||

pruneopts = "UT"

|

||||

revision = "4cfb7b568922a3c79a23e438dc52fe537fc9687e"

|

||||

version = "v0.7.1"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:bcf36df8d43860bfde913d008301aef27c6e9a303582118a837c4a34c0d18167"

|

||||

name = "github.com/coreos/go-systemd"

|

||||

packages = ["journal"]

|

||||

pruneopts = "UT"

|

||||

revision = "d3cd4ed1dbcf5835feba465b180436db54f20228"

|

||||

version = "v21"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:92ebc9c068ab8e3fff03a58694ee33830964f6febd0130069aadce328802de14"

|

||||

name = "github.com/docker/cli"

|

||||

packages = [

|

||||

"cli/config",

|

||||

"cli/config/configfile",

|

||||

"cli/config/credentials",

|

||||

"cli/config/types",

|

||||

]

|

||||

pruneopts = "UT"

|

||||

revision = "99c5edceb48d64c1aa5d09b8c9c499d431d98bb9"

|

||||

version = "v19.03.5"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:e495f9f1fb2bae55daeb76e099292054fe1f734947274b3cfc403ccda595d55a"

|

||||

name = "github.com/docker/distribution"

|

||||

@ -155,6 +217,38 @@

|

||||

pruneopts = "UT"

|

||||

revision = "0d3efadf0154c2b8a4e7b6621fff9809655cc580"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:10f9c98f627e9697ec23b7973a683324f1d901dd9bace4a71405c0b2ec554303"

|

||||

name = "github.com/docker/docker"

|

||||

packages = [

|

||||

"pkg/homedir",

|

||||

"pkg/idtools",

|

||||

"pkg/mount",

|

||||

"pkg/system",

|

||||

]

|

||||

pruneopts = "UT"

|

||||

revision = "ea84732a77251e0d7af278e2b7df1d6a59fca46b"

|

||||

version = "v19.03.5"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:9f3f49b4e32d3da2dd6ed07cc568627b53cc80205c0dcf69f4091f027416cb60"

|

||||

name = "github.com/docker/docker-credential-helpers"

|

||||

packages = [

|

||||

"client",

|

||||

"credentials",

|

||||

]

|

||||

pruneopts = "UT"

|

||||

revision = "54f0238b6bf101fc3ad3b34114cb5520beb562f5"

|

||||

version = "v0.6.3"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:ade935c55cd6d0367c843b109b09c9d748b1982952031414740750fdf94747eb"

|

||||

name = "github.com/docker/go-connections"

|

||||

packages = ["nat"]

|

||||

pruneopts = "UT"

|

||||

revision = "7395e3f8aa162843a74ed6d48e79627d9792ac55"

|

||||

version = "v0.4.0"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:0938aba6e09d72d48db029d44dcfa304851f52e2d67cda920436794248e92793"

|

||||

name = "github.com/docker/go-events"

|

||||

@ -162,6 +256,14 @@

|

||||

pruneopts = "UT"

|

||||

revision = "9461782956ad83b30282bf90e31fa6a70c255ba9"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:e95ef557dc3120984bb66b385ae01b4bb8ff56bcde28e7b0d1beed0cccc4d69f"

|

||||

name = "github.com/docker/go-units"

|

||||

packages = ["."]

|

||||

pruneopts = "UT"

|

||||

revision = "519db1ee28dcc9fd2474ae59fca29a810482bfb1"

|

||||

version = "v0.4.0"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:fa6faf4a2977dc7643de38ae599a95424d82f8ffc184045510737010a82c4ecd"

|

||||

name = "github.com/gogo/googleapis"

|

||||

@ -204,6 +306,22 @@

|

||||

revision = "6c65a5562fc06764971b7c5d05c76c75e84bdbf7"

|

||||

version = "v1.3.2"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:cbec35fe4d5a4fba369a656a8cd65e244ea2c743007d8f6c1ccb132acf9d1296"

|

||||

name = "github.com/gorilla/mux"

|

||||

packages = ["."]

|

||||

pruneopts = "UT"

|

||||

revision = "00bdffe0f3c77e27d2cf6f5c70232a2d3e4d9c15"

|

||||

version = "v1.7.3"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:1a7059d684f8972987e4b6f0703083f207d63f63da0ea19610ef2e6bb73db059"

|

||||

name = "github.com/imdario/mergo"

|

||||

packages = ["."]

|

||||

pruneopts = "UT"

|

||||

revision = "66f88b4ae75f5edcc556623b96ff32c06360fbb7"

|

||||

version = "v0.3.9"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:870d441fe217b8e689d7949fef6e43efbc787e50f200cb1e70dbca9204a1d6be"

|

||||

name = "github.com/inconshreveable/mousetrap"

|

||||

@ -220,6 +338,22 @@

|

||||

revision = "f55edac94c9bbba5d6182a4be46d86a2c9b5b50e"

|

||||

version = "v1.0.2"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:528e84b49342ec33c96022f8d7dd4c8bd36881798afbb44e2744bda0ec72299c"

|

||||

name = "github.com/mattn/go-shellwords"

|

||||

packages = ["."]

|

||||

pruneopts = "UT"

|

||||

revision = "28e4fdf351f0744b1249317edb45e4c2aa7a5e43"

|

||||

version = "v1.0.10"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:dd34285411cd7599f1fe588ef9451d5237095963ecc85c1212016c6769866306"

|

||||

name = "github.com/mitchellh/mapstructure"

|

||||

packages = ["."]

|

||||

pruneopts = "UT"

|

||||

revision = "20e21c67c4d0e1b4244f83449b7cdd10435ee998"

|

||||

version = "v1.3.1"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:906eb1ca3c8455e447b99a45237b2b9615b665608fd07ad12cce847dd9a1ec43"

|

||||

name = "github.com/morikuni/aec"

|

||||

@ -265,6 +399,29 @@

|

||||

pruneopts = "UT"

|

||||

revision = "29686dbc5559d93fb1ef402eeda3e35c38d75af4"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:cdf3df431e70077f94e14a99305808e3d13e96262b4686154970f448f7248842"

|

||||

name = "github.com/openfaas/faas"

|

||||

packages = ["gateway/requests"]

|

||||

pruneopts = "UT"

|

||||

revision = "80b6976c106370a7081b2f8e9099a6ea9638e1f3"

|

||||

version = "0.18.10"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:4d972c6728f8cbaded7d2ee6349fbe5f9278cabcd51d1ecad97b2e79c72bea9d"

|

||||

name = "github.com/openfaas/faas-provider"

|

||||

packages = [

|

||||

".",

|

||||

"auth",

|

||||

"httputil",

|

||||

"logs",

|

||||

"proxy",

|

||||

"types",

|

||||

]

|

||||

pruneopts = "UT"

|

||||

revision = "db19209aa27f42a9cf6a23448fc2b8c9cc4fbb5d"

|

||||

version = "v0.15.1"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:cf31692c14422fa27c83a05292eb5cbe0fb2775972e8f1f8446a71549bd8980b"

|

||||

name = "github.com/pkg/errors"

|

||||

@ -314,15 +471,15 @@

|

||||

revision = "d98352740cb2c55f81556b63d4a1ec64c5a319c2"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:2d9d06cb9d46dacfdbb45f8575b39fc0126d083841a29d4fbf8d97708f43107e"

|

||||

digest = "1:1314b5ef1c0b25257ea02e454291bf042478a48407cfe3ffea7e20323bbf5fdf"

|

||||

name = "github.com/vishvananda/netlink"

|

||||

packages = [

|

||||

".",

|

||||

"nl",

|

||||

]

|

||||

pruneopts = "UT"

|

||||

revision = "a2ad57a690f3caf3015351d2d6e1c0b95c349752"

|

||||

version = "v1.0.0"

|

||||

revision = "f049be6f391489d3f374498fe0c8df8449258372"

|

||||

version = "v1.1.0"

|

||||

|

||||

[[projects]]

|

||||

branch = "master"

|

||||

@ -332,6 +489,30 @@

|

||||

pruneopts = "UT"

|

||||

revision = "0a2b9b5464df8343199164a0321edf3313202f7e"

|

||||

|

||||

[[projects]]

|

||||

branch = "master"

|

||||

digest = "1:87fe9bca786484cef53d52adeec7d1c52bc2bfbee75734eddeb75fc5c7023871"

|

||||

name = "github.com/xeipuuv/gojsonpointer"

|

||||

packages = ["."]

|

||||

pruneopts = "UT"

|

||||

revision = "02993c407bfbf5f6dae44c4f4b1cf6a39b5fc5bb"

|

||||

|

||||

[[projects]]

|

||||

branch = "master"

|

||||

digest = "1:dc6a6c28ca45d38cfce9f7cb61681ee38c5b99ec1425339bfc1e1a7ba769c807"

|

||||

name = "github.com/xeipuuv/gojsonreference"

|

||||

packages = ["."]

|

||||

pruneopts = "UT"

|

||||

revision = "bd5ef7bd5415a7ac448318e64f11a24cd21e594b"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:a8a0ed98532819a3b0dc5cf3264a14e30aba5284b793ba2850d6f381ada5f987"

|

||||

name = "github.com/xeipuuv/gojsonschema"

|

||||

packages = ["."]

|

||||

pruneopts = "UT"

|

||||

revision = "82fcdeb203eb6ab2a67d0a623d9c19e5e5a64927"

|

||||

version = "v1.2.0"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:aed53a5fa03c1270457e331cf8b7e210e3088a2278fec552c5c5d29c1664e161"

|

||||

name = "go.opencensus.io"

|

||||

@ -460,22 +641,48 @@

|

||||

revision = "6eaf6f47437a6b4e2153a190160ef39a92c7eceb"

|

||||

version = "v1.23.0"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:d7f1bd887dc650737a421b872ca883059580e9f8314d601f88025df4f4802dce"

|

||||

name = "gopkg.in/yaml.v2"

|

||||

packages = ["."]

|

||||

pruneopts = "UT"

|

||||

revision = "0b1645d91e851e735d3e23330303ce81f70adbe3"

|

||||

version = "v2.3.0"

|

||||

|

||||

[solve-meta]

|

||||

analyzer-name = "dep"

|

||||

analyzer-version = 1

|

||||

input-imports = [

|

||||

"github.com/alexellis/go-execute/pkg/v1",

|

||||

"github.com/alexellis/k3sup/pkg/env",

|

||||

"github.com/compose-spec/compose-go/loader",

|

||||

"github.com/compose-spec/compose-go/types",

|

||||

"github.com/containerd/containerd",

|

||||

"github.com/containerd/containerd/cio",

|

||||

"github.com/containerd/containerd/containers",

|

||||

"github.com/containerd/containerd/errdefs",

|

||||

"github.com/containerd/containerd/namespaces",

|

||||

"github.com/containerd/containerd/oci",

|

||||

"github.com/containerd/containerd/remotes",

|

||||

"github.com/containerd/containerd/remotes/docker",

|

||||

"github.com/containerd/containerd/runtime/v2/logging",

|

||||

"github.com/containerd/go-cni",

|

||||

"github.com/coreos/go-systemd/journal",

|

||||

"github.com/docker/cli/cli/config",

|

||||

"github.com/docker/cli/cli/config/configfile",

|

||||

"github.com/docker/distribution/reference",

|

||||

"github.com/gorilla/mux",

|

||||

"github.com/morikuni/aec",

|

||||

"github.com/opencontainers/runtime-spec/specs-go",

|

||||

"github.com/openfaas/faas-provider",

|

||||

"github.com/openfaas/faas-provider/logs",

|

||||

"github.com/openfaas/faas-provider/proxy",

|

||||

"github.com/openfaas/faas-provider/types",

|

||||

"github.com/openfaas/faas/gateway/requests",

|

||||

"github.com/pkg/errors",

|

||||

"github.com/sethvargo/go-password/password",

|

||||

"github.com/spf13/cobra",

|

||||

"github.com/spf13/pflag",

|

||||

"github.com/vishvananda/netlink",

|

||||

"github.com/vishvananda/netns",

|

||||

"golang.org/x/sys/unix",

|

||||

|

||||

30

Gopkg.toml

30

Gopkg.toml

@ -1,3 +1,7 @@

|

||||

[prune]

|

||||

go-tests = true

|

||||

unused-packages = true

|

||||

|

||||

[[constraint]]

|

||||

name = "github.com/containerd/containerd"

|

||||

version = "1.3.2"

|

||||

@ -12,16 +16,32 @@

|

||||

|

||||

[[constraint]]

|

||||

name = "github.com/alexellis/k3sup"

|

||||

version = "0.7.1"

|

||||

version = "0.9.3"

|

||||

|

||||

[[constraint]]

|

||||

name = "github.com/alexellis/go-execute"

|

||||

version = "0.3.0"

|

||||

version = "0.4.0"

|

||||

|

||||

[prune]

|

||||

go-tests = true

|

||||

unused-packages = true

|

||||

[[constraint]]

|

||||

name = "github.com/gorilla/mux"

|

||||

version = "1.7.3"

|

||||

|

||||

[[constraint]]

|

||||

name = "github.com/openfaas/faas"

|

||||

version = "0.18.7"

|

||||

|

||||

[[constraint]]

|

||||

name = "github.com/sethvargo/go-password"

|

||||

version = "0.1.3"

|

||||

|

||||

[[constraint]]

|

||||

branch = "master"

|

||||

name = "github.com/containerd/go-cni"

|

||||

|

||||

[[constraint]]

|

||||

name = "github.com/openfaas/faas-provider"

|

||||

version = "v0.15.1"

|

||||

|

||||

[[constraint]]

|

||||

name = "github.com/docker/cli"

|

||||

version = "19.3.5"

|

||||

|

||||

41

Makefile

41

Makefile

@ -1,6 +1,9 @@

|

||||

Version := $(shell git describe --tags --dirty)

|

||||

GitCommit := $(shell git rev-parse HEAD)

|

||||

LDFLAGS := "-s -w -X main.Version=$(Version) -X main.GitCommit=$(GitCommit)"

|

||||

CONTAINERD_VER := 1.3.4

|

||||

CNI_VERSION := v0.8.6

|

||||

ARCH := amd64

|

||||

|

||||

.PHONY: all

|

||||

all: local

|

||||

@ -8,8 +11,46 @@ all: local

|

||||

local:

|

||||

CGO_ENABLED=0 GOOS=linux go build -o bin/faasd

|

||||

|

||||

.PHONY: test

|

||||

test:

|

||||

CGO_ENABLED=0 GOOS=linux go test -ldflags $(LDFLAGS) ./...

|

||||

|

||||

.PHONY: dist

|

||||

dist:

|

||||

CGO_ENABLED=0 GOOS=linux go build -ldflags $(LDFLAGS) -a -installsuffix cgo -o bin/faasd

|

||||

CGO_ENABLED=0 GOOS=linux GOARCH=arm GOARM=7 go build -ldflags $(LDFLAGS) -a -installsuffix cgo -o bin/faasd-armhf

|

||||

CGO_ENABLED=0 GOOS=linux GOARCH=arm64 go build -ldflags $(LDFLAGS) -a -installsuffix cgo -o bin/faasd-arm64

|

||||

|

||||

.PHONY: prepare-test

|

||||

prepare-test:

|

||||

curl -sLSf https://github.com/containerd/containerd/releases/download/v$(CONTAINERD_VER)/containerd-$(CONTAINERD_VER).linux-amd64.tar.gz > /tmp/containerd.tar.gz && sudo tar -xvf /tmp/containerd.tar.gz -C /usr/local/bin/ --strip-components=1

|

||||

curl -SLfs https://raw.githubusercontent.com/containerd/containerd/v1.3.2/containerd.service | sudo tee /etc/systemd/system/containerd.service

|

||||

sudo systemctl daemon-reload && sudo systemctl start containerd

|

||||

sudo /sbin/sysctl -w net.ipv4.conf.all.forwarding=1

|

||||

sudo mkdir -p /opt/cni/bin

|

||||

curl -sSL https://github.com/containernetworking/plugins/releases/download/$(CNI_VERSION)/cni-plugins-linux-$(ARCH)-$(CNI_VERSION).tgz | sudo tar -xz -C /opt/cni/bin

|

||||

sudo cp $(GOPATH)/src/github.com/openfaas/faasd/bin/faasd /usr/local/bin/

|

||||

cd $(GOPATH)/src/github.com/openfaas/faasd/ && sudo /usr/local/bin/faasd install

|

||||

sudo systemctl status -l containerd --no-pager

|

||||

sudo journalctl -u faasd-provider --no-pager

|

||||

sudo systemctl status -l faasd-provider --no-pager

|

||||

sudo systemctl status -l faasd --no-pager

|

||||

curl -sSLf https://cli.openfaas.com | sudo sh

|

||||

echo "Sleeping for 2m" && sleep 120 && sudo journalctl -u faasd --no-pager

|

||||

|

||||

.PHONY: test-e2e

|

||||

test-e2e:

|

||||

sudo cat /var/lib/faasd/secrets/basic-auth-password | /usr/local/bin/faas-cli login --password-stdin

|

||||

/usr/local/bin/faas-cli store deploy figlet --env write_timeout=1s --env read_timeout=1s --label testing=true

|

||||

sleep 5

|

||||

/usr/local/bin/faas-cli list -v

|

||||

/usr/local/bin/faas-cli describe figlet | grep testing

|

||||

uname | /usr/local/bin/faas-cli invoke figlet

|

||||

uname | /usr/local/bin/faas-cli invoke figlet --async

|

||||

sleep 10

|

||||

/usr/local/bin/faas-cli list -v

|

||||

/usr/local/bin/faas-cli remove figlet

|

||||

sleep 3

|

||||

/usr/local/bin/faas-cli list

|

||||

sleep 1

|

||||

/usr/local/bin/faas-cli logs figlet --follow=false | grep Forking

|

||||

|

||||

264

README.md

264

README.md

@ -1,158 +1,168 @@

|

||||

# faasd - serverless with containerd

|

||||

# faasd - lightweight OSS serverless 🐳

|

||||

|

||||

[](https://travis-ci.com/alexellis/faasd)

|

||||

[](https://travis-ci.com/openfaas/faasd)

|

||||

[](https://opensource.org/licenses/MIT)

|

||||

[](https://www.openfaas.com)

|

||||

|

||||

|

||||

faasd is a Golang supervisor that bundles OpenFaaS for use with containerd instead of a container orchestrator like Kubernetes or Docker Swarm.

|

||||

faasd is the same OpenFaaS experience and ecosystem, but without Kubernetes. Functions and microservices can be deployed anywhere with reduced overheads whilst retaining the portability of containers and cloud-native tooling such as containerd and CNI.

|

||||

|

||||

## About faasd:

|

||||

## About faasd

|

||||

|

||||

* faasd is a single Golang binary

|

||||

* faasd is multi-arch, so works on `x86_64`, armhf and arm64

|

||||

* faasd downloads, starts and supervises the core components to run OpenFaaS

|

||||

* is a single Golang binary

|

||||

* can be set-up and left alone to run your applications

|

||||

* is multi-arch, so works on Intel `x86_64` and ARM out the box

|

||||

* uses the same core components and ecosystem of OpenFaaS

|

||||

|

||||

|

||||

|

||||

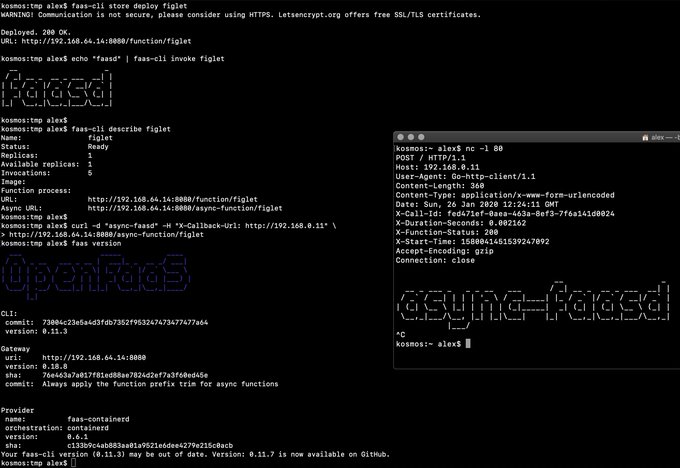

> Demo of faasd running in KVM

|

||||

|

||||

## What does faasd deploy?

|

||||

|

||||

* [faas-containerd](https://github.com/alexellis/faas-containerd/)

|

||||

* [Prometheus](https://github.com/prometheus/prometheus)

|

||||

* [the OpenFaaS gateway](https://github.com/openfaas/faas/tree/master/gateway)

|

||||

* faasd - itself, and its [faas-provider](https://github.com/openfaas/faas-provider) for containerd - CRUD for functions and services, implements the OpenFaaS REST API

|

||||

* [Prometheus](https://github.com/prometheus/prometheus) - for monitoring of services, metrics, scaling and dashboards

|

||||

* [OpenFaaS Gateway](https://github.com/openfaas/faas/tree/master/gateway) - the UI portal, CLI, and other OpenFaaS tooling can talk to this.

|

||||

* [OpenFaaS queue-worker for NATS](https://github.com/openfaas/nats-queue-worker) - run your invocations in the background without adding any code. See also: [asynchronous invocations](https://docs.openfaas.com/reference/triggers/#async-nats-streaming)

|

||||

* [NATS](https://nats.io) for asynchronous processing and queues

|

||||

|

||||

You can use the standard [faas-cli](https://github.com/openfaas/faas-cli) with faasd along with pre-packaged functions in the Function Store, or build your own with the template store.

|

||||

You'll also need:

|

||||

|

||||

### faas-containerd supports:

|

||||

* [CNI](https://github.com/containernetworking/plugins)

|

||||

* [containerd](https://github.com/containerd/containerd)

|

||||

* [runc](https://github.com/opencontainers/runc)

|

||||

|

||||

* `faas list`

|

||||

* `faas describe`

|

||||

* `faas deploy --update=true --replace=false`

|

||||

* `faas invoke`

|

||||

* `faas invoke --async`

|

||||

You can use the standard [faas-cli](https://github.com/openfaas/faas-cli) along with pre-packaged functions from *the Function Store*, or build your own using any OpenFaaS template.

|

||||

|

||||

Other operations are pending development in the provider.

|

||||

## Tutorials

|

||||

|

||||

### Pre-reqs

|

||||

### Get started on DigitalOcean, or any other IaaS

|

||||

|

||||

* Linux - ideally Ubuntu, which is used for testing

|

||||

* Installation steps as per [faas-containerd](https://github.com/alexellis/faas-containerd) for building and for development

|

||||

* [netns](https://github.com/genuinetools/netns/releases) binary in `$PATH`

|

||||

* [containerd v1.3.2](https://github.com/containerd/containerd)

|

||||

* [faas-cli](https://github.com/openfaas/faas-cli) (optional)

|

||||

If your IaaS supports `user_data` aka "cloud-init", then this guide is for you. If not, then checkout the approach and feel free to run each step manually.

|

||||

|

||||

* [Build a Serverless appliance with faasd](https://blog.alexellis.io/deploy-serverless-faasd-with-cloud-init/)

|

||||

|

||||

### Run locally on MacOS, Linux, or Windows with Multipass.run

|

||||

|

||||

* [Get up and running with your own faasd installation on your Mac/Ubuntu or Windows with cloud-config](https://gist.github.com/alexellis/6d297e678c9243d326c151028a3ad7b9)

|

||||

|

||||

### Get started on armhf / Raspberry Pi

|

||||

|

||||

You can run this tutorial on your Raspberry Pi, or adapt the steps for a regular Linux VM/VPS host.

|

||||

|

||||

* [faasd - lightweight Serverless for your Raspberry Pi](https://blog.alexellis.io/faasd-for-lightweight-serverless/)

|

||||

|

||||

### Terraform for DigitalOcean

|

||||

|

||||

Automate everything within < 60 seconds and get a public URL and IP address back. Customise as required, or adapt to your preferred cloud such as AWS EC2.

|

||||

|

||||

* [Provision faasd 0.8.1 on DigitalOcean with Terraform 0.12.0](docs/bootstrap/README.md)

|

||||

|

||||

* [Provision faasd on DigitalOcean with built-in TLS support](docs/bootstrap/digitalocean-terraform/README.md)

|

||||

|

||||

### A note on private repos / registries

|

||||

|

||||

To use private image repos, `~/.docker/config.json` needs to be copied to `/var/lib/faasd/.docker/config.json`.

|

||||

|

||||

If you'd like to set up your own private registry, [see this tutorial](https://blog.alexellis.io/get-a-tls-enabled-docker-registry-in-5-minutes/).

|

||||

|

||||

Beware that running `docker login` on MacOS and Windows may create an empty file with your credentials stored in the system helper.

|

||||

|

||||

Alternatively, use you can use the `registry-login` command from the OpenFaaS Cloud bootstrap tool (ofc-bootstrap):

|

||||

|

||||

```bash

|

||||

curl -sLSf https://raw.githubusercontent.com/openfaas-incubator/ofc-bootstrap/master/get.sh | sudo sh

|

||||

|

||||

ofc-bootstrap registry-login --username <your-registry-username> --password-stdin

|

||||

# (the enter your password and hit return)

|

||||

```

|

||||

The file will be created in `./credentials/`

|

||||

|

||||

### Logs for functions

|

||||

|

||||

You can view the logs of functions using `journalctl`:

|

||||

|

||||

```bash

|

||||

journalctl -t openfaas-fn:FUNCTION_NAME

|

||||

|

||||

|

||||

faas-cli store deploy figlet

|

||||

journalctl -t openfaas-fn:figlet -f &

|

||||

echo logs | faas-cli invoke figlet

|

||||

```

|

||||

|

||||

### Manual / developer instructions

|

||||

|

||||

See [here for manual / developer instructions](docs/DEV.md)

|

||||

|

||||

## Getting help

|

||||

|

||||

### Docs

|

||||

|

||||

The [OpenFaaS docs](https://docs.openfaas.com/) provide a wealth of information and are kept up to date with new features.

|

||||

|

||||

### Function and template store

|

||||

|

||||

For community functions see `faas-cli store --help`

|

||||

|

||||

For templates built by the community see: `faas-cli template store list`, you can also use the `dockerfile` template if you just want to migrate an existing service without the benefits of using a template.

|

||||

|

||||

### Workshop

|

||||

|

||||

[The OpenFaaS workshop](https://github.com/openfaas/workshop/) is a set of 12 self-paced labs and provides a great starting point

|

||||

|

||||

### Community support

|

||||

|

||||

An active community of almost 3000 users awaits you on Slack. Over 250 of those users are also contributors and help maintain the code.

|

||||

|

||||

* [Join Slack](https://slack.openfaas.io/)

|

||||

|

||||

## Backlog

|

||||

|

||||

### Supported operations

|

||||

|

||||

* `faas login`

|

||||

* `faas up`

|

||||

* `faas list`

|

||||

* `faas describe`

|

||||

* `faas deploy --update=true --replace=false`

|

||||

* `faas invoke --async`

|

||||

* `faas invoke`

|

||||

* `faas rm`

|

||||

* `faas store list/deploy/inspect`

|

||||

* `faas version`

|

||||

* `faas namespace`

|

||||

* `faas secret`

|

||||

* `faas logs`

|

||||

|

||||

Scale from and to zero is also supported. On a Dell XPS with a small, pre-pulled image unpausing an existing task took 0.19s and starting a task for a killed function took 0.39s. There may be further optimizations to be gained.

|

||||

|

||||

Other operations are pending development in the provider such as:

|

||||

|

||||

* `faas auth` - supported for Basic Authentication, but OAuth2 & OIDC require a patch

|

||||

|

||||

## Todo

|

||||

|

||||

Pending:

|

||||

|

||||

* [ ] Use CNI to create network namespaces and adapters

|

||||

* [ ] Add support for using container images in third-party public registries

|

||||

* [ ] Add support for using container images in private third-party registries

|

||||

* [ ] Monitor and restart any of the core components at runtime if the container stops

|

||||

* [ ] Bundle/package/automate installation of containerd - [see bootstrap from k3s](https://github.com/rancher/k3s)

|

||||

* [ ] Provide ufw rules / example for blocking access to everything but a reverse proxy to the gateway container

|

||||

* [ ] Provide [simple Caddyfile example](https://blog.alexellis.io/https-inlets-local-endpoints/) in the README showing how to expose the faasd proxy on port 80/443 with TLS

|

||||

|

||||

Done:

|

||||

|

||||

* [x] Provide a cloud-config.txt file for automated deployments of `faasd`

|

||||

* [x] Inject / manage IPs between core components for service to service communication - i.e. so Prometheus can scrape the OpenFaaS gateway - done via `/etc/hosts` mount

|

||||

* [x] Add queue-worker and NATS

|

||||

* [x] Create faasd.service and faas-containerd.service

|

||||

* [x] Create faasd.service and faasd-provider.service

|

||||

* [x] Self-install / create systemd service via `faasd install`

|

||||

* [x] Restart containers upon restart of faasd

|

||||

* [x] Clear / remove containers and tasks with SIGTERM / SIGINT

|

||||

* [x] Determine armhf/arm64 containers to run for gateway

|

||||

* [x] Configure `basic_auth` to protect the OpenFaaS gateway and faas-containerd HTTP API

|

||||

|

||||

## Hacking (build from source)

|

||||

|

||||

First run faas-containerd

|

||||

|

||||

```sh

|

||||

cd $GOPATH/src/github.com/alexellis/faas-containerd

|

||||

|

||||

# You'll need to install containerd and its pre-reqs first

|

||||

# https://github.com/alexellis/faas-containerd/

|

||||

|

||||

sudo ./faas-containerd

|

||||

```

|

||||

|

||||

Then run faasd, which brings up the gateway and Prometheus as containers

|

||||

|

||||

```sh

|

||||

cd $GOPATH/src/github.com/alexellis/faasd

|

||||

go build

|

||||

|

||||

# Install with systemd

|

||||

# sudo ./faasd install

|

||||

|

||||

# Or run interactively

|

||||

# sudo ./faasd up

|

||||

```

|

||||

|

||||

### Build and run (binaries)

|

||||

|

||||

```sh

|

||||

# For x86_64

|

||||

sudo curl -fSLs "https://github.com/alexellis/faasd/releases/download/0.3.1/faasd" \

|

||||

-o "/usr/local/bin/faasd" \

|

||||

&& sudo chmod a+x "/usr/local/bin/faasd"

|

||||

|

||||

# armhf

|

||||

sudo curl -fSLs "https://github.com/alexellis/faasd/releases/download/0.3.1/faasd-armhf" \

|

||||

-o "/usr/local/bin/faasd" \

|

||||

&& sudo chmod a+x "/usr/local/bin/faasd"

|

||||

|

||||

# arm64

|

||||

sudo curl -fSLs "https://github.com/alexellis/faasd/releases/download/0.3.1/faasd-arm64" \

|

||||

-o "/usr/local/bin/faasd" \

|

||||

&& sudo chmod a+x "/usr/local/bin/faasd"

|

||||

```

|

||||

|

||||

### At run-time

|

||||

|

||||

Look in `hosts` in the current working folder to get the IP for the gateway or Prometheus

|

||||

|

||||

```sh

|

||||

127.0.0.1 localhost

|

||||

172.19.0.1 faas-containerd

|

||||

172.19.0.2 prometheus

|

||||

|

||||

172.19.0.3 gateway

|

||||

172.19.0.4 nats

|

||||

172.19.0.5 queue-worker

|

||||

```

|

||||

|

||||

Since faas-containerd uses containerd heavily it is not running as a container, but as a stand-alone process. Its port is available via the bridge interface, i.e. netns0.

|

||||

|

||||

* Prometheus will run on the Prometheus IP plus port 8080 i.e. http://172.19.0.2:9090/targets

|

||||

|

||||

* faas-containerd runs on 172.19.0.1:8081

|

||||

|

||||

* Now go to the gateway's IP address as shown above on port 8080, i.e. http://172.19.0.3:8080 - you can also use this address to deploy OpenFaaS Functions via the `faas-cli`.

|

||||

|

||||

* basic-auth

|

||||

|

||||

You will then need to get the basic-auth password, it is written to `$GOPATH/src/github.com/alexellis/faasd/basic-auth-password` if you followed the above instructions.

|

||||

The default Basic Auth username is `admin`, which is written to `$GOPATH/src/github.com/alexellis/faasd/basic-auth-user`, if you wish to use a non-standard user then create this file and add your username (no newlines or other characters)

|

||||

|

||||

#### Installation with systemd

|

||||

|

||||

* `faasd install` - install faasd and containerd with systemd, run in `$GOPATH/src/github.com/alexellis/faasd`

|

||||

* `journalctl -u faasd` - faasd systemd logs

|

||||

* `journalctl -u faas-containerd` - faas-containerd systemd logs

|

||||

|

||||

### Appendix

|

||||

|

||||

Removing containers:

|

||||

|

||||

```sh

|

||||

echo faas-containerd gateway prometheus | xargs sudo ctr task rm -f

|

||||

|

||||

echo faas-containerd gateway prometheus | xargs sudo ctr container rm

|

||||

|

||||

echo faas-containerd gateway prometheus | xargs sudo ctr snapshot rm

|

||||

```

|

||||

|

||||

## Links

|

||||

|

||||

https://github.com/renatofq/ctrofb/blob/31968e4b4893f3603e9998f21933c4131523bb5d/cmd/network.go

|

||||

|

||||

https://github.com/renatofq/catraia/blob/c4f62c86bddbfadbead38cd2bfe6d920fba26dce/catraia-net/network.go

|

||||

|

||||

https://github.com/containernetworking/plugins

|

||||

|

||||

https://github.com/containerd/go-cni

|

||||

* [x] Configure `basic_auth` to protect the OpenFaaS gateway and faasd-provider HTTP API

|

||||

* [x] Setup custom working directory for faasd `/var/lib/faasd/`

|

||||

* [x] Use CNI to create network namespaces and adapters

|

||||

|

||||

|

||||

28

cloud-config.txt

Normal file

28

cloud-config.txt

Normal file

@ -0,0 +1,28 @@

|

||||

#cloud-config

|

||||

ssh_authorized_keys:

|

||||

- ssh-rsa AAAAB3NzaC1yc2EAAAADAQABAAABAQC8Q/aUYUr3P1XKVucnO9mlWxOjJm+K01lHJR90MkHC9zbfTqlp8P7C3J26zKAuzHXOeF+VFxETRr6YedQKW9zp5oP7sN+F2gr/pO7GV3VmOqHMV7uKfyUQfq7H1aVzLfCcI7FwN2Zekv3yB7kj35pbsMa1Za58aF6oHRctZU6UWgXXbRxP+B04DoVU7jTstQ4GMoOCaqYhgPHyjEAS3DW0kkPW6HzsvJHkxvVcVlZ/wNJa1Ie/yGpzOzWIN0Ol0t2QT/RSWOhfzO1A2P0XbPuZ04NmriBonO9zR7T1fMNmmtTuK7WazKjQT3inmYRAqU6pe8wfX8WIWNV7OowUjUsv alex@alexr.local

|

||||

|

||||

package_update: true

|

||||

|

||||

packages:

|

||||

- runc

|

||||

|

||||

runcmd:

|

||||

- curl -sLSf https://github.com/containerd/containerd/releases/download/v1.3.2/containerd-1.3.2.linux-amd64.tar.gz > /tmp/containerd.tar.gz && tar -xvf /tmp/containerd.tar.gz -C /usr/local/bin/ --strip-components=1

|

||||

- curl -SLfs https://raw.githubusercontent.com/containerd/containerd/v1.3.2/containerd.service | tee /etc/systemd/system/containerd.service

|

||||

- systemctl daemon-reload && systemctl start containerd

|

||||

- systemctl enable containerd

|

||||

- /sbin/sysctl -w net.ipv4.conf.all.forwarding=1

|

||||

- mkdir -p /opt/cni/bin

|

||||

- curl -sSL https://github.com/containernetworking/plugins/releases/download/v0.8.5/cni-plugins-linux-amd64-v0.8.5.tgz | tar -xz -C /opt/cni/bin

|

||||

- mkdir -p /go/src/github.com/openfaas/

|

||||

- cd /go/src/github.com/openfaas/ && git clone https://github.com/openfaas/faasd && git checkout 0.9.0

|

||||

- curl -fSLs "https://github.com/openfaas/faasd/releases/download/0.9.0/faasd" --output "/usr/local/bin/faasd" && chmod a+x "/usr/local/bin/faasd"

|

||||

- cd /go/src/github.com/openfaas/faasd/ && /usr/local/bin/faasd install

|

||||

- systemctl status -l containerd --no-pager

|

||||

- journalctl -u faasd-provider --no-pager

|

||||

- systemctl status -l faasd-provider --no-pager

|

||||

- systemctl status -l faasd --no-pager

|

||||

- curl -sSLf https://cli.openfaas.com | sh

|

||||

- sleep 60 && journalctl -u faasd --no-pager

|

||||

- cat /var/lib/faasd/secrets/basic-auth-password | /usr/local/bin/faas-cli login --password-stdin

|

||||

60

cmd/collect.go

Normal file

60

cmd/collect.go

Normal file

@ -0,0 +1,60 @@

|

||||

package cmd

|

||||

|

||||

import (

|

||||

"bufio"

|

||||

"context"

|

||||

"fmt"

|

||||

"io"

|

||||

"sync"

|

||||

|

||||

"github.com/containerd/containerd/runtime/v2/logging"

|

||||

"github.com/coreos/go-systemd/journal"

|

||||

"github.com/spf13/cobra"

|

||||

)

|

||||

|

||||

func CollectCommand() *cobra.Command {

|

||||

return collectCmd

|

||||

}

|

||||

|

||||

var collectCmd = &cobra.Command{

|

||||

Use: "collect",

|

||||

Short: "Collect logs to the journal",

|

||||

RunE: runCollect,

|

||||

}

|

||||

|

||||

func runCollect(_ *cobra.Command, _ []string) error {

|

||||

logging.Run(logStdio)

|

||||

return nil

|

||||

}

|

||||

|

||||

// logStdio copied from

|

||||

// https://github.com/containerd/containerd/pull/3085

|

||||

// https://github.com/stellarproject/orbit

|

||||

func logStdio(ctx context.Context, config *logging.Config, ready func() error) error {

|

||||

// construct any log metadata for the container

|

||||

vars := map[string]string{

|

||||

"SYSLOG_IDENTIFIER": fmt.Sprintf("%s:%s", config.Namespace, config.ID),

|

||||

}

|

||||

var wg sync.WaitGroup

|

||||

wg.Add(2)

|

||||

// forward both stdout and stderr to the journal

|

||||

go copy(&wg, config.Stdout, journal.PriInfo, vars)

|

||||

go copy(&wg, config.Stderr, journal.PriErr, vars)

|

||||

// signal that we are ready and setup for the container to be started

|

||||

if err := ready(); err != nil {

|

||||

return err

|

||||

}

|

||||

wg.Wait()

|

||||

return nil

|

||||

}

|

||||

|

||||

func copy(wg *sync.WaitGroup, r io.Reader, pri journal.Priority, vars map[string]string) {

|

||||

defer wg.Done()

|

||||

s := bufio.NewScanner(r)

|

||||

for s.Scan() {

|

||||

if s.Err() != nil {

|

||||

return

|

||||

}

|

||||

journal.Send(s.Text(), pri, vars)

|

||||

}

|

||||

}

|

||||

@ -2,10 +2,12 @@ package cmd

|

||||

|

||||

import (

|

||||

"fmt"

|

||||

"io"

|

||||

"os"

|

||||

"path"

|

||||

|

||||

systemd "github.com/alexellis/faasd/pkg/systemd"

|

||||

systemd "github.com/openfaas/faasd/pkg/systemd"

|

||||

"github.com/pkg/errors"

|

||||

|

||||

"github.com/spf13/cobra"

|

||||

)

|

||||

@ -16,29 +18,52 @@ var installCmd = &cobra.Command{

|

||||

RunE: runInstall,

|

||||

}

|

||||

|

||||

const workingDirectoryPermission = 0644

|

||||

|

||||

const faasdwd = "/var/lib/faasd"

|

||||

|

||||

const faasdProviderWd = "/var/lib/faasd-provider"

|

||||

|

||||

func runInstall(_ *cobra.Command, _ []string) error {

|

||||

|

||||

err := binExists("/usr/local/bin/", "faas-containerd")

|

||||

if err := ensureWorkingDir(path.Join(faasdwd, "secrets")); err != nil {

|

||||

return err

|

||||

}

|

||||

|

||||

if err := ensureWorkingDir(faasdProviderWd); err != nil {

|

||||

return err

|

||||

}

|

||||

|

||||

if basicAuthErr := makeBasicAuthFiles(path.Join(faasdwd, "secrets")); basicAuthErr != nil {

|

||||

return errors.Wrap(basicAuthErr, "cannot create basic-auth-* files")

|

||||

}

|

||||

|

||||

if err := cp("docker-compose.yaml", faasdwd); err != nil {

|

||||

return err

|

||||

}

|

||||

|

||||

if err := cp("prometheus.yml", faasdwd); err != nil {

|

||||

return err

|

||||

}

|

||||

|

||||

if err := cp("resolv.conf", faasdwd); err != nil {

|

||||

return err

|

||||

}

|

||||

|

||||

err := binExists("/usr/local/bin/", "faasd")

|

||||

if err != nil {

|

||||

return err

|

||||

}

|

||||

|

||||

err = binExists("/usr/local/bin/", "faasd")

|

||||

err = systemd.InstallUnit("faasd-provider", map[string]string{

|

||||

"Cwd": faasdProviderWd,

|

||||

"SecretMountPath": path.Join(faasdwd, "secrets")})

|

||||

|

||||

if err != nil {

|

||||

return err

|

||||

}

|

||||

|

||||

err = binExists("/usr/local/bin/", "netns")

|

||||

if err != nil {

|

||||

return err

|

||||

}

|

||||

|

||||

err = systemd.InstallUnit("faas-containerd")

|

||||

if err != nil {

|

||||

return err

|

||||

}

|

||||

|

||||

err = systemd.InstallUnit("faasd")

|

||||

err = systemd.InstallUnit("faasd", map[string]string{"Cwd": faasdwd})

|

||||

if err != nil {

|

||||

return err

|

||||

}

|

||||

@ -48,7 +73,7 @@ func runInstall(_ *cobra.Command, _ []string) error {

|

||||

return err

|

||||

}

|

||||

|

||||

err = systemd.Enable("faas-containerd")

|

||||

err = systemd.Enable("faasd-provider")

|

||||

if err != nil {

|

||||

return err

|

||||

}

|

||||

@ -58,7 +83,7 @@ func runInstall(_ *cobra.Command, _ []string) error {

|

||||

return err

|

||||

}

|

||||

|

||||

err = systemd.Start("faas-containerd")

|

||||

err = systemd.Start("faasd-provider")

|

||||

if err != nil {

|

||||

return err

|

||||

}

|

||||

@ -68,6 +93,9 @@ func runInstall(_ *cobra.Command, _ []string) error {

|

||||

return err

|

||||

}

|

||||

|

||||

fmt.Println(`Login with:

|

||||

sudo cat /var/lib/faasd/secrets/basic-auth-password | faas-cli login -s`)

|

||||

|

||||

return nil

|

||||

}

|

||||

|

||||

@ -78,3 +106,33 @@ func binExists(folder, name string) error {

|

||||

}

|

||||

return nil

|

||||

}

|

||||

|

||||

func ensureWorkingDir(folder string) error {

|

||||

if _, err := os.Stat(folder); err != nil {

|

||||

err = os.MkdirAll(folder, workingDirectoryPermission)

|

||||

if err != nil {

|

||||

return err

|

||||

}

|

||||

}

|

||||

|

||||

return nil

|

||||

}

|

||||

|

||||

func cp(source, destFolder string) error {

|

||||

file, err := os.Open(source)

|

||||

if err != nil {

|

||||

return err

|

||||

|

||||

}

|

||||

defer file.Close()

|

||||

|

||||

out, err := os.Create(path.Join(destFolder, source))

|

||||

if err != nil {

|

||||

return err

|

||||

}

|

||||

defer out.Close()

|

||||

|

||||

_, err = io.Copy(out, file)

|

||||

|

||||

return err

|

||||

}

|

||||

|

||||

116

cmd/provider.go

Normal file

116

cmd/provider.go

Normal file

@ -0,0 +1,116 @@

|

||||

package cmd

|

||||

|

||||

import (

|

||||

"encoding/json"

|

||||

"fmt"

|

||||

"io/ioutil"

|

||||

"log"

|

||||

"net/http"

|

||||

"os"

|

||||

"path"

|

||||

|

||||

"github.com/containerd/containerd"

|

||||

bootstrap "github.com/openfaas/faas-provider"

|

||||

"github.com/openfaas/faas-provider/logs"

|

||||

"github.com/openfaas/faas-provider/proxy"

|

||||

"github.com/openfaas/faas-provider/types"

|

||||

"github.com/openfaas/faasd/pkg/cninetwork"

|

||||

faasdlogs "github.com/openfaas/faasd/pkg/logs"

|

||||

"github.com/openfaas/faasd/pkg/provider/config"

|

||||

"github.com/openfaas/faasd/pkg/provider/handlers"

|

||||

"github.com/spf13/cobra"

|

||||

)

|

||||

|

||||

func makeProviderCmd() *cobra.Command {

|

||||

var command = &cobra.Command{

|

||||

Use: "provider",

|

||||

Short: "Run the faasd-provider",

|

||||

}

|

||||

|

||||

command.Flags().String("pull-policy", "Always", `Set to "Always" to force a pull of images upon deployment, or "IfNotPresent" to try to use a cached image.`)

|

||||

|

||||

command.RunE = func(_ *cobra.Command, _ []string) error {

|

||||

|

||||

pullPolicy, flagErr := command.Flags().GetString("pull-policy")

|

||||

if flagErr != nil {

|

||||

return flagErr

|

||||

}

|

||||

|

||||

alwaysPull := false

|

||||

if pullPolicy == "Always" {

|

||||

alwaysPull = true

|

||||

}

|

||||

|

||||

config, providerConfig, err := config.ReadFromEnv(types.OsEnv{})

|

||||

if err != nil {

|

||||

return err

|

||||

}

|

||||

|

||||

log.Printf("faasd-provider starting..\tService Timeout: %s\n", config.WriteTimeout.String())

|

||||

printVersion()

|

||||

|

||||

wd, err := os.Getwd()

|

||||

if err != nil {

|

||||

return err

|

||||

}

|

||||

|

||||

writeHostsErr := ioutil.WriteFile(path.Join(wd, "hosts"),

|

||||

[]byte(`127.0.0.1 localhost`), workingDirectoryPermission)

|

||||

|

||||

if writeHostsErr != nil {

|

||||

return fmt.Errorf("cannot write hosts file: %s", writeHostsErr)

|

||||

}

|

||||

|

||||

writeResolvErr := ioutil.WriteFile(path.Join(wd, "resolv.conf"),

|

||||

[]byte(`nameserver 8.8.8.8`), workingDirectoryPermission)

|

||||

|

||||

if writeResolvErr != nil {

|

||||

return fmt.Errorf("cannot write resolv.conf file: %s", writeResolvErr)

|

||||

}

|

||||

|

||||

cni, err := cninetwork.InitNetwork()

|

||||

if err != nil {

|

||||

return err

|

||||

}

|

||||

|

||||

client, err := containerd.New(providerConfig.Sock)

|

||||

if err != nil {

|

||||

return err

|

||||

}

|

||||

|

||||

defer client.Close()

|

||||

|

||||

invokeResolver := handlers.NewInvokeResolver(client)

|

||||

|

||||

userSecretPath := path.Join(wd, "secrets")

|

||||

|

||||

bootstrapHandlers := types.FaaSHandlers{

|

||||

FunctionProxy: proxy.NewHandlerFunc(*config, invokeResolver),

|

||||

DeleteHandler: handlers.MakeDeleteHandler(client, cni),

|

||||

DeployHandler: handlers.MakeDeployHandler(client, cni, userSecretPath, alwaysPull),

|

||||

FunctionReader: handlers.MakeReadHandler(client),

|

||||

ReplicaReader: handlers.MakeReplicaReaderHandler(client),

|

||||

ReplicaUpdater: handlers.MakeReplicaUpdateHandler(client, cni),

|

||||

UpdateHandler: handlers.MakeUpdateHandler(client, cni, userSecretPath, alwaysPull),

|

||||

HealthHandler: func(w http.ResponseWriter, r *http.Request) {},

|

||||

InfoHandler: handlers.MakeInfoHandler(Version, GitCommit),

|

||||

ListNamespaceHandler: listNamespaces(),

|

||||

SecretHandler: handlers.MakeSecretHandler(client, userSecretPath),

|

||||

LogHandler: logs.NewLogHandlerFunc(faasdlogs.New(), config.ReadTimeout),

|

||||

}

|

||||

|

||||

log.Printf("Listening on TCP port: %d\n", *config.TCPPort)

|

||||

bootstrap.Serve(&bootstrapHandlers, config)

|

||||

return nil

|

||||

}

|

||||

|

||||

return command

|

||||

}

|

||||

|

||||

func listNamespaces() func(w http.ResponseWriter, r *http.Request) {

|

||||

return func(w http.ResponseWriter, r *http.Request) {

|

||||

list := []string{""}

|

||||

out, _ := json.Marshal(list)

|

||||

w.Write(out)

|

||||

}

|

||||

}

|

||||

16

cmd/root.go

16

cmd/root.go

@ -14,6 +14,12 @@ func init() {

|

||||

rootCommand.AddCommand(versionCmd)

|

||||

rootCommand.AddCommand(upCmd)

|

||||

rootCommand.AddCommand(installCmd)

|

||||

rootCommand.AddCommand(makeProviderCmd())

|

||||

rootCommand.AddCommand(collectCmd)

|

||||

}

|

||||

|

||||

func RootCommand() *cobra.Command {

|

||||

return rootCommand

|

||||

}

|

||||

|

||||

var (

|

||||

@ -63,11 +69,11 @@ var versionCmd = &cobra.Command{

|

||||

func parseBaseCommand(_ *cobra.Command, _ []string) {

|

||||

printLogo()

|

||||

|

||||

fmt.Printf(

|

||||

`faasd

|

||||

Commit: %s

|

||||

Version: %s

|

||||

`, GitCommit, GetVersion())

|

||||

printVersion()

|

||||

}

|

||||

|

||||

func printVersion() {

|

||||

fmt.Printf("faasd version: %s\tcommit: %s\n", GetVersion(), GitCommit)

|

||||

}

|

||||

|

||||

func printLogo() {

|

||||

|

||||

214

cmd/up.go

214

cmd/up.go

@ -13,46 +13,55 @@ import (

|

||||

"time"

|

||||

|

||||

"github.com/pkg/errors"

|

||||

|

||||

"github.com/alexellis/faasd/pkg"

|

||||

"github.com/alexellis/k3sup/pkg/env"

|

||||

"github.com/sethvargo/go-password/password"

|

||||

"github.com/spf13/cobra"

|

||||

flag "github.com/spf13/pflag"

|

||||

|

||||

"github.com/openfaas/faasd/pkg"

|

||||

)

|

||||

|

||||

// upConfig are the CLI flags used by the `faasd up` command to deploy the faasd service

|

||||

type upConfig struct {

|

||||

// composeFilePath is the path to the compose file specifying the faasd service configuration

|

||||

// See https://compose-spec.io/ for more information about the spec,

|

||||

//

|

||||

// currently, this must be the name of a file in workingDir, which is set to the value of

|

||||

// `faasdwd = /var/lib/faasd`

|

||||

composeFilePath string

|

||||

|

||||

// working directory to assume the compose file is in, should be faasdwd.

|

||||

// this is not configurable but may be in the future.

|

||||

workingDir string

|

||||

}

|

||||

|

||||

func init() {

|

||||

configureUpFlags(upCmd.Flags())

|

||||

}

|

||||

|

||||

var upCmd = &cobra.Command{

|

||||

Use: "up",

|

||||

Short: "Start faasd",

|

||||

RunE: runUp,

|

||||

}

|

||||

|

||||

func runUp(_ *cobra.Command, _ []string) error {

|

||||

func runUp(cmd *cobra.Command, _ []string) error {

|

||||

|

||||

clientArch, clientOS := env.GetClientArch()

|

||||

printVersion()

|

||||

|

||||

if clientOS != "Linux" {

|

||||

return fmt.Errorf("You can only use faasd on Linux")

|

||||

}

|

||||

clientSuffix := ""

|

||||

switch clientArch {

|

||||

case "x86_64":

|

||||

clientSuffix = ""

|

||||

break

|

||||

case "armhf":

|

||||

case "armv7l":

|

||||

clientSuffix = "-armhf"

|

||||

break

|

||||

case "arm64":

|

||||

case "aarch64":

|

||||

clientSuffix = "-arm64"

|

||||

cfg, err := parseUpFlags(cmd)

|

||||

if err != nil {

|

||||

return err

|

||||

}

|

||||

|

||||

authFileErr := errors.Wrap(makeBasicAuthFiles(), "Could not create gateway auth files")

|

||||

if authFileErr != nil {

|

||||

return authFileErr

|

||||

services, err := loadServiceDefinition(cfg)

|

||||

if err != nil {

|

||||

return err

|

||||

}

|

||||

|

||||

services := makeServiceDefinitions(clientSuffix)

|

||||

basicAuthErr := makeBasicAuthFiles(path.Join(cfg.workingDir, "secrets"))

|

||||

if basicAuthErr != nil {

|

||||

return errors.Wrap(basicAuthErr, "cannot create basic-auth-* files")

|

||||

}

|

||||

|

||||

start := time.Now()

|

||||

supervisor, err := pkg.NewSupervisor("/run/containerd/containerd.sock")

|